Editor’s note: This article was last reviewed and updated on 19 November 2024.

There are clear differences between the Go and Rust programming languages.

This article will compare Go and Rust, evaluating each programming language for performance, concurrency, memory management, security features, and the overall developer experience.

Originally designed by Google’s engineers, Go was introduced to the public in 2009. It was created to offer an alternative to C++ that was easier to learn and write and was optimized to run on multi-core CPUs.

Since then, Go has been popular with developers who want to take advantage of its concurrency model. The language provides goroutines, lightweight threads managed by the Go runtime, which let you run functions concurrently. A significant advantage of Go is how easy goroutines are to use: putting the go keyword in front of a function call runs that function in a new goroutine.

Go’s concurrency model allows you to deploy workloads across multiple CPU cores, making it a very efficient language:

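```go
package main

import (
	"fmt"
	"time"
)

// f prints three numbered lines tagged with the caller's label.
func f(from string) {
	for i := 0; i < 3; i++ {
		fmt.Println(from, ":", i)
	}
}

func main() {
	// An ordinary, synchronous call: it runs to completion before moving on.
	f("direct")

	// The go keyword runs the same function concurrently in a new goroutine.
	go f("goroutine")

	// Crude wait so main doesn't exit before the goroutine finishes;
	// real code would typically use a sync.WaitGroup instead.
	time.Sleep(time.Second)
	fmt.Println("done")
}
```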

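Running the program first prints "direct : 0" through "direct : 2", because that call is synchronous, then "goroutine : 0" through "goroutine : 2" from the goroutine, and finally "done".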

Rust was designed to be high-performance — in fact, it’s the first answer to “Why Rust?” on the Rust website!

Unlike Go, Rust doesn’t use a garbage collector. Its ownership-based memory management frees memory deterministically when values go out of scope, and references let you pass objects around without making copies.
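To make the "no copies" point concrete, here is a minimal sketch (the function name total_len and the sample data are illustrative, not taken from any benchmark). The function borrows the vector through a shared reference, so the caller keeps ownership and no data is copied; the vector's heap memory is freed when it goes out of scope at the end of main, with no garbage collector involved.

```rust
// Borrow a slice of strings: no copy of the data is made,
// and the caller keeps ownership.
fn total_len(words: &[String]) -> usize {
    words.iter().map(|w| w.len()).sum()
}

fn main() {
    let words = vec![String::from("go"), String::from("rust")];

    // Pass a reference (`&words`); `words` is still usable afterward.
    let n = total_len(&words);
    println!("{} words, {} bytes of text", words.len(), n);

    // `words` is dropped here, at the end of its scope, and its heap
    // memory is released immediately rather than by a collector.
}
```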

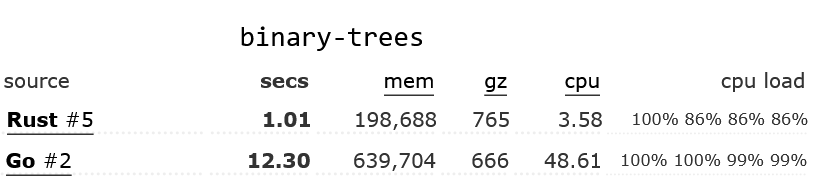

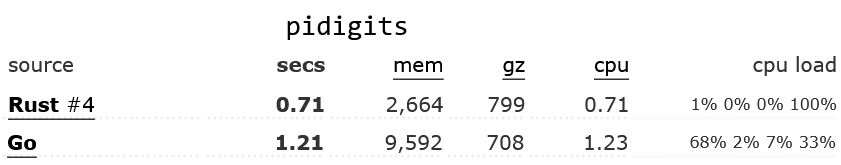

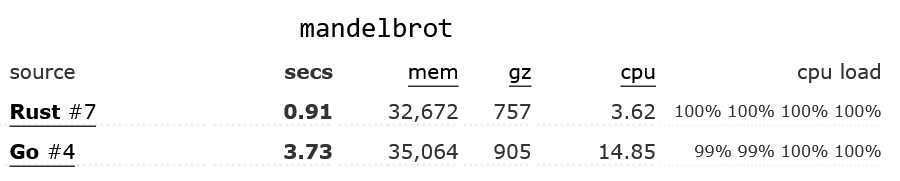

Individual benchmarks can be gamed and are tricky to interpret. To address this, the Benchmarks Game allows multiple programs for each language and compares their runtime, memory usage, and code complexity to give a better sense of the tradeoffs between languages.

For all of the tested algorithms, the most optimized Rust code was at least 30 percent faster than the most optimized Go code, and in many cases, it was significantly faster. For the binary-trees benchmark, the most optimized Rust code was 12 times faster than the most optimized Go code!

In many cases, even the least optimized Rust code was faster than the most optimized Go code. Here are a few examples of the most optimized Rust and Go code:

Both Rust and Go are good at scaling up to take advantage of many CPUs to process data in parallel. In Go, you can use a goroutine to process each piece of data, and a WaitGroup to wait for them all to finish. In Rust, rayon is a useful crate that makes it easy to iterate over a container in parallel.
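rayon is a third-party crate, so to keep this sketch dependency-free it uses the standard library's std::thread::scope (available since Rust 1.63) instead. It is a hand-rolled stand-in, not rayon's API: the slice is split into chunks, each chunk is summed on its own thread, and the partial sums are combined. With rayon, the same work is roughly a one-liner along the lines of data.par_iter().sum::<u64>(). The function name parallel_sum is hypothetical.

```rust
use std::thread;

// Sum `data` in parallel by giving each thread one chunk of the slice.
// `thread::scope` guarantees every spawned thread finishes before it
// returns, so the threads may borrow `data` without copying it.
fn parallel_sum(data: &[u64], num_threads: usize) -> u64 {
    if data.is_empty() {
        return 0;
    }
    let threads = num_threads.max(1);
    // Round up so every element lands in some chunk.
    let chunk_size = (data.len() + threads - 1) / threads;

    thread::scope(|s| {
        let handles: Vec<_> = data
            .chunks(chunk_size)
            .map(|chunk| s.spawn(move || chunk.iter().sum::<u64>()))
            .collect();

        // Wait for each thread and add up the partial sums.
        handles
            .into_iter()
            .map(|h| h.join().unwrap())
            .sum::<u64>()
    })
}

fn main() {
    let data: Vec<u64> = (1..=1_000).collect();
    println!("sum = {}", parallel_sum(&data, 4));
}
```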

Rust and Go both offer compelling security features for sensitive environments.

Rust’s zero-cost abstractions and ownership model provide memory safety without the need for garbage collection. This model integrates concepts of ownership, borrowing, and lifetimes to facilitate efficient memory allocation and deallocation.

Rust implements the ownership model with a borrow checker built into its compiler. The borrow checker ensures that references never outlive the data they point to; if that could happen, the compiler rejects the program with an error. As a result, this class of memory bugs is caught at compile time rather than at runtime.
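The sketch below shows the check in action; the function longest and the strings are illustrative. The lifetime parameter 'a ties the returned reference to both inputs, so the compiler knows the result can't outlive either string. The commented-out lines show a case the borrow checker rejects, because the reference would outlive the String it points to.

```rust
// The returned reference is valid only as long as *both* inputs are,
// which the lifetime parameter 'a expresses.
fn longest<'a>(a: &'a str, b: &'a str) -> &'a str {
    if a.len() >= b.len() {
        a
    } else {
        b
    }
}

fn main() {
    let outer = String::from("ownership");
    {
        let inner = String::from("borrow");
        // Accepted: `r` is only used while `outer` and `inner` are both alive.
        let r = longest(&outer, &inner);
        println!("longest: {}", r);
    }

    // Rejected if uncommented (error E0597, "`inner` does not live long
    // enough"), because `r` would still be used after `inner` is dropped:
    //
    // let r;
    // {
    //     let inner = String::from("borrow");
    //     r = longest(&outer, &inner);
    // }
    // println!("{}", r);
}
```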

Another safety measure is Rust’s type system: safe Rust has no null references. A value that might be absent is represented with the Option<T> type, which the compiler forces you to handle explicitly, preventing null reference errors. This is a critical feature because null pointers are a common source of bugs in many programming languages.
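Here is a minimal sketch of Option<T> in use. The lookup function find_id and its "position equals ID" rule are hypothetical; the point is that "not found" is part of the return type, so there is no null to dereference by accident.

```rust
// Look up a (hypothetical) user ID by name. `Option<u32>` makes
// "not found" part of the type, so callers must handle it.
fn find_id(names: &[&str], target: &str) -> Option<u32> {
    names.iter().position(|n| *n == target).map(|i| i as u32)
}

fn main() {
    let names = ["alice", "bob"];

    // The compiler requires both cases to be handled before using the value.
    match find_id(&names, "bob") {
        Some(id) => println!("bob has id {}", id),
        None => println!("bob not found"),
    }

    // `unwrap_or` supplies a default instead of risking a null dereference.
    let id = find_id(&names, "carol").unwrap_or(0);
    println!("carol's id (default 0): {}", id);
}
```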

However, Rust does let you use raw pointers, which can be null, in specific situations such as low-level operations or interfacing with foreign functions. Dereferencing a raw pointer requires an unsafe block, and you should approach these cases with caution: the compiler doesn’t verify what happens inside unsafe code, which can lead to potential safety risks.
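As a minimal sketch, the hypothetical function below reads through a raw pointer that may be null, for example one received from C code. Creating and null-checking a raw pointer is safe; the dereference is what needs unsafe, and the function is itself marked unsafe because the compiler cannot confirm that a non-null pointer is valid.

```rust
use std::ptr;

/// Returns `*p`, or `default` if `p` is null.
///
/// # Safety
/// `p` must be null or point to a valid, aligned, initialized `i32`.
unsafe fn read_or_default(p: *const i32, default: i32) -> i32 {
    if p.is_null() {
        default
    } else {
        // The compiler does not check this dereference; the caller's
        // guarantee in the doc comment is what makes it sound.
        unsafe { *p }
    }
}

fn main() {
    let value = 42;
    let valid: *const i32 = &value;
    let null: *const i32 = ptr::null();

    // Each call site must opt in to `unsafe` and uphold the contract.
    unsafe {
        println!("{}", read_or_default(valid, -1));
        println!("{}", read_or_default(null, -1));
    }
}
```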

Go’s simplicity helps reduce errors that may lead to security vulnerabilities. It uses explicit error checking instead of exceptions to ensure proper, predictable, and transparent error handling.

Go’s explicit error checking uses the built-in error type: functions return an error as one of their return values, and you evaluate and handle it directly depending on the scenario. Typically, you call a function and check whether the returned error is non-nil before continuing.

Go also has a built-in race detector that helps identify and fix race conditions in concurrent applications, contributing to its security profile. Race conditions occur when multiple goroutines access shared data at the same time and at least one of them modifies it. Without proper synchronization, the outcome is unpredictable and difficult to debug.

You can enable the race detector when you’re building or testing your apps with the -race flag. Go’s race detector operates at runtime, and when it detects a race condition, it provides a detailed report, including the location of the unsynchronized access.

As mentioned above, Go has strong support for concurrency. For example, say you’re running a web server that handles API requests. With goroutines, requests can be handled concurrently (Go’s net/http server runs each incoming connection in its own goroutine), spreading the work across all available CPU cores.

Goroutines are built into the Go language, while Rust only received native async/await syntax relatively recently (it was stabilized in Rust 1.39, in late 2019), and executing async code typically relies on a third-party runtime such as Tokio. Therefore, in terms of developer experience when handling concurrency, Go has an advantage. However, Rust is much better at guaranteeing memory safety.

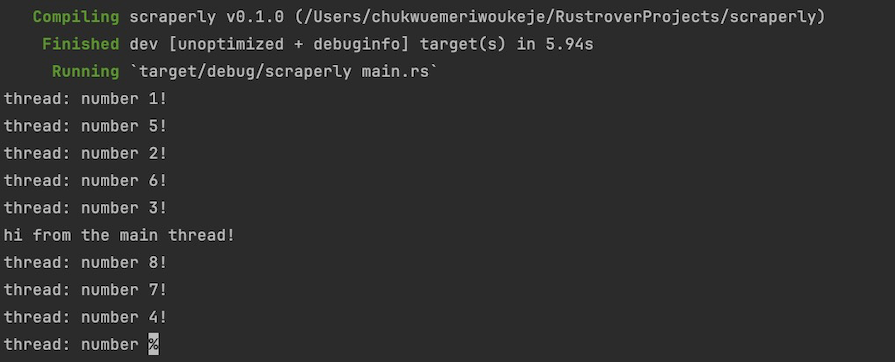

Here’s a simplified example of spawning threads in Rust:

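```rust
use std::thread;
use std::time::Duration;

fn main() {
    let mut handles = Vec::new();

    // Spawn nine threads; `move` copies the loop variable `i` into each closure.
    for i in 1..10 {
        handles.push(thread::spawn(move || {
            println!("thread: number {}!", i);
            thread::sleep(Duration::from_millis(100));
        }));
    }

    println!("hi from the main thread!");

    // Join every thread; without this, main could return and end the
    // process before the spawned threads get to print.
    for handle in handles {
        handle.join().unwrap();
    }
}
```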

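The output varies from run to run: the operating system decides when each thread is scheduled, so the "thread: number N!" lines and "hi from the main thread!" can appear in a different order each time.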

Concurrency has always been a thorny problem for developers. It’s not an easy task to guarantee memory-safe concurrency without compromising the developer experience. Rust’s strong focus on safety, however, led to what its community calls "fearless concurrency": the compiler rules out data races at compile time.

Rust builds on the concept of ownership to prevent unsynchronized access to resources and to prevent memory safety bugs.

Rust offers four different concurrency paradigms to help you avoid common memory safety pitfalls. We’ll take a closer look at two common paradigms in the following sections: channels and locks.

A channel transfers a message from one thread to another. This concept also exists in Go, but in Rust, sending a value through a channel transfers its ownership (which can include a pointer, such as a Box) to the receiving thread. The sender can no longer use the value afterward, which avoids race conditions over that resource.

By transferring ownership rather than sharing access, Rust enforces thread isolation for channels, reflecting its strong commitment to memory safety within its concurrency model.
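Here is a minimal sketch of that ownership transfer using the standard library's std::sync::mpsc channel; the function name sum_in_worker and the data are illustrative. The buffer is sent as a Box<[i64]>, a pointer to heap data, and once it has been sent, the compiler stops the sending thread from touching it.

```rust
use std::sync::mpsc;
use std::thread;

// Send a heap-allocated buffer to a worker thread. `send` moves ownership
// of the Box into the channel, so only the receiver can access it afterward.
fn sum_in_worker(data: Vec<i64>) -> i64 {
    let (tx, rx) = mpsc::channel::<Box<[i64]>>();

    let worker = thread::spawn(move || {
        // The receiving thread now owns the buffer exclusively.
        let buf = rx.recv().unwrap();
        buf.iter().sum::<i64>()
    });

    let boxed: Box<[i64]> = data.into_boxed_slice();
    tx.send(boxed).unwrap();
    // println!("{:?}", boxed); // error[E0382]: borrow of moved value: `boxed`

    worker.join().unwrap()
}

fn main() {
    println!("sum = {}", sum_in_worker(vec![1, 2, 3, 4]));
}
```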

In Rust, data is only accessible when the lock is held. Rust relies on the principle of locking data instead of code, which is in contrast to practices commonly seen in programming languages like Java.
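The sketch below illustrates locking data rather than code; the function count_in_parallel is hypothetical. The counter lives inside the Mutex, so the only way to reach it is through the guard returned by lock(), and the lock is released when that guard is dropped. Arc (an atomically reference-counted pointer) lets several threads own the same Mutex.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

// Increment a shared counter from several threads. The data itself is
// wrapped in the Mutex, so it cannot be touched without taking the lock.
fn count_in_parallel(threads: usize, increments: usize) -> usize {
    let counter = Arc::new(Mutex::new(0usize));
    let mut handles = Vec::new();

    for _ in 0..threads {
        let counter = Arc::clone(&counter);
        handles.push(thread::spawn(move || {
            for _ in 0..increments {
                // The guard derefs to the data; the lock is released
                // when the temporary guard is dropped.
                *counter.lock().unwrap() += 1;
            }
        }));
    }

    for handle in handles {
        handle.join().unwrap();
    }

    // Bind to a local so the guard is dropped before `counter` is.
    let total = *counter.lock().unwrap();
    total
}

fn main() {
    println!("total = {}", count_in_parallel(4, 1_000));
}
```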

The previously mentioned concept of ownership is one of Rust’s key features, elevating type safety to the next level and enabling memory-safe concurrency.

Rust’s strict compiler checks the variables you use and the references to them to rule out data races. When it detects code that could cause a data race, it refuses to compile it instead of letting undefined behavior reach runtime.

Combined with runtime bounds checking, this means safe Rust code doesn’t suffer from buffer overflows or data races; however, it has its disadvantages. You have to keep ownership and borrowing rules in mind throughout development, and it can be challenging to maintain constant vigilance over memory safety.

Next, let’s look at the learning curve associated with each language. Go was designed with simplicity in mind. Developers often refer to it as a “boring” language, which is to say that its limited set of built-in features makes it easy to adopt.

Furthermore, Go offers an easier alternative to C++ by hiding details such as memory allocation and deallocation behind its garbage collector.

Rust takes another approach, forcing you to think about concepts like memory safety. The concept of ownership and the ability to pass pointers make Rust a less attractive option to learn. When you’re constantly thinking about memory safety, you’re less productive, and your code is bound to be more complex.

The learning curve for Rust is also pretty steep compared to Go. It’s worth mentioning, however, that Go has a steeper learning curve than more dynamic languages such as Python and JavaScript.

Rust and Go have multiple vibrant, growing communities across technology topics and fields.

Rust’s communities have an inclusive culture and offer extensive documentation, forums, and chat platforms for support both during development and beyond.

The Rust project also runs an annual survey, and the community gathers at conferences such as RustConf; both provide insights into the state of the community and the trends and topics shaping the language’s evolution.

The Go community, backed by Google, has a large ecosystem and extensive resources ranging from official documentation and a dedicated blog to community-driven conferences like GopherCon.

There are many online forums, GitHub repositories, and Slack channels where you can request support from novice and experienced Go developers.

For modern software developers, being able to iterate quickly is very important, and so is being able to have multiple people working on the same project. Go and Rust achieve these goals in somewhat different ways.

The Go language is very simple to write and understand, which makes it easy for developers to collaborate. However, in Go code, you have to be very careful about error checking and avoiding nil accesses; the compiler doesn’t provide much help here, so you have to implicitly understand which variables might be nil and which ones are guaranteed to be non-nil.

Rust code is trickier to write and compile; developers have to have a good understanding of references, lifetimes, etc., to be successful. However, the Rust compiler does an excellent job of catching these issues (and emitting incredibly helpful error messages — in a recent survey, 90 percent of Rust developers approved of them!).

So while “Once your code compiles, it’s correct!” isn’t true for either language, it’s closer to being true for Rust, and this gives developers greater confidence when iterating on existing code.

Both Rust and Go have a solid assortment of features. As we’ve seen above, Go has built-in support for several useful concurrency mechanisms, namely goroutines and channels. The language supports interfaces and, as of Go v1.18, generics.

However, Go does not support inheritance, method or operator overloading, or assertions. Because Go was developed at Google, it’s no surprise that Go has excellent support for HTTP and other web APIs, and there’s also a large ecosystem of Go packages.

The Rust language is a bit more feature-full than Go; it supports traits (a more sophisticated version of interfaces), generics, macros, and rich built-in types for nullable types and errors, as well as the ? operator for easy error handling.
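A minimal sketch of several of these features together; the Shape trait, Square type, and helper functions are hypothetical. A trait defines shared behavior much like an interface, a generic function accepts any type implementing it, and the ? operator propagates a parse error to the caller without an explicit check.

```rust
use std::num::ParseIntError;

// A trait plays a role similar to an interface.
trait Shape {
    fn area(&self) -> f64;
}

struct Square {
    side: f64,
}

impl Shape for Square {
    fn area(&self) -> f64 {
        self.side * self.side
    }
}

// Generic over any type that implements `Shape`.
fn total_area<T: Shape>(shapes: &[T]) -> f64 {
    shapes.iter().map(|s| s.area()).sum()
}

// `?` returns early with the ParseIntError if parsing fails.
fn square_from_str(s: &str) -> Result<Square, ParseIntError> {
    let side: u32 = s.trim().parse()?;
    Ok(Square { side: f64::from(side) })
}

fn main() {
    match square_from_str("4") {
        Ok(sq) => println!("area = {}", sq.area()),
        Err(e) => println!("bad input: {}", e),
    }
    if let Err(e) = square_from_str("four") {
        println!("bad input: {}", e);
    }
}
```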

It’s also easier to call C/C++ code from Rust than it is from Go. Rust also has a large ecosystem of crates.
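As a minimal illustration of calling C, Rust can declare a foreign function in an extern "C" block and call it directly. This sketch assumes the C standard library's abs is available at link time, which is the usual case on mainstream platforms, and uses the unsafe extern syntax accepted since Rust 1.82 and required by the 2024 edition. The wrapper name c_abs is hypothetical. Calling C++ typically goes through an extern "C" wrapper or a binding generator such as bindgen.

```rust
// Declare a function from the C standard library. The compiler cannot
// verify foreign code, so calling it requires `unsafe`.
unsafe extern "C" {
    fn abs(input: i32) -> i32;
}

// Safe wrapper so the rest of the program never needs `unsafe`.
fn c_abs(x: i32) -> i32 {
    // C's abs(INT_MIN) is undefined behavior, so reject that input first.
    assert!(x != i32::MIN, "abs(INT_MIN) is undefined in C");
    unsafe { abs(x) }
}

fn main() {
    println!("abs(-3) via C = {}", c_abs(-3));
}
```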

Go works well for a wide variety of use cases, making it a great alternative to Node.js for creating web APIs. As noted by Loris Cro, “Go’s concurrency model is a good fit for server-side applications that must handle multiple independent requests.” This is exactly why Go provides goroutines.

What’s more, Go has built-in support for the HTTP web protocol. You can quickly design a small API using the built-in HTTP support and run it as a microservice. Therefore, Go fits well with the microservices architecture and serves the needs of API developers.

In short, Go is a good fit if you value development speed and prefer syntax simplicity over performance. On top of that, Go offers better code readability, which is an important criterion for large development teams.

Rust is a great choice when performance matters, such as when you’re processing large amounts of data. Rust also gives you fine-grained control over how threads behave and how resources are shared between threads.
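As one example of that control, the sketch below uses std::thread::Builder to give each worker a name and an explicit stack size, and shares one read-only table through an Arc instead of copying it into every thread. The function spawn_workers and the 256 KiB stack size are illustrative choices, not recommendations.

```rust
use std::sync::Arc;
use std::thread;

// Spawn named workers with an explicit stack size. All workers share one
// read-only table through an `Arc` rather than each getting a copy.
fn spawn_workers(table: Vec<u32>, workers: usize) -> Vec<(String, u32)> {
    let table = Arc::new(table);
    let mut handles = Vec::new();

    for id in 0..workers {
        let table = Arc::clone(&table);
        let handle = thread::Builder::new()
            .name(format!("worker-{}", id))
            .stack_size(256 * 1024) // 256 KiB; an arbitrary illustrative value
            .spawn(move || {
                let name = thread::current().name().unwrap_or("?").to_string();
                // Each worker reads its own slot; missing slots default to 0.
                let value = table.get(id).copied().unwrap_or(0);
                (name, value)
            })
            .expect("failed to spawn thread");
        handles.push(handle);
    }

    // Joining in spawn order keeps the results in worker order.
    handles.into_iter().map(|h| h.join().unwrap()).collect()
}

fn main() {
    for (name, value) in spawn_workers(vec![10, 20, 30], 3) {
        println!("{} read {}", name, value);
    }
}
```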

On the other hand, Rust comes with a steep learning curve and slows down development due to the extra complexity of its memory-safety rules. This is not necessarily a disadvantage: safe Rust guarantees you won’t encounter memory safety bugs such as use-after-free or data races, because the compiler checks every reference. For complex systems, this assurance can come in handy.

In choosing between Go and Rust, your decision depends on your project and team’s needs. Go excels in simplicity, concurrent programming, and rapid deployment of APIs, making it ideal for scalable microservices.

Rust, known for high performance, precise memory safety, and lack of garbage collection, is preferred for systems programming, tackling memory-intensive tasks, or leveraging advanced coroutines.

Whether you’re considering what Rust is good for or exploring Golang usage, both languages excel in different ways.

Evaluate your project’s complexity and long-term needs to decide effectively, as Rust and Go each bring unique advantages to the table, which we did our best to cover in this article.
