From spotting rage clicks to diagnosing onboarding struggles, session replay has become a standard tool for understanding how users experience your product. Traditional analytics often miss the why behind user behavior, and video-like replays of real interactions can surface UX issues that metrics alone can’t explain.

But implementing session replay comes with hurdles beyond legal compliance. Product teams need to balance insight with user trust, while also addressing concerns from leadership, legal, and engineering.

As a product manager, you need more than a compliance checklist to launch session replay responsibly. This guide provides practical frameworks, an implementation playbook, and a rollout checklist to help teams adopt session replay without compromising privacy.

Session replay tools capture different types of user actions. Some tools focus on event-level signals like clicks and scrolls, often aggregated into heatmaps. Others provide full video-style replays of user sessions.

Because capabilities vary so widely, you need to understand exactly what data a tool collects and the privacy risk that comes with it. As a rule of thumb, the more sensitive the data, the higher the risk.

High-risk data elements include:

Session replay is inappropriate for high-risk data. Flows that should not be recorded include financial transactions, medical workflows, and identity verification. In these cases, the risk of exposure outweighs the value of UX insight.

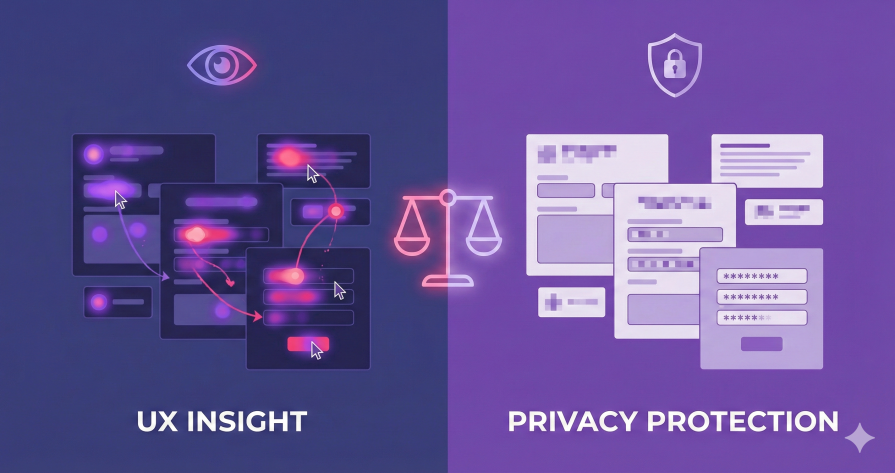

As a product manager, your goal is to roll out session replay without increasing privacy risks. This section outlines a practical decision framework for integrating session replay into your product workflow responsibly.

Start by classifying pages, workflows, and data classes by sensitivity level:

Session replay is inappropriate for high-risk activities such as financial transactions, medical workflows, and identity verification flows. In these cases, the risk of data exposure outweighs the value of UX insight.
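To make the classification concrete, here is a minimal sketch of a sensitivity map expressed as code. The route patterns, the three level names, and the capture modes are all hypothetical placeholders, not part of any replay vendor's API. The one deliberate design choice is that unknown routes are treated as high-risk, so a new page is never recorded by accident.

```python
from enum import Enum
from fnmatch import fnmatch


class Sensitivity(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical route patterns; replace with your product's real routes.
ROUTE_SENSITIVITY = [
    ("/checkout/*", Sensitivity.HIGH),         # financial transactions
    ("/records/*", Sensitivity.HIGH),          # medical workflows
    ("/verify-identity/*", Sensitivity.HIGH),  # identity verification
    ("/settings/*", Sensitivity.MEDIUM),
    ("/docs/*", Sensitivity.LOW),
]

# Hypothetical capture modes; high-risk routes are never captured.
CAPTURE_POLICY = {
    Sensitivity.HIGH: "no_capture",
    Sensitivity.MEDIUM: "events_only",
    Sensitivity.LOW: "replay_masked",
}


def classify(path: str) -> Sensitivity:
    """Return the sensitivity of a path; unlisted paths default to HIGH."""
    for pattern, level in ROUTE_SENSITIVITY:
        if fnmatch(path, pattern):
            return level
    return Sensitivity.HIGH


def capture_mode(path: str) -> str:
    """Map a path to the capture mode its sensitivity level allows."""
    return CAPTURE_POLICY[classify(path)]
```

Calling `capture_mode("/checkout/payment")` returns `"no_capture"`, and so does any path that nobody has classified yet.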

You must determine how much data you actually need. The goal should be minimal viable capture, which reduces overall risk while still enabling useful insights.

High-fidelity tools, such as DOM capture with form inputs, provide rich context but increase the likelihood of collecting sensitive data. Low-fidelity options like heatmaps or event logs often surface the same usability issues with far less risk.

For example, if users aren’t completing a form, event logs may reveal where drop-off occurs but not why. That approach is safer but limits your level of insight. A middle ground is session replay with masked inputs, which reveals user behavior and friction without meaningfully increasing data risk.
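The masked-input middle ground can be sketched in a few lines. Real replay SDKs apply masking in the browser before data leaves the device; the Python below only illustrates the allowlist logic, and the field names are assumptions made for the example.

```python
MASK = "****"

# Hypothetical fields that were reviewed and judged safe to show unmasked.
UNMASKED_FIELDS = {"search_query", "plan_choice"}


def mask_form_snapshot(fields: dict[str, str]) -> dict[str, str]:
    """Mask every non-empty value unless the field is explicitly allowlisted.

    Empty values stay empty so a reviewer can still see which fields
    the user skipped, without seeing anything the user typed.
    """
    return {
        name: value if name in UNMASKED_FIELDS or value == "" else MASK
        for name, value in fields.items()
    }
```

Masking by default and allowlisting exceptions means a newly added field is hidden until someone decides otherwise, which is the safer failure mode.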

You should explicitly weigh the value of deeper insight against privacy exposure. Documenting the expected ROI helps justify these tradeoffs to stakeholders.

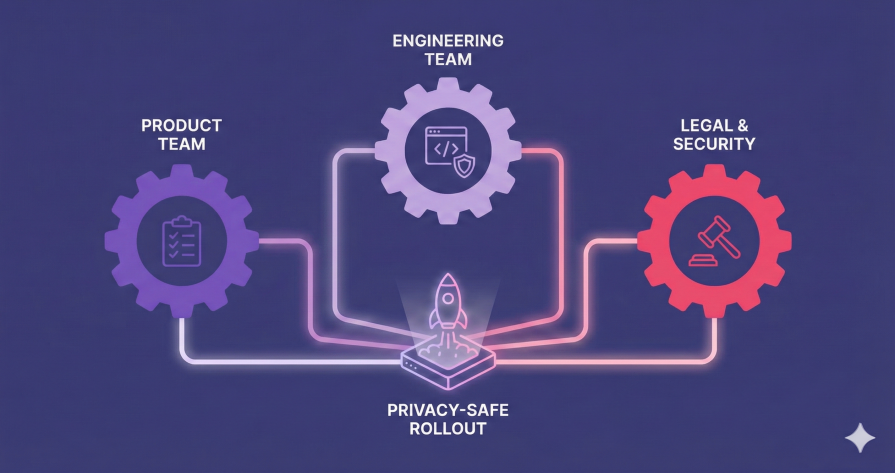

Privacy-safe session replay requires clear ownership across teams. Responsibilities typically break down as follows:

Product managers

Engineering

Legal or security

You should establish multiple sign-off checkpoints throughout implementation to maintain alignment and trust. Common checkpoints include:

Implementing session replay involves many moving parts. This playbook outlines what to configure, what to avoid, and how to validate your setup before launch.

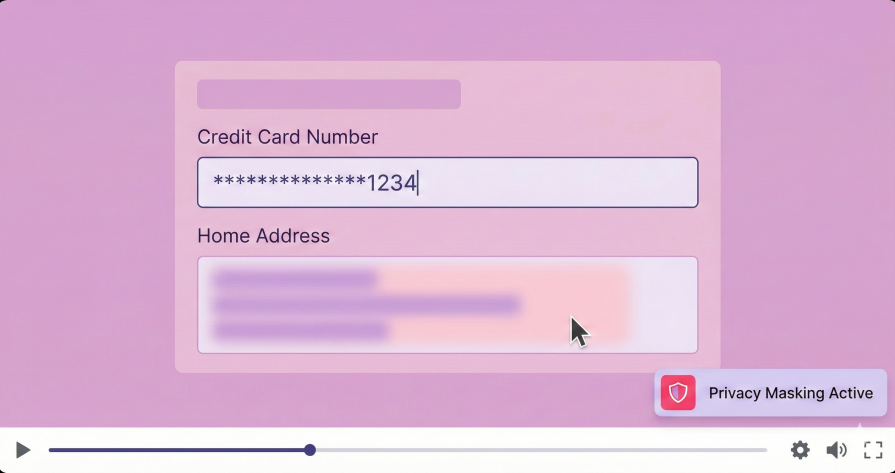

Privacy protections should be enforced from day one. Common safe defaults include:

Misconfigured session replay can introduce risk instead of reducing it. Avoid common anti-patterns like:

Before launching, test privacy configurations to ensure they behave as intended. Create a checklist that lists each test case, its expected result, and whether it passed. For example:

| Test case | Expected result | Pass / fail |
| --- | --- | --- |
| PII form submit | Fields show “****” | |
| Admin route | No replay generated | |
| Authentication token | Token stripped from network requests | |
| Rage click error | Interaction captured with masking applied | |
| Mobile view | Gestures captured, no text recorded | |
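Several of these checks can be automated against sessions recorded from test accounts. The sketch below covers the PII, admin route, and authentication token rows. It assumes a hypothetical exported session shape (`route`, `recorded`, `fields`, `requests`); your tool's export format will differ, so treat the keys and header names as placeholders.

```python
MASK = "****"

# Assumed header names that carry credentials, compared case-insensitively.
SENSITIVE_HEADERS = {"authorization", "cookie", "x-api-key"}

# Hypothetical route prefixes that must never produce a replay.
EXCLUDED_PREFIXES = ("/admin",)


def check_session(session: dict) -> dict[str, bool]:
    """Evaluate one captured test session; True means the check passed."""
    is_excluded = session["route"].startswith(EXCLUDED_PREFIXES)
    return {
        # Every captured form value must be the mask string.
        "pii_fields_masked": all(
            value == MASK for value in session["fields"].values()
        ),
        # Excluded routes must not have been recorded at all.
        "admin_route_not_recorded": not (is_excluded and session["recorded"]),
        # No captured network request may still carry a credential header.
        "auth_token_stripped": all(
            name.lower() not in SENSITIVE_HEADERS
            for request in session["requests"]
            for name in request["headers"]
        ),
    }
```

A session passes when every value in the returned dictionary is `True`, which maps directly onto the pass / fail column.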

QA testing typically involves using test accounts with synthetic data. A common workflow includes:

These results also give you a concrete way to demonstrate both the value and safety of session replay during stakeholder demos.

Different teams will have different concerns about adopting session replay. As a PM, your role is to address those concerns directly while clearly articulating the value session replays provide.

Legal or security teams focus on compliance, risk, and adherence to industry standards. Session replay tools may initially be viewed as unnecessary exposure rather than added value.

Gaining approval involves demonstrating that privacy safeguards are built into both the tooling and the process. You should be prepared to discuss privacy-first features and policies in concrete terms. Legal teams typically want clarity on:

Engineering buy-in comes from framing session replay in terms of architecture, effort, and impact. Be clear about how replays integrate with the existing stack and set realistic expectations for implementation.

For example:

Position session replay as a practical enabler for UX improvements, not a monitoring layer. Replays make it easier to tie usability issues to measurable outcomes like reduced drop-off or fewer support tickets, helping teams prioritize the work without friction.

Leadership may view session replay as an added cost or potential privacy risk. Proactively address both concerns by explaining the safeguards in place and the business value of responsible data collection.

Privacy-focused session replay helps teams diagnose issues faster, understand real user behavior, and resolve bugs more effectively. These insights directly improve engagement and retention by showing exactly where experiences break down.

Over time, this ability to make better, faster, data-informed decisions becomes a competitive advantage that justifies the investment.

Even well-intentioned teams can ship misconfigured session replays. Below are common scenarios that lead to preventable privacy incidents.

An ecommerce site launched session replay without excluding checkout forms. Real credit card numbers appeared in roughly two percent of recorded sessions and were only discovered during a security audit. Basic pre-launch QA testing would’ve surfaced the issue before production.
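One way pre-launch QA could catch this is to scan captured text for card-like numbers. The sketch below is a heuristic, not a guarantee: it looks for 13 to 19 digit sequences and keeps those that pass the Luhn checksum used by payment cards. Other long numbers can pass the checksum by chance, so hits need human review.

```python
import re

# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        digit = int(char)
        if index % 2 == 1:  # double every second digit from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def find_card_numbers(text: str) -> list[str]:
    """Return digit strings in text that look like valid card numbers."""
    hits = []
    for match in CARD_CANDIDATE.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            hits.append(digits)
    return hits
```

Running this over sessions recorded with synthetic test cards, such as the widely used test number 4111 1111 1111 1111, should return no hits if masking and exclusions are working.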

A SaaS platform introduced a new identity verification flow but failed to update its session replay configuration. User IDs and uploaded documents were captured through unmasked file inputs. A configuration review tied to feature deployment would likely have prevented the exposure.
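A configuration review tied to deployment can be as simple as a check that fails the build when a route has no explicit replay policy. This is a minimal sketch under assumptions: the route list, the policy dictionary, and the mode names are hypothetical and would come from your own router and replay configuration.

```python
# Hypothetical capture modes a policy entry is allowed to use.
ALLOWED_MODES = {"no_capture", "events_only", "replay_masked"}


def review_replay_config(
    app_routes: list[str], replay_policy: dict[str, str]
) -> list[str]:
    """Return a list of problems; an empty list means the config is complete."""
    problems = []
    for route in sorted(app_routes):
        mode = replay_policy.get(route)
        if mode is None:
            problems.append(f"{route}: no replay policy defined")
        elif mode not in ALLOWED_MODES:
            problems.append(f"{route}: unknown mode '{mode}'")
    return problems
```

If a new `/verify-identity` route ships without a policy entry, the function reports it, and the release can be blocked until someone makes an explicit decision about that flow.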

A post-launch plan helps prevent these common failure modes. It ensures replay configurations remain accurate as the product evolves. PMs should monitor:

Use this step-by-step checklist to roll out session replay responsibly and with confidence:

Session replay only works when privacy is treated as a first-order requirement from day one. With the right configurations and guardrails in place, replay tools can deliver meaningful insight into UX and user behavior without compromising user trust.

As a product manager, you play a critical role in making that balance work. By setting clear boundaries, enforcing privacy-safe defaults, and aligning teams early, you can turn session replay into a trust-preserving analytics capability rather than a liability.

Use this guide to launch session replay responsibly, address stakeholder concerns with confidence, and build product insights that earn and retain user trust.

Featured image source: IconScout

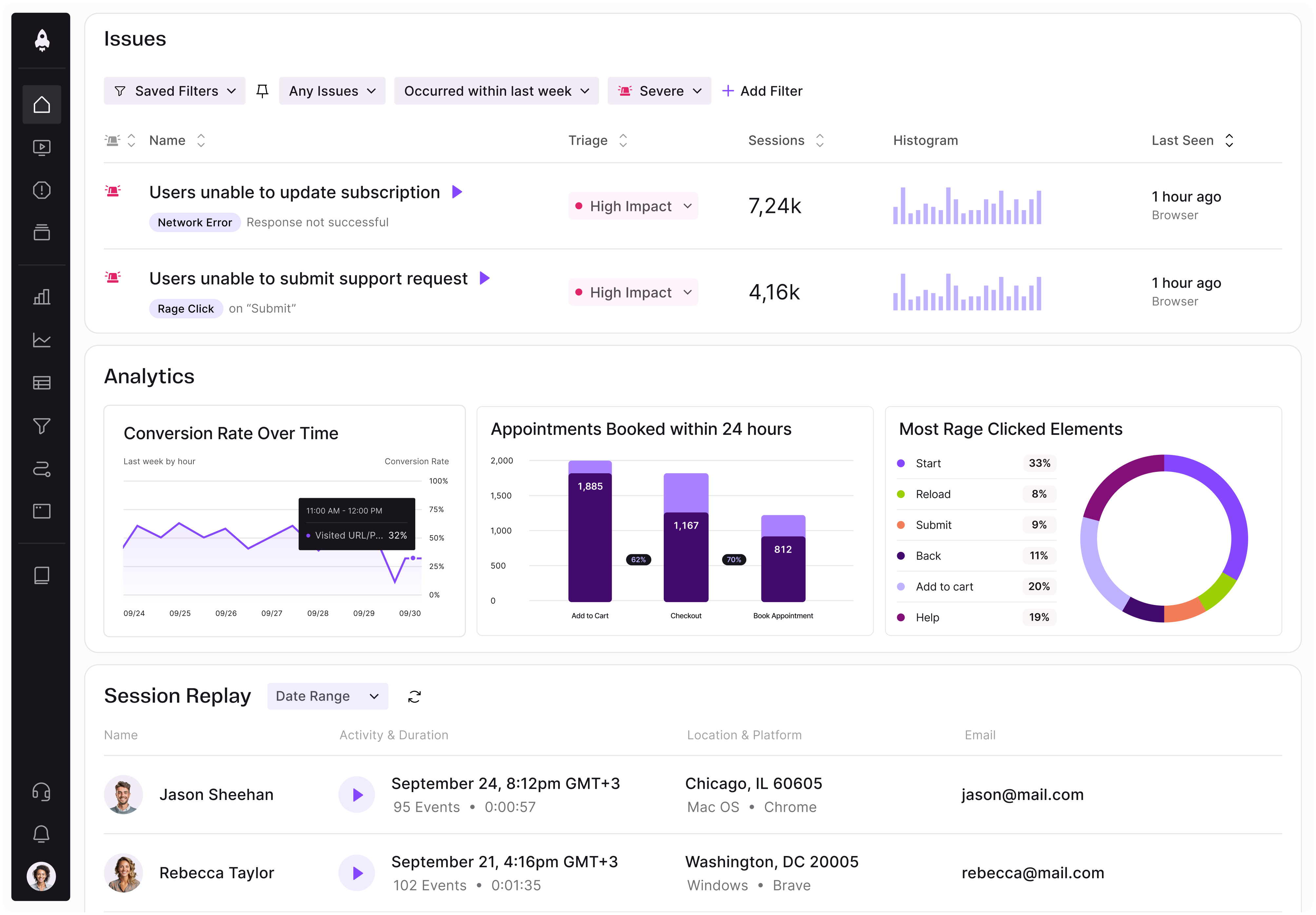

LogRocket identifies friction points in the user experience so you can make informed decisions about product and design changes that must happen to hit your goals.

With LogRocket, you can understand the scope of the issues affecting your product and prioritize the changes that need to be made. LogRocket simplifies workflows by allowing Engineering, Product, UX, and Design teams to work from the same data as you, eliminating any confusion about what needs to be done.

Get your teams on the same page — try LogRocket today.

Learn how to spot PMF erosion early, diagnose the cause, and help your product recover before decline turns into panic.

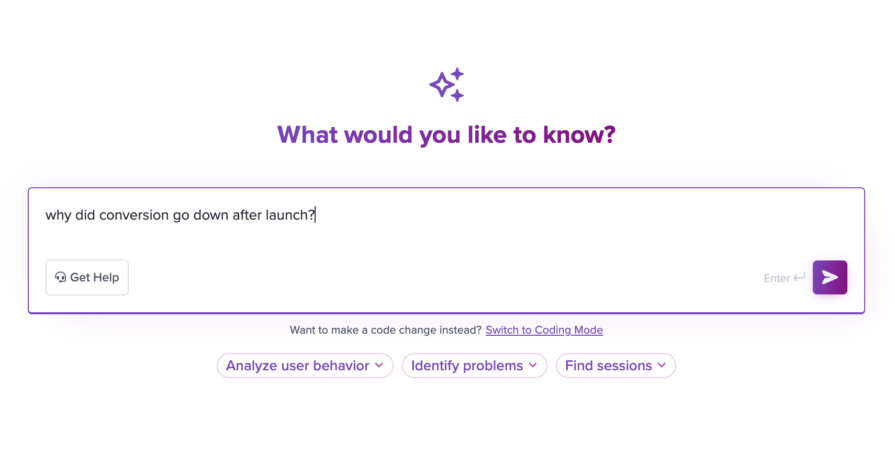

Introducing Ask Galileo: AI that answers any question about your users’ experience in seconds by unifying session replays, support tickets, and product data.

The rise of the product builder. Learn why modern PMs must prototype, test ideas, and ship faster using AI tools.

Rahul Chaudhari covers Amazon’s “customer backwards” approach and how he used it to unlock $500M of value via a homepage redesign.