Hypothesis validation is the bread and butter of product discovery. Understanding what should be prioritized and why is the most important task of a product manager. It doesn’t matter how well you validate your findings if you’re trying to answer the wrong question.

A question is as good as the answer it can provide. If your hypothesis is well written, but you can’t read its conclusion, it’s a bad hypothesis. Alternatively, if your hypothesis has embedded bias and answers itself, it’s also not going to help you.

There are several different tools available to build hypotheses, and it would be exhaustive to list them all. Apart from being superficial, focusing on the frameworks alone shifts the attention away from the hypothesis itself.

In this article, you will learn what a hypothesis is, the fundamental aspects of a good hypothesis, and what you should expect to get out of one.

Mitigating the four product risks is the reason why product managers exist in the first place and it’s where good hypothesis crafting starts.

The four product risks are assessments of everything that could go wrong with your delivery. Our natural thought process is to focus on the happy path at the expense of unknown traps. The risks are a constant reminder that knowing why something won’t work is probably more important than knowing why something might work.

These are the fundamental questions that should fuel your hypothesis creation:

Is this hypothesis the best one to validate now? Is this the most cost-effective initiative we can take? Will this answer help us achieve our goals? How much money can we make from it?

Has the user manifested interest in this solution? Will they be able to use it? Does it solve our users’ challenges? Is it aesthetically pleasing? Is it vital for the user, or just a luxury?

Do we have the resources and know-how to deliver it? Can we scale this solution? How much will it cost? Will it depreciate fast? Is it the best cost-effective solution? Will it deliver on what the user needs?

Is this solution safe both for the user and for the business? Is it inclusive enough? Is there a risk of public opinion whiplash? Is our solution enabling wrongdoers? Are we jeopardizing some to privilege others?

There is an infinite amount of questions that can surface from these risks, and most of those will be context dependent. Your industry, company, marketplace, team composition, and even the type of product you handle will impose different questions, but the risks remain the same.

Assuming you came up with a hefty batch of risks to validate, you must now address them. To address a risk, you could do one of three things: collect concrete evidence that you can mitigate that risk, infer possible ways you can mitigate a risk and, finally, deep dive into that risk because you’re not sure about its repercussions.

This three way road can be illustrated by a CSD matrix:

Everything you’re sure can help you to mitigate whatever risk. An example would be, on the risk “how to build it,” assessing if your engineering team is capable of integrating with a certain API. If your team has made it a thousand times in the past, it’s not something worth validating. You can assume it is true and mark this particular risk as solved.

To put it simply, a supposition is something that you think you know, but you’re not sure. This is the most fertile ground to explore hypotheses, since this is the precise type of answer that needs validation. The most common usage of supposition is addressing the “is it relevant for the user” risk. You presume that clients will enjoy a new feature, but before you talk to them, you can’t say you are sure.

Doubts are different from suppositions because they have no answer whatsoever. A doubt is an open question about a risk which you have no clue on how to solve. A product manager that tries to mitigate the “is it ethical to deliver” risk from an industry that they have absolute no familiarity with is poised to generate a lot of doubts, but no suppositions or certainties. Doubts are not good hypothesis sources, since you have no idea on how to validate it.

A hypothesis worth validating comes from a place of uncertainty, not confidence or doubt. If you are sure about a risk mitigation, coming up with a hypothesis to validate it is just a waste of time and resources. Alternatively, trying to come up with a risk assessment for a problem you are clueless about will probably generate hypotheses disconnected with the problem itself.

That said, it’s important to make it clear that suppositions are different from hypotheses. A supposition is merely a mental exercise, creativity executed. A hypothesis is a measurable, cartesian instrument to transform suppositions into certainties, therefore making sure you can mitigate a risk.

A good hypothesis comes from a supposed solution to a specific product risk. That alone is good enough to build half of a good hypothesis, but you also need to have measurable confidence.

You’ll rarely transform a supposition into a certainty without an objective. Returning to the API example we gave when talking about certainties, you know the “can we build it” risk doesn’t need validation because your team has made tens of API integrations before. The “tens” is the quantifiable, measurable indication that gives you the confidence to be sure about mitigating a risk.

What you need from your hypothesis is exactly this quantifiable evidence, the number or hard fact able to give you enough confidence to treat your supposition as a certainty. To achieve that goal, you must come up with a target when creating the hypothesis. A hypothesis without a target can’t be validated, and therefore it’s useless.

Imagine you’re the product manager for an ecommerce app. Your users are predominantly mobile users, and your objective is to increase sales conversions. After some research, you came across the one click check-out experience, made famous by Amazon, but broadly used by ecommerces everywhere.

You know you can build it, but it’s a huge endeavor for your team. You best make sure your bet on one click check-out will work out, otherwise you’ll waste a lot of time and resources on something that won’t be able to influence the sales conversion KPI.

You identify your first risk then: is it valuable to the business?

Literature is abundant on the topic, so you are almost sure that it will bear results, but you’re not sure enough. You only can suppose that implementing the one click functionality will increase sales conversion.

During case study and data exploration, you have reasons to believe that a 30 percent increase of sales conversion is a reasonable target to be achieved. To make sure one click check-out is valuable to the business then, you would have a hypothesis such as this:

We believe that if we implement a one-click checkout on our ecommerce, we can grow our sales conversion by 30 percent

This hypothesis can be played with in all sorts of ways. If you’re trying to improve user-experience, for example, you could make it look something like this:

We believe that if we implement a one-click checkout on our ecommerce, we can reduce the time to conversion by 10 percent

You can also validate different solutions having the same criteria, building an opportunity tree to explore a multitude of hypothesis to find the better one:

We believe that if we implement a user review section on the listing page, we can grow our sales conversion by 30 percent

Sometimes you’re clueless about impact, or maybe any win is a good enough win. In that case, your criteria of validation can be a fact rather than a metric:

We believe that if we implement a one-click checkout on our ecommerce, we can reduce the time to conversion

As long as you are sure of the risk you’re mitigating, the supposition you want to transform into a certainty, and the criteria you’ll use to make that decision, you don’t need to worry so much about “right” or “wrong” when it comes to hypothesis formatting.

That’s why I avoided following up frameworks on this article. You can apply a neat hypothesis design to your product thinking, but if you’re not sure why you’re doing it, you’ll extract nothing out of it.

The final piece of this puzzle comes after the hypothesis crafting. A hypothesis is only as good as the validation it provides, and that means you have to test it.

If we were to test the first hypothesis we crafted, “we believe that if we implement a one-click checkout on our ecommerce, we can grow our sales conversion by 30 percent,” you could come up with a testing roadmap to build up evidence that would eventually confirm or deny your hypothesis. Some examples of tests are:

A/B testing — Launch a quick and dirty one-click checkout MVP for a controlled group of users and compare their sales conversion rates against a control group. This will provide direct evidence on the effect of the feature on sales conversions

Customer support feedback — Track any inquiries or complaints related to the checkout process. You can use organic user complaints as an indirect measure of latent demand for one-click checkout feature

User survey — Ask why carts were abandoned for a cohort of shoppers that left the checkout step close to completion. Their reasons might indicate the possible success of your hypothesis

Effective hypothesis crafting is at the center of product management. It’s the link between dealing with risks and coming up with solutions that are both viable and valuable. However, it’s important to recognize that the formulation of a hypothesis is just the first step.

The real value of a hypothesis is made possible by rigorous testing. It’s through systematic validation that product managers can transform suppositions into certainties, ensuring the right product decisions are made. Without validation, even the most well-thought-out hypothesis remains unverified.

Featured image source: IconScout

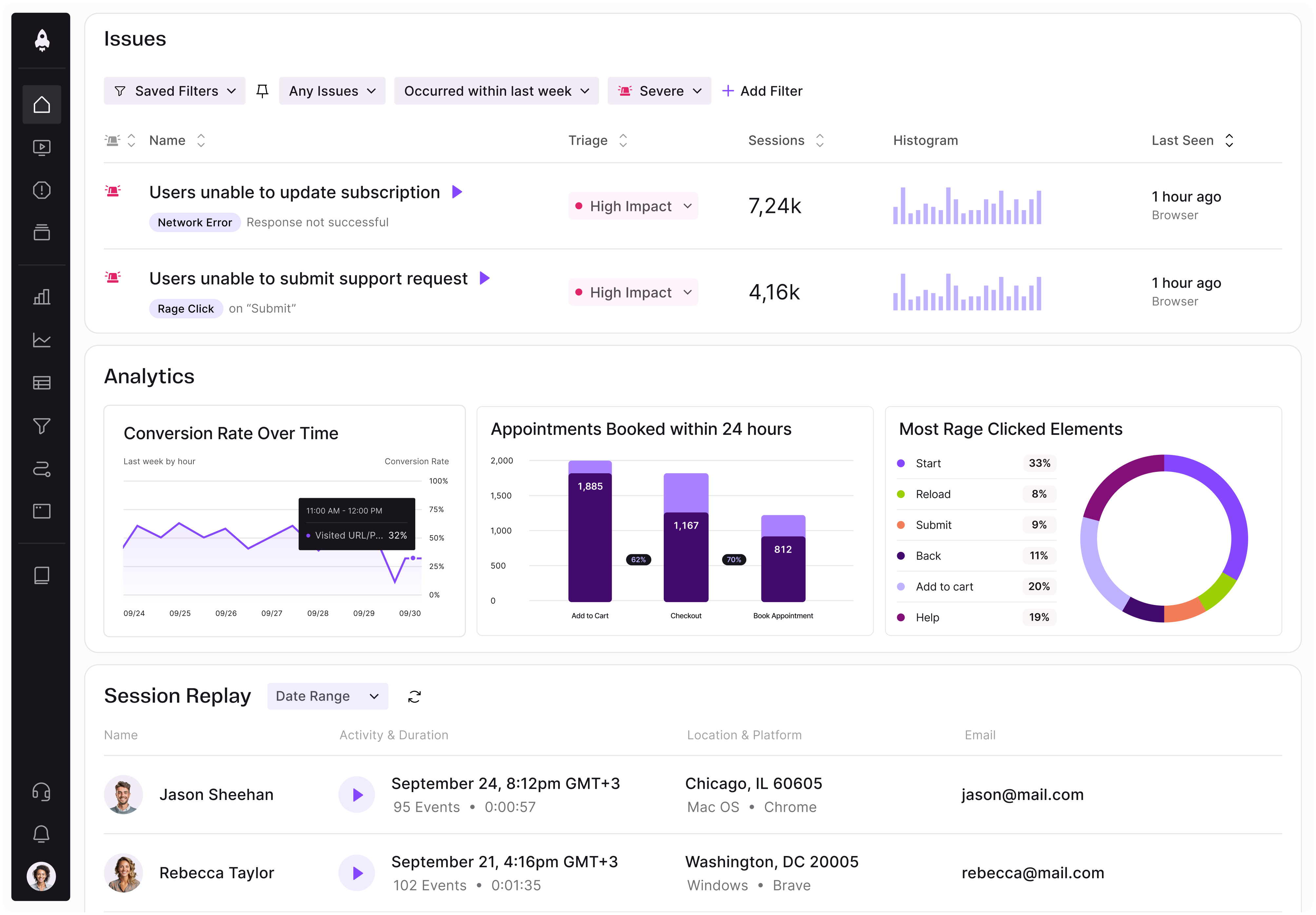

LogRocket identifies friction points in the user experience so you can make informed decisions about product and design changes that must happen to hit your goals.

With LogRocket, you can understand the scope of the issues affecting your product and prioritize the changes that need to be made. LogRocket simplifies workflows by allowing Engineering, Product, UX, and Design teams to work from the same data as you, eliminating any confusion about what needs to be done.

Get your teams on the same page — try LogRocket today.

AI can make PM thinking generic. Learn how to use it without losing judgment, user nuance, or product differentiation.

Learn a 3-lens framework that helps product managers shift stakeholder requests from feature ideas to real problems and outcomes.

CPO and PhD Jen Wang covers “The Zone of Absorption” and why product teams are struggling to build when AI is shifting faster than anyone can keep up.

Explore four product team structures, when each works best, and how to choose the right model for speed, ownership, and clarity.