For years, product managers have led teams with the belief that shipping more features creates more value.

That belief often sends teams in the wrong direction. Output does not automatically translate into impact. Completing story points, closing tickets, and increasing sprint velocity can show steady activity, but none of those signals confirm that your product is delivering better outcomes for users.

This is where many teams get stuck. They reward motion instead of asking whether anything meaningful changed. A team can ship quickly, stay busy, and still fall short of creating real customer value.

Outputs show what the team delivered. Outcomes show what changed because of that work. If your main question is, “What features did we release?” replace it with, “What improved after we released them?”

A common feature factory mistake starts with a goal like “improve onboarding.” Instead of treating that goal as a behavior change to influence, teams turn it into a delivery task. They launch a new onboarding flow, yet activation stays flat because the work focuses on shipping something new rather than removing the friction that keeps users from reaching value.

In this article, you’ll learn how to shift from output tracking to outcome ownership and how to focus on metrics that reflect real user behavior, product value, and business impact.

Most PMs agree that outcomes matter. The problem is few can define what that means in practice.

Outcome-driven product management focuses on changing user behavior, not just completing a feature. That shift changes your day-to-day focus from shipping work to creating measurable impact.

Outputs are the things a development team produces, such as APIs, UI updates, model improvements, or backlog tickets. Outcomes are the changes those outputs create, such as higher activation, faster task completion, lower support volume, or stronger retention.

The test is simple. If your job is to deliver features, you’re still operating with an output mindset. If your job is to change user behavior in a measurable way, you’re working from an outcome-driven one.

I learned this firsthand in one of my product domains. Our backlog was full, and we were trying to improve too many features at once. We kept getting a request from customers to improve the distance matrix. We delivered the feature they asked for, but the complaints continued. Our usage data and internal metrics suggested the feature was working, so the feedback didn’t make sense on the surface.

We decided to visit customers and observe the workflow in person.

As we listened, customers repeated the same complaint: users were walking longer distances than they should, and the distance matrix seemed to be the cause. Then we watched users interact with the feature.

What we found surprised us. The distance matrix worked correctly. The real problem was that the product only showed the next location, not where to turn or how to get there. Users chose their own path and often took a longer route.

We added a small directional cue that showed users where to turn to reach the next destination. Complaints dropped almost immediately.

That experience made the lesson clear. When you focus only on delivering the requested feature, you can miss the actual source of value. We solved the request first. We solved the real problem only after we observed the behavior we wanted to change.

After that, we started reframing feature requests as outcome statements. For example:

This kind of framing makes success measurable. It forces the team to define what should change, by how much, and for whom. It also helps teams avoid solution-first thinking, where the request gets treated as the answer instead of a starting point for investigation.

When teams work from outcomes, they explore multiple solutions, validate assumptions earlier, observe the problem in context, and measure whether customer behavior actually changed.

That’s the real difference between moving fast and moving the metrics that matter.

If you want to work in an outcome-driven way, you need to choose the right metric first. A strong outcome metric describes the user behavior you want to change and the product impact that should follow. It does not describe what your team shipped.

Strong metrics usually share a few core characteristics:

The right metric depends on the problem you’re trying to solve.

If you’re helping users experience value for the first time, focus on an activation metric. If users leave too early or fail to come back, focus on retention. If the workflow feels slow, manual, or frustrating, look at efficiency metrics. If your goal is monetization, a long-term value metric may make more sense.

The key is to match the metric to the behavior you want to change, not to the feature you plan to release.

There are a few common mistakes to avoid when selecting metrics:

A simple test can save weeks of wasted effort: if improving the metric wouldn’t improve the product or the business, it isn’t an outcome metric.

When you choose the right metric, you create a clearer point of view for your team. You sharpen prioritization and make it easier to have honest conversations about whether your product is actually creating value.

Defining the right outcome and metric is the easy part. The harder part is making sure you can measure it correctly. If you can’t observe whether a feature changed user behavior, the effort you put into selecting your outcome goes to waste.

That’s why you can’t wait until launch to think about measurement. You need to understand how you’re going to track analytics from the start.

Before you ship, ask yourself three key questions:

Once you can answer those questions clearly, you move beyond surface-level activity and start working on a measurable problem.

One common mistake is treating page views or screen loads as outcome metrics. These signals can show where users go, but they rarely tell you whether your design solved the problem. On their own, they’re weak indicators of success.

Another common mistake is measuring behavior without segmentation. You shouldn’t expect the same impact across every user group. Role, context, and workflow can all shape the outcome. If you look at the wrong group, you may conclude that a feature failed when it actually worked for the users it was meant to help.

I ran into this during a screen-replatforming effort. We reviewed usage data for filters and actions and decided to remove several features because usage looked low. After release, operations team leads reached out and asked where those features had gone. The issue wasn’t low value. The issue was incomplete segmentation.

Team leads used those capabilities to review and assign work, but our analysis grouped users by domain and ignored roles. That experience made it clear that our outcome metrics needed another layer of segmentation.
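To make the segmentation point concrete, here is a minimal Python sketch. The records, role names, and the `usage_rate_by` helper are invented for illustration and are not data from the project described above. It shows how the same usage data can look weak when grouped by domain and essential when grouped by role.

```python
from collections import defaultdict

# Hypothetical usage records: (user_id, domain, role, used_feature)
records = [
    ("u1", "ops", "agent", False),
    ("u2", "ops", "agent", False),
    ("u3", "ops", "agent", False),
    ("u4", "ops", "agent", False),
    ("u5", "ops", "team_lead", True),
    ("u6", "ops", "team_lead", True),
]


def usage_rate_by(records, key_index):
    """Return the share of users in each segment who used the feature."""
    totals = defaultdict(int)
    users = defaultdict(int)
    for record in records:
        segment = record[key_index]
        totals[segment] += 1
        if record[3]:  # used_feature flag
            users[segment] += 1
    return {segment: users[segment] / totals[segment] for segment in totals}


by_domain = usage_rate_by(records, key_index=1)  # grouped by domain
by_role = usage_rate_by(records, key_index=2)    # grouped by role

print(by_domain)
print(by_role)
```

Grouped by domain, the feature looks like something only a third of users touch. Grouped by role, every team lead in this made-up sample depends on it.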

Teams also run into trouble when they only track the top-line outcome metric and ignore friction points. You may know that the metric isn’t moving, yet still have no idea why. That usually leads to guessing.

To avoid this, look at the moments where your users struggle. Track drop-offs, delays, repeated actions, and points of confusion. These help you understand why the outcome is moving or stalling.
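One way to find those moments is to compute the drop-off between each pair of steps in a funnel. The sketch below assumes you already have a count of users who reached each step. The step names and numbers are hypothetical.

```python
# Hypothetical funnel: (step name, number of users who reached it)
funnel = [
    ("opened_onboarding", 1000),
    ("connected_account", 620),
    ("created_first_project", 590),
    ("invited_teammate", 210),
]


def step_drop_offs(funnel):
    """Return the share of users lost between each pair of consecutive steps."""
    results = []
    for (prev_name, prev_count), (name, count) in zip(funnel, funnel[1:]):
        drop = 1 - count / prev_count if prev_count else 0.0
        results.append((f"{prev_name} -> {name}", round(drop, 3)))
    return results


drop_offs = step_drop_offs(funnel)

# The transition with the largest loss is the first place to investigate.
worst_step = max(drop_offs, key=lambda item: item[1])

for transition, drop in drop_offs:
    print(f"{transition}: {drop:.1%} dropped off")
print("Investigate first:", worst_step[0])
```

The top-line metric tells you the outcome stalled. The step-level view tells you where to look.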

Many teams discover instrumentation gaps too late. Events are missing, definitions are inconsistent, and the data becomes hard to trust. When that happens, you don’t need to throw the work away, but you do need to fix the measurement system.
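A lightweight way to catch these gaps early is to compare what you planned to track against what actually arrives. The sketch below is an illustration under assumptions: the tracking plan, event names, properties, and the `find_gaps` helper are all hypothetical and not tied to any specific analytics tool.

```python
# Hypothetical tracking plan: event name -> properties every event must carry
tracking_plan = {
    "checkout_started": {"user_id", "role", "cart_value"},
    "checkout_completed": {"user_id", "role", "duration_ms"},
}

# Hypothetical sample of events captured in a test environment
captured = [
    {"name": "checkout_started", "user_id": "u1", "role": "buyer", "cart_value": 40},
    {"name": "checkout_started", "user_id": "u2", "cart_value": 15},
]


def find_gaps(tracking_plan, captured):
    """Return planned events that never fired and required properties that were absent."""
    seen = {event["name"] for event in captured}
    missing_events = sorted(set(tracking_plan) - seen)

    missing_props = {}
    for event in captured:
        required = tracking_plan.get(event["name"], set())
        absent = required - set(event)  # set(event) yields the event's keys
        if absent:
            missing_props.setdefault(event["name"], set()).update(absent)
    return missing_events, missing_props


missing_events, missing_props = find_gaps(tracking_plan, captured)
print("Events never captured:", missing_events)
print("Properties missing on captured events:", missing_props)
```

Running a check like this before launch surfaces a missing completion event or a missing `role` property while it is still cheap to fix.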

AI can help here. It can’t recover data you never captured, but it can help you spot what’s missing. You can use it to detect anomalies, surface unusual behavior patterns, and identify instrumentation gaps or inconsistencies. Instead of checking dashboards manually, have an AI flag where something changed and where you should investigate next.

Good instrumentation means data reliable enough to show how users behave and whether your product is moving in the intended direction.

Once you start measuring outcomes, the next step is to redesign how you structure your roadmap. Many teams struggle to fully adopt an outcome-driven approach because their roadmaps still focus on what to build instead of what to change.

An outcome-driven roadmap is a set of hypotheses, not a delivery plan filled with items like build feature X, improve UI Y, or refactor domain Z. If a roadmap item doesn’t explain why the work matters or what behavior should change as a result, measurement alone will not get you very far.

A better roadmap question is: what behavior do you need to change to create value?

That shift matters because one behavior can have multiple possible solutions. Your team can then evaluate those options based on effort, risk, performance, or security constraints. If your roadmap only captures the solution, you may still ship something useful, but you’ll have a harder time understanding why it worked or whether a better option existed.

Frameworks like OKRs, North Star metrics, and HEART can help you structure this thinking. They only work, though, if you already understand the outcome you’re trying to drive. Without a clear behavioral hypothesis, those frameworks can easily turn into output tracking with more formal language.

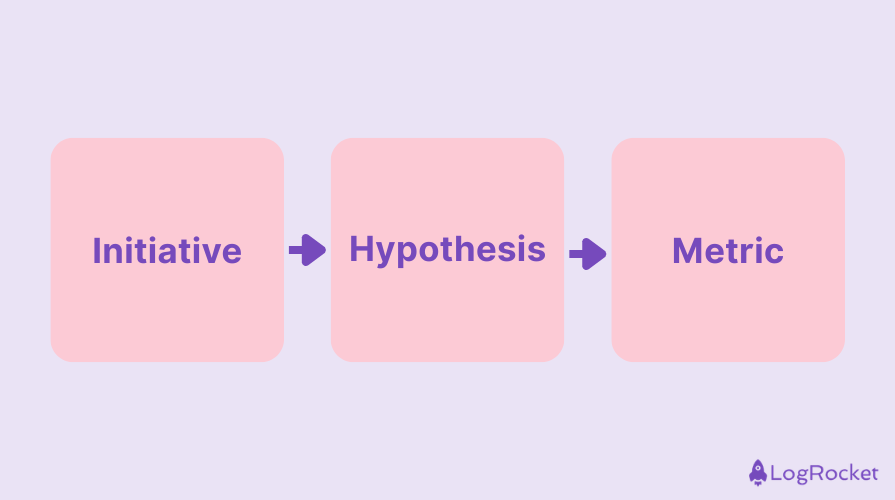

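A strong initiative connects three things: the work itself, a hypothesis about the behavior it should change, and a metric that validates the hypothesis.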

For example, you might start with an initiative like “simplify the checkout screen.” Your hypothesis could be: If the screen is easier to navigate, users will complete checkout faster. Your validating metric could then be: reduce checkout time from X to Y.

The same structure works in other contexts. If your initiative is “improve alert configuration,” your hypothesis might be: If alert setup is easier, teams will create more alerts and catch issues earlier. The validating metric could be: reduce mean time to detection from X to Y.
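If it helps to see that structure written down, here is a minimal sketch of a roadmap item as a small data structure. The `RoadmapItem` class, its field names, and the baseline and target numbers are assumptions made for illustration. The examples above leave those values as X and Y.

```python
from dataclasses import dataclass


@dataclass
class RoadmapItem:
    initiative: str
    hypothesis: str
    metric: str
    baseline: float  # where the metric stands today
    target: float    # where the hypothesis says it should land

    def is_validated(self, observed: float) -> bool:
        """True if the observed value reached the target in the intended direction."""
        if self.target < self.baseline:  # lower is better, e.g. time to complete
            return observed <= self.target
        return observed >= self.target   # higher is better, e.g. activation rate


# Hypothetical numbers, for illustration only
item = RoadmapItem(
    initiative="Simplify the checkout screen",
    hypothesis="If the screen is easier to navigate, users will complete checkout faster",
    metric="median checkout time (seconds)",
    baseline=95.0,
    target=70.0,
)

print(item.is_validated(68.0))
print(item.is_validated(80.0))
```

Writing the item this way forces the baseline and target to exist before the work starts, which is the point of treating the roadmap as a set of hypotheses.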

When you design roadmap items this way, your planning conversations improve. Instead of debating features in isolation, you start debating assumptions, user behavior, and the metrics that’ll prove whether the work succeeded. That creates a more empowered team because the conversation shifts from delivering requests to solving problems.

Over time, this also builds trust. Stakeholders ask fewer questions about when a feature will be completed and more questions about whether it worked. That’s a much healthier place for a product team to operate.

Once you start building around outcomes, the next challenge is validating whether those outcomes are actually happening and whether they matter to customers. This is where experimentation, communication, and discipline start to work together.

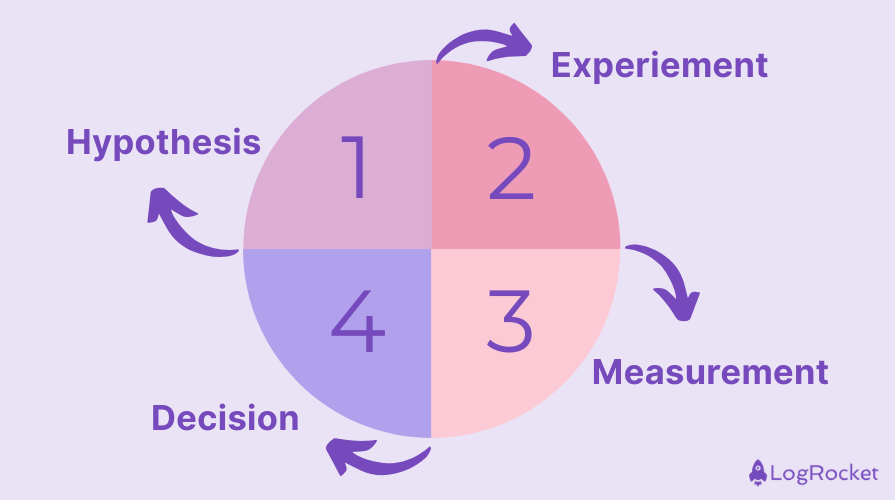

As an outcome-driven product manager, you’ll repeat the same cycle again and again:

The goal of an experiment is to reduce uncertainty. In practice, that usually means your experiments don’t need to be as complex as they sound.

Here are some simple experiment examples you can test easily:

What matters most is that the experiment connects directly to a behavioral change and a measurable metric. Many experiments fail not because the idea was wrong, but because the setup was weak. If your sample is too small, your metric is poorly chosen, or your success criteria are unclear, the result will not tell you much.
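To see why a small sample tells you so little, consider the same lift measured at two sample sizes. The sketch below uses a standard two-proportion z-test, which is one common choice rather than the only valid one. The conversion counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Same observed rates (40% vs. 50%), very different sample sizes
_, p_small = two_proportion_z_test(conv_a=8, n_a=20, conv_b=10, n_b=20)
_, p_large = two_proportion_z_test(conv_a=800, n_a=2000, conv_b=1000, n_b=2000)

print(f"20 users per group:   p = {p_small:.3f}")
print(f"2000 users per group: p = {p_large:.2e}")
```

With 20 users per group, a ten-point lift is indistinguishable from noise. With 2,000 per group, the same lift is very unlikely to be chance. Decide the sample size and the success threshold before the experiment starts, not after.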

Another common trap is expecting results too quickly, especially when behavior takes time to change. When outcomes look inconclusive, don’t rush to call the experiment a failure. Start by asking whether the hypothesis, measurement, or execution needs to change.

Negative results matter just as much as positive ones. They show you what doesn’t change behavior and help your team avoid investing further in low-impact work. Outcome-driven teams treat those results as learning, not as setbacks.

Communicating outcomes matters just as much as measuring them. In an output-focused environment, teams often report progress in terms like “we released feature X,” “we will build Y next,” or “we closed Z tickets.” Outcome-focused communication shifts that language. You report what changed, such as “activation improved by 12 percent” or “time to complete dropped by 18 percent.”

Try using this simple communication structure:

This structure keeps stakeholders aligned on why you made a decision, what you learned, and what happens next without overwhelming them with detail.

You can also use AI in a lightweight way at this stage. AI-generated summaries can turn raw metrics and experiment results into clear, consistent updates. That can save you time and help your team and stakeholders stay aligned without creating more reporting overhead.

As a product manager, your job goes beyond defining outcomes. You need to help build a culture that works this way consistently.

An outcome-driven mindset does not happen all at once. You do not need to redesign your entire process from day one. Start with one initiative, one clear outcome, and one well-instrumented metric.

Over time, that approach becomes a habit. Roadmaps get clearer. Team conversations get more honest. Decisions become easier to explain and defend. The PM role also starts to shift from feature coordinator to owner of behavior change and product impact.

To build an outcome-driven product, you need to be ready for uncertainty, learning, and accountability. That also means letting go of the comfort that delivery metrics can create.

Stop moving fast for the sake of motion. Start moving the metrics that truly matter.

Featured image source: IconScout

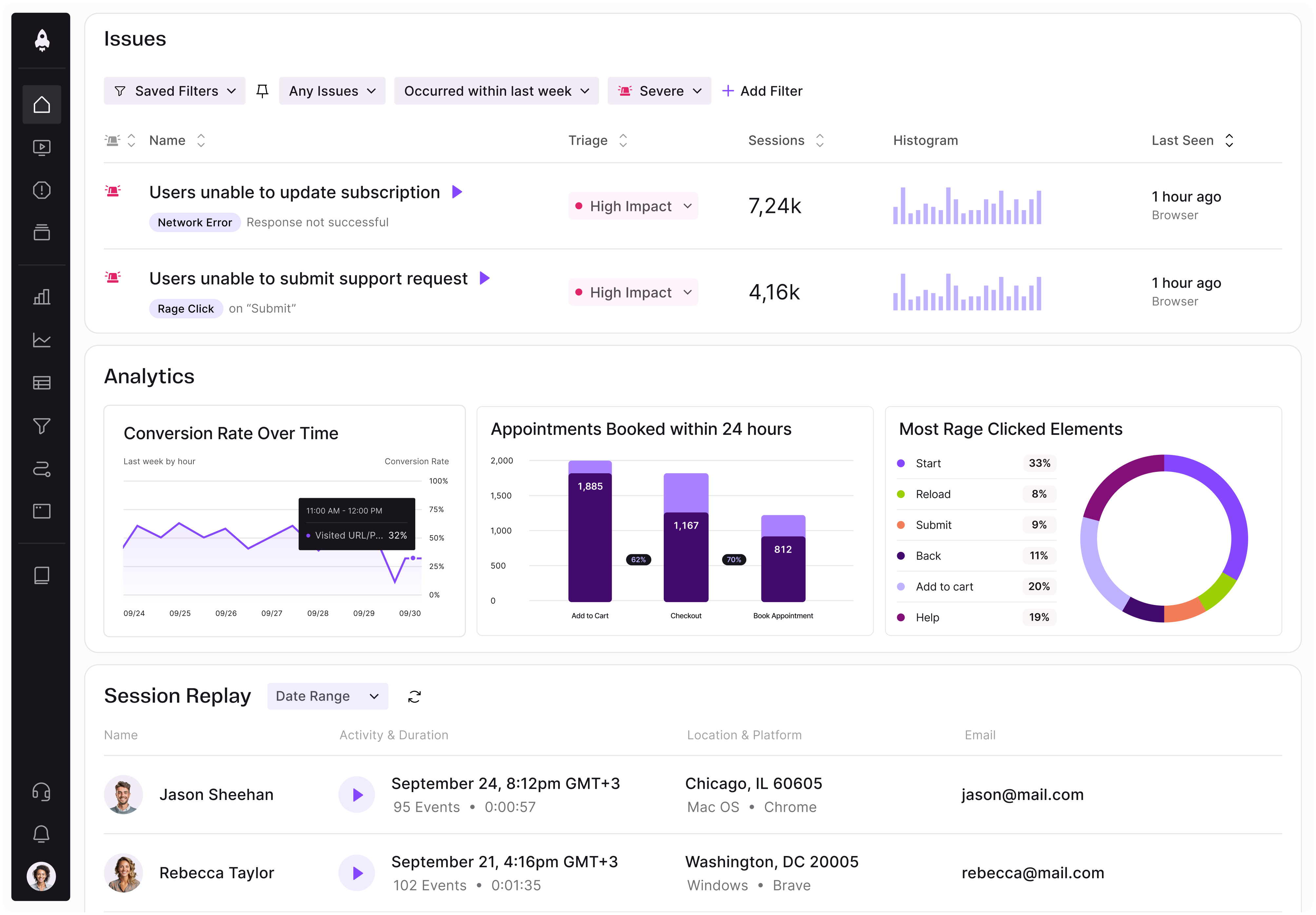

LogRocket identifies friction points in the user experience so you can make informed decisions about product and design changes that must happen to hit your goals.

With LogRocket, you can understand the scope of the issues affecting your product and prioritize the changes that need to be made. LogRocket simplifies workflows by allowing Engineering, Product, UX, and Design teams to work from the same data as you, eliminating any confusion about what needs to be done.

Get your teams on the same page — try LogRocket today.

Learn how code-style reasoning helps product managers make sharper decisions, surface edge cases, and write clearer requirements.

A practical framework for product leaders to prioritize better, reduce noise, and focus teams on what matters most.

Explore how urban planning helps product managers think in systems, strengthen foundations, and build products that scale well.

Learn how to spot PMF erosion early, diagnose the cause, and help your product recover before decline turns into panic.