Learning how to use Lambda functions written in Rust is an extremely useful skill for devs today. AWS Lambda provides an on-demand computing service without needing to deploy or maintain a long-running server, removing the need to install and upgrade an operating system or patch security vulnerabilities.

In this article, we’ll learn how to create and deploy a Lambda function written in Rust. All of the code for this project is available on my GitHub for your convenience.

One issue that has persisted with Lambda over the years has been the cold start problem.

A function cannot spin up instantly; it usually takes a couple of seconds or longer to return its first response. Different programming languages have different performance characteristics. Some languages, like Java, have much longer cold start times because of dependencies such as the JVM.

Rust, on the other hand, has extremely short cold starts and has become a language of choice for developers looking for significant performance gains by avoiding longer cold starts.

Let’s jump in.

To begin, we’ll need to install cargo with rustup and cargo-lambda. I will be using Homebrew because I’m on a Mac, but you can take a look at the previous two links for installation instructions on your own machine.

curl https://sh.rustup.rs -sSf | sh

brew tap cargo-lambda/cargo-lambda

brew install cargo-lambda

new command

First, let’s create a new Rust package with the new command. If you include the --http flag, it will automatically generate a project compatible with Lambda function URLs.

cargo lambda new --http rustrocket

cd rustrocket

This generates a basic skeleton to start writing AWS Lambda functions with Rust. Our project structure contains the following files:

.
├── Cargo.toml
└── src
    └── main.rs

main.rs under the src directory holds our Lambda code written in Rust.

We’ll take a look at that code in a moment, but let’s first take a look at the only other file, Cargo.toml.

Cargo.toml is the Rust manifest file: a configuration file written in TOML that describes your package.

# Cargo.toml

[package]
name = "rustrocket"
version = "0.1.0"
edition = "2021"

[dependencies]
lambda_http = "0.6.1"
lambda_runtime = "0.6.1"
tokio = { version = "1", features = ["macros"] }
tracing = { version = "0.1", features = ["log"] }
tracing-subscriber = { version = "0.3", default-features = false, features = ["fmt"] }

A Cargo.toml file defines a package. The name and version fields are the only required pieces of information; an extensive list of additional fields can be found in the official package documentation. The [dependencies] section lists the project’s dependencies, along with the version to install. We’ll only need to add one extra dependency (serde) for the example we’ll create in this article.

Serde is a framework for serializing and deserializing Rust data structures. The current version is 1.0.145 at the time of writing this article, but you can install the latest version with the following command:

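cargo add serde --features derive

Now our [dependencies] includes serde = { version = "1.0.145", features = ["derive"] }. The derive feature is enabled explicitly because the handler we’ll write later uses serde’s derive macros.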

watch command

Now, let’s boot up a development server with the watch subcommand to emulate interactions with the AWS Lambda control plane.

cargo lambda watch

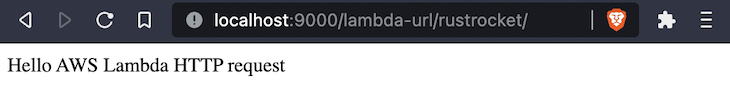

Since the emulator server includes support for Lambda function URLs out of the box, you can open localhost:9000/lambda-url/rustrocket to invoke your function and view it in your browser.
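For callers other than a browser, the same local URL can be requested from code. The following is a minimal sketch using only the Rust standard library. The function names emulator_request and invoke_local are hypothetical, and it assumes the emulator is listening on localhost:9000 with the rustrocket function, as described above.

```rust
use std::io::{Read, Write};
use std::net::TcpStream;

/// Builds a minimal HTTP/1.1 GET request for a function served by the local emulator.
/// (Hypothetical helper; the path layout follows the URL mentioned in the article.)
fn emulator_request(function_name: &str) -> String {
    format!(
        "GET /lambda-url/{function_name} HTTP/1.1\r\nHost: localhost:9000\r\nConnection: close\r\n\r\n"
    )
}

/// Sends the request to the emulator and returns the raw HTTP response text.
fn invoke_local(function_name: &str) -> std::io::Result<String> {
    let mut stream = TcpStream::connect("localhost:9000")?;
    stream.write_all(emulator_request(function_name).as_bytes())?;
    let mut response = String::new();
    stream.read_to_string(&mut response)?;
    Ok(response)
}

fn main() {
    match invoke_local("rustrocket") {
        Ok(response) => println!("{response}"),
        Err(err) => eprintln!("could not reach the emulator (is `cargo lambda watch` running?): {err}"),
    }
}
```

If the emulator is not running, the program only prints the connection error, so it is safe to try at any point.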

Open main.rs in the src directory to see the code for this function.

// src/main.rs

use lambda_http::{run, service_fn, Body, Error, Request, RequestExt, Response};

async fn function_handler(event: Request) -> Result<Response<Body>, Error> {
    let resp = Response::builder()
        .status(200)
        .header("content-type", "text/html")
        .body("Hello AWS Lambda HTTP request".into())
        .map_err(Box::new)?;
    Ok(resp)
}

#[tokio::main]
async fn main() -> Result<(), Error> {
    tracing_subscriber::fmt()
        .with_max_level(tracing::Level::INFO)
        .with_target(false)
        .without_time()
        .init();

    run(service_fn(function_handler)).await
}

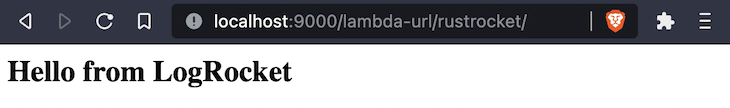

Now, we’ll make a change to the text in the body by including a new message. Since the header is already set to text/html, we can wrap our message in HTML tags.

Our terminal has also displayed a few warnings, which can be fixed with the following changes:

- Rename event to _event
- Remove RequestExt from imports

// src/main.rs

use lambda_http::{run, service_fn, Body, Error, Request, Response};

async fn function_handler(_event: Request) -> Result<Response<Body>, Error> {
    let resp = Response::builder()
        .status(200)
        .header("content-type", "text/html")
        .body("<h2>Hello from LogRocket</h2>".into())
        .map_err(Box::new)?;
    Ok(resp)
}
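Renaming event to _event works because of a Rust convention: a leading underscore marks a binding as intentionally unused, which silences the unused_variables warning. Here is a small standalone sketch of that convention; the greeting function is a hypothetical stand-in for the handler, not part of the generated project.

```rust
// Hypothetical stand-in for the handler: the argument is accepted to satisfy
// the expected signature, but the body never reads it.
fn greeting(_event: &str) -> String {
    // Without the leading underscore on `_event`, rustc would emit an
    // `unused_variables` warning for this parameter.
    String::from("<h2>Hello from LogRocket</h2>")
}

fn main() {
    println!("{}", greeting("ignored request"));
}
```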

The main() function remains unaltered. Return to your browser to see the change.

Right now, we have a single function that can be invoked to receive a response, but this Lambda is not able to take specific input from a user and our project cannot be used to deploy and invoke multiple functions.

To do this, we’ll create a new file, hello.rs, inside a new directory called bin.

mkdir src/bin

echo > src/bin/hello.rs

The bin directory gives us the ability to include multiple function handlers within a single project and invoke them individually.

We also have to add a bin section at the bottom of our Cargo.toml file containing the name of our new handler, like so:

# Cargo.toml

[[bin]]
name = "hello"

Add the following code to hello.rs. The handler transforms the event payload into a Response, which it returns to the Lambda runtime wrapped in Ok():

// src/bin/hello.rs

use lambda_runtime::{service_fn, Error, LambdaEvent};
use serde::{Deserialize, Serialize};

#[derive(Deserialize)]
struct Request {
    command: String,
}

#[derive(Serialize)]
struct Response {
    req_id: String,
    msg: String,
}

pub(crate) async fn my_handler(event: LambdaEvent<Request>) -> Result<Response, Error> {
    let command = event.payload.command;
    let resp = Response {
        req_id: event.context.request_id,
        msg: format!("{}", command),
    };
    Ok(resp)
}

#[tokio::main]
async fn main() -> Result<(), Error> {
    tracing_subscriber::fmt()
        .with_max_level(tracing::Level::INFO)
        .without_time()
        .init();

    let func = service_fn(my_handler);
    lambda_runtime::run(func).await?;
    Ok(())
}
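One possible way to make this handler easier to test is to keep the payload-to-response mapping in a plain function that needs neither the async runtime nor a Lambda context. The sketch below uses only the standard library. Its Request and Response are simplified stand-ins without the serde derives, and build_response is a hypothetical helper, not something the article's project defines.

```rust
// Simplified stand-ins for the article's Request and Response types
// (no serde derives, so the sketch compiles without extra crates).
struct Request {
    command: String,
}

#[derive(Debug, PartialEq)]
struct Response {
    req_id: String,
    msg: String,
}

// Hypothetical pure helper holding the handler's logic: it echoes the command
// back alongside the request ID, as my_handler does.
fn build_response(request_id: &str, request: Request) -> Response {
    Response {
        req_id: request_id.to_string(),
        msg: request.command,
    }
}

fn main() {
    let resp = build_response(
        "example-request-id",
        Request { command: "hello from logrocket".to_string() },
    );
    println!("{}: {}", resp.req_id, resp.msg);
}
```

With this split, the async handler would only unpack the LambdaEvent and delegate to the helper, and the helper can be covered by ordinary unit tests.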

invoke command

Send requests to the control plane emulator with the invoke subcommand.

cargo lambda invoke hello \
  --data-ascii '{"command": "hello from logrocket"}'

Your terminal will respond back with this:

{
  "req_id":"64d4b99a-1775-41d2-afc4-fbdb36c4502c",
  "msg":"hello from logrocket"
}

Up to this point, we’ve only been running our Lambda handler locally on our own machines. To get this handler on AWS, we’ll need to build and deploy the project’s artifacts.

build command

Compile your function natively with the build subcommand. This produces artifacts that can be uploaded to AWS Lambda.

cargo lambda build

AWS IAM (identity and access management) is a service for creating, applying, and managing roles and permissions on AWS resources.

Its complexity and scope of features have motivated teams such as Amplify to develop a large set of tools around IAM. This includes libraries and SDKs with new abstractions built for the express purpose of simplifying the developer experience around working with services like IAM and Cognito.

In this example, we only need to create a single role that the Lambda service is allowed to assume. We’ll put its trust policy in a file called rust-role.json.

echo > rust-role.json

We will use the AWS CLI and send a JSON definition of the following role. Include the following code in rust-role.json:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": {
        "Service": "lambda.amazonaws.com"
      },
      "Action": "sts:AssumeRole"
    }
  ]
}

To do this, first install the AWS CLI, which provides an extensive list of commands for working with IAM. The only command we’ll need is the create-role command, along with two options:

- file://rust-role.json for the --assume-role-policy-document option
- rust-role for the --role-name option

aws iam create-role \
  --role-name rust-role \
  --assume-role-policy-document file://rust-role.json

This will output the following JSON:

{
  "Role": {
    "Path": "/",
    "RoleName": "rust-role",
    "RoleId": "AROARZ5VR5ZCOYN4Z7TLJ",
    "Arn": "arn:aws:iam::124397940292:role/rust-role",
    "CreateDate": "2022-09-15T22:15:24+00:00",
    "AssumeRolePolicyDocument": {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Principal": {
            "Service": "lambda.amazonaws.com"
          },
          "Action": "sts:AssumeRole"
        }
      ]
    }
  }
}

Copy the value for Arn, which in my case is arn:aws:iam::124397940292:role/rust-role, and include it in the next command for the --iam-role flag.
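An ARN is a colon-separated identifier of the form arn:partition:service:region:account-id:resource, which is why the role ARN above has an empty region field (IAM is a global service). As an illustration only, the following standard-library sketch splits an ARN into those parts; the Arn struct and parse_arn function are hypothetical names, and the sketch does not validate the individual fields.

```rust
/// The colon-separated parts of an ARN: arn:partition:service:region:account-id:resource.
#[derive(Debug, PartialEq)]
struct Arn<'a> {
    partition: &'a str,
    service: &'a str,
    region: &'a str,
    account_id: &'a str,
    resource: &'a str,
}

/// Splits an ARN into its parts; returns None if the overall shape does not match.
fn parse_arn(arn: &str) -> Option<Arn<'_>> {
    // splitn(6, ..) keeps any further colons inside the resource part intact,
    // as in "function:hello".
    let mut parts = arn.splitn(6, ':');
    if parts.next()? != "arn" {
        return None;
    }
    Some(Arn {
        partition: parts.next()?,
        service: parts.next()?,
        region: parts.next()?,
        account_id: parts.next()?,
        resource: parts.next()?,
    })
}

fn main() {
    if let Some(role) = parse_arn("arn:aws:iam::124397940292:role/rust-role") {
        println!(
            "partition={} service={} region={:?} account={} resource={}",
            role.partition, role.service, role.region, role.account_id, role.resource
        );
    }
}
```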

deploy command

Upload your functions to AWS Lambda with the deploy subcommand. Cargo Lambda will try to create an execution role with Lambda’s default service role policy AWSLambdaBasicExecutionRole.

If these commands fail and display permissions errors, then you will need to include the AWS IAM role from the previous section by adding --iam-role FULL_ROLE_ARN.

cargo lambda deploy --enable-function-url rustrocket

cargo lambda deploy hello

If everything worked, you will get an Arn and URL for the rustrocket function and an Arn for the hello function, as shown in the output below.

🔍 function arn: arn:aws:lambda:us-east-1:124397940292:function:rustrocket

🔗 function url: https://bxzpdr7e3cvanutvreecyvlvfu0grvsk.lambda-url.us-east-1.on.aws/

Open the function URL to see your function.

For the hello handler, we’ll use the invoke command again, but this time with a --remote flag for remote functions.

cargo lambda invoke \
  --remote \
  --data-ascii '{"command": "hello from logrocket"}' \
  arn:aws:lambda:us-east-1:124397940292:function:hello
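If you want to script the deploy step, for example to add --iam-role only when the default role creation fails, the arguments can be assembled in code. This is a rough sketch using only the standard library and only the flags shown in this article. The deploy_args function is a hypothetical helper, and the program prints the command instead of running it.

```rust
use std::process::Command;

/// Collects the arguments for `cargo lambda deploy`, using only flags shown in this article.
/// (Hypothetical helper; the flag order is an arbitrary choice.)
fn deploy_args(function: &str, iam_role: Option<&str>, enable_function_url: bool) -> Vec<String> {
    let mut args = vec!["lambda".to_string(), "deploy".to_string()];
    if enable_function_url {
        args.push("--enable-function-url".to_string());
    }
    if let Some(role_arn) = iam_role {
        args.push("--iam-role".to_string());
        args.push(role_arn.to_string());
    }
    args.push(function.to_string());
    args
}

fn main() {
    let args = deploy_args("hello", Some("arn:aws:iam::124397940292:role/rust-role"), false);
    // Build the command but only print it; calling `.status()` on it would actually deploy.
    let mut command = Command::new("cargo");
    command.args(&args);
    println!("{command:?}");
}
```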

Up to this point, we’ve only used Cargo Lambda to work with our Lambda functions, but there are at least a dozen different ways to deploy functions to AWS Lambda!

These numerous methods vary widely in approach, but can be roughly categorized into one of three groups:

For instructions on using tools from the second and third category, see the section called Deploying the Binary to AWS Lambda on the aws-lambda-rust-runtime GitHub repository.

The future of Rust is bright at AWS. They are heavily investing in the team and foundation supporting the project. There are also various AWS services that are now starting to incorporate Rust, including:

To learn more about running Rust on AWS in general, check out the AWS SDK for Rust. To go deeper into using Rust on Lambda, visit the Rust Runtime repository on GitHub.

I hope this article served as a useful introduction to running Rust on Lambda. Please leave your comments below on your own experiences!
