Editor’s note: This article was last updated by Muhammed Ali on 31 July 2024 to cover advanced parsing techniques and the issue of memory management when converting CSV files.

Comma-separated values, also known as CSVs, are one of the most common and basic formats for storing and exchanging tabular datasets for statistical analysis tools and spreadsheet applications. Due to their popularity, it is not uncommon for governing bodies and other important organizations to share official datasets in CSV format.

Despite their simplicity, popularity, and widespread use, it is common for CSV files created using one application to display incorrectly in another. This is because there is no official universal CSV specification to which applications must adhere. As a result, several implementations exist with slight variations.

Most modern operating systems and programming languages, including JavaScript and the Node.js runtime environment, have applications and packages for reading, writing, and parsing CSV files.

In this article, we will learn how to manage CSV files in Node. We shall also highlight the slight variations in the different CSV implementations. Some popular packages we will look at include csv-parser, Papa Parse, and Fast-CSV. We will follow the unofficial RFC 4180 technical standard, which attempts to document the format used by most CSV implementations.

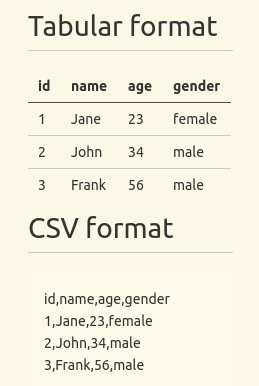

CSV files are ordinary text files comprised of data arranged in rectangular form. When you save a tabular dataset in CSV format, a new line character will separate successive rows while a comma will separate consecutive entries in a row. The image below shows a tabular dataset and its corresponding CSV format:

In the above CSV data, the first row is made up of field names, though that may not always be the case. Therefore, it is necessary to investigate your dataset before you start to read and parse it.

Although the name “comma-separated values” seems to suggest that a comma should always separate subsequent entries in each record, some applications generate CSV files that use a semicolon as a delimiter instead of a comma.

As already mentioned, there is no official universal standard to which CSV implementations should adhere, even though the unofficial RFC 4180 technical standard exists. However, this standard came into existence many years after the CSV format gained popularity.

In the previous section, we had a brief introduction to CSV files. In this section, you will learn how to read, write, and parse CSV files in Node using both built-in and third-party packages.

fs moduleThe fs module is the de facto module for working with files in Node. The code below uses the readFile function of the fs module to read from a data.csv file:

const fs = require("fs");

fs.readFile("data.csv", "utf-8", (err, data) => {

if (err) console.log(err);

else console.log(data);

});

The corresponding example below writes to a CSV file using the writeFile function of the fs module:

const fs = require("fs");

const data = `

id,name,age

1,Johny,45

2,Mary,20

`;

fs.writeFile("data.csv", data, "utf-8", (err) => {

if (err) console.log(err);

else console.log("Data saved");

});

If you are not familiar with reading and writing files in Node, check out my complete tutorial to reading and writing JSON files in Node.

If you use fs.readFile, fs.writeFile, or its synchronous counterpart like in the above examples, Node will read the entire file into memory before processing it. With the createReadStream and createWriteStream functions of the fs module, you can use streams instead to reduce memory footprint and data processing time.

The example below uses the createReadStream function to read from a data.csv file:

const fs = require("fs");

fs.createReadStream("data.csv", { encoding: "utf-8" })

.on("data", (chunk) => {

console.log(chunk);

})

.on("error", (error) => {

console.log(error);

});

Additionally, most of the third-party packages we will look at in the following subsections also use streams.

This is a relatively tiny third-party package you can install from the npm package registry. It is capable of parsing and converting CSV files to JSON.

The code below illustrates how to read data from a CSV file and convert it to JSON using csv-parser. We are creating a readable stream using the createReadStream method of the fs module and piping it to the return value of csv-parser():

const fs = require("fs");

const csvParser = require("csv-parser");

const result = [];

fs.createReadStream("./data.csv")

.pipe(csvParser())

.on("data", (data) => {

result.push(data);

})

.on("end", () => {

console.log(result);

});

There is an optional configuration object that you can pass to csv-parser. By default, csv-parser treats the first row of your dataset as field names(headers).

If your dataset doesn’t have headers, or successive data points are not comma-delimited, you can pass the information using the optional configuration object. The object has additional configuration keys you can read about in the documentation.

In the example above, we read the CSV data from a file. You can also fetch the data from a server using an HTTP client like Axios or Needle. The code below illustrates how to do so:

const csvParser = require("csv-parser");

const needle = require("needle");

const result = [];

const url = "https://people.sc.fsu.edu/~jburkardt/data/csv/deniro.csv";

needle

.get(url)

.pipe(csvParser())

.on("data", (data) => {

result.push(data);

})

.on("done", (err) => {

if (err) console.log("An error has occurred");

else console.log(result);

});

You need to first install Needle before executing the code above. The get request method returns a stream that you can pipe to csv-parser(). You can also use another package if Needle isn’t for you.

The above examples highlight just a tiny fraction of what csv-parser can do. As already mentioned, the implementation of one CSV document may be different from another. csv-parser has built-in functionalities for handling some of these differences.

Though csv-parser was created to work with Node, you can use it in the browser with tools such as Browserify.

Papa Parse is another package for parsing CSV files in Node. Unlike csv-parser, which works out of the box with Node, Papa Parse was created for the browser. Therefore, it has limited functionalities if you intend to use it in Node.

We illustrate how to use Papa Parse to parse CSV files in the example below. As before, we have to use the createReadStream method of the fs module to create a read stream, which we then pipe to the return value of papaparse.parse().

The papaparse.parse function you use for parsing takes an optional second argument. In the example below, we pass the second argument with the header property. If the value of the header property is true, Papa Parse will treat the first row in our CSV file as column(field) names.

The object has other fields that you can look up in the documentation. Unfortunately, some properties are still limited to the browser and not yet available in Node:

const fs = require("fs");

const Papa = require("papaparse");

const results = [];

const options = { header: true };

fs.createReadStream("data.csv")

.pipe(Papa.parse(Papa.NODE_STREAM_INPUT, options))

.on("data", (data) => {

results.push(data);

})

.on("end", () => {

console.log(results);

});

Similarly, you can also fetch the CSV dataset as readable streams from a remote server using an HTTP client like Axios or Needle and pipe it to the return value of papa-parse.parse() like before.

In the example below, I illustrate how to use Needle to fetch data from a server. It is worth noting that making a network request with one of the HTTP methods like needle.get returns a readable stream:

const needle = require("needle");

const Papa = require("papaparse");

const results = [];

const options = { header: true };

const csvDatasetUrl = "https://people.sc.fsu.edu/~jburkardt/data/csv/deniro.csv";

needle

.get(csvDatasetUrl)

.pipe(Papa.parse(Papa.NODE_STREAM_INPUT, options))

.on("data", (data) => {

results.push(data);

})

.on("end", () => {

console.log(results);

});

Using the Fast-CSV package

Fast-CSV is a flexible third-party package for parsing and formatting CSV datasets that combines @fast-csv/format and @fast-csv/parse packages into a single package. You can use @fast-csv/format and @fast-csv/parse for formatting and parsing CSV datasets, respectively.

The example below illustrates how to read a CSV file and parse it to JSON using Fast-CSV:

const fs = require("fs");

const fastCsv = require("fast-csv");

const options = {

objectMode: true,

delimiter: ";",

quote: null,

headers: true,

renameHeaders: false,

};

const data = [];

fs.createReadStream("data.csv")

.pipe(fastCsv.parse(options))

.on("error", (error) => {

console.log(error);

})

.on("data", (row) => {

data.push(row);

})

.on("end", (rowCount) => {

console.log(rowCount);

console.log(data);

});

Above, we are passing the optional argument to the fast-csv.parse function. The options object is primarily for handling the variations between CSV files. If you don’t pass it, csv-parser will use the default values. For this illustration, I am using the default values for most options.

In most CSV datasets, the first row contains the column headers. By default, Fast-CSV considers the first row to be a data record. You need to set the headers option to true, like in the above example, if the first row in your dataset contains the column headers.

Similarly, as we mentioned in the opening section, some CSV files may not be comma-delimited. You can change the default delimiter using the delimiter option as we did in the example above.

Instead of piping the readable stream as we did in the previous example, we can also pass it as an argument to the parseStream function as shown here:

const fs = require("fs");

const fastCsv = require("fast-csv");

const options = {

objectMode: true,

delimiter: ",",

quote: null,

headers: true,

renameHeaders: false,

};

const data = [];

const readableStream = fs.createReadStream("data.csv");

fastCsv

.parseStream(readableStream, options)

.on("error", (error) => {

console.log(error);

})

.on("data", (row) => {

data.push(row);

})

.on("end", (rowCount) => {

console.log(rowCount);

console.log(data);

});

The functions above are the primary functions you can use for parsing CSV files with Fast-CSV. You can also use the parseFile and parseString functions, but we won’t cover them here. For more about them, I recommend the official documentation.

When working with more complex CSV files, such as those with nested quotes or multiple delimiters, it is especially important to configure your CSV parser correctly.

For example, when dealing with fields that contain commas or other delimiters as part of the data, enclosing these fields in quotes is common. However, if your CSV file has inconsistent quoting or nested quotes, parsing can become tricky. Here’s how you can handle these scenarios:

const options = {

headers: true,

quote: '"',

escape: '"',

delimiter: ',',

};

fs.createReadStream("complex_data.csv")

.pipe(csvParser(options))

.on("data", (data) => {

console.log(data);

})

.on("error", (error) => {

console.log(error);

});

In the example above, the quote and escape options are set to handle nested quotes. Adjust these settings based on the specific structure of your CSV file.

CSV files may not always include headers. If you encounter a headerless CSV, you can dynamically assign headers based on the data or handle it differently depending on your needs.

Here’s how to dynamically add headers:

const options = {

headers: ["Column1", "Column2", "Column3"],

skipLines: 0, // In case you need to skip rows

};

fs.createReadStream("headerless_data.csv")

.pipe(csvParser(options))

.on("data", (data) => {

console.log(data);

})

.on("error", (error) => {

console.log(error);

});

In the above example, we manually specify headers for the CSV file, which will be applied during parsing.

A common issue that occurs when dealing with large CSV files is that converting CSV to a string can cause memory issues, leading to memory leaks or crashes. This typically happens because reading the entire file into memory at once can exhaust available memory, especially with very large datasets.

To avoid this, you should stream the CSV file line by line, processing each chunk individually. This method not only helps manage memory more efficiently but also speeds up the data processing time.

Here’s how to handle large files with streaming:

const results = [];

fs.createReadStream("large_data.csv")

.pipe(csvParser())

.on("data", (data) => {

results.push(data);

})

.on("end", () => {

console.log("CSV file successfully processed");

})

.on("error", (error) => {

console.log(error);

});

Best practices for memory management include:

The comma-separated values format is one of the most popular formats for data exchange. CSV datasets consist of simple text files readable to both humans and machines. But despite its popularity, there is no universal standard.

The unofficial RFC 4180 technical standard attempts to standardize the format, but some subtle differences exist among the different CSV implementations. These differences exist because the CSV format started before the RFC 4180 technical standard came into existence. Therefore, it is common for CSV datasets generated by one application to display incorrectly in another application.

You can use the built-in functionalities or third-party packages for reading, writing, and parsing simple CSV datasets in Node. Most of the CSV packages we looked at are flexible enough to handle the subtle differences resulting from the different CSV implementations.

Monitor failed and slow network requests in production

Monitor failed and slow network requests in productionDeploying a Node-based web app or website is the easy part. Making sure your Node instance continues to serve resources to your app is where things get tougher. If you’re interested in ensuring requests to the backend or third-party services are successful, try LogRocket.

LogRocket lets you replay user sessions, eliminating guesswork around why bugs happen by showing exactly what users experienced. It captures console logs, errors, network requests, and pixel-perfect DOM recordings — compatible with all frameworks.

LogRocket's Galileo AI watches sessions for you, instantly identifying and explaining user struggles with automated monitoring of your entire product experience.

LogRocket instruments your app to record baseline performance timings such as page load time, time to first byte, slow network requests, and also logs Redux, NgRx, and Vuex actions/state. Start monitoring for free.

Hey there, want to help make our blog better?

Join LogRocket’s Content Advisory Board. You’ll help inform the type of content we create and get access to exclusive meetups, social accreditation, and swag.

Sign up now

This guide walks you through creating a web UI for an AI agent that browses, clicks, and extracts info from websites powered by Stagehand and Gemini.

This guide explores how to use Anthropic’s Claude 4 models, including Opus 4 and Sonnet 4, to build AI-powered applications.

Which AI frontend dev tool reigns supreme in July 2025? Check out our power rankings and use our interactive comparison tool to find out.

Learn how OpenAPI can automate API client generation to save time, reduce bugs, and streamline how your frontend app talks to backend APIs.

2 Replies to "A complete guide to CSV files in Node.js"

“`

.on(“done”, (err) => {

“`

I think you need to change this line to “end” event

Hi Hughie,

Joseph here. Thanks for pointing this out.

The “done” event is emitted by needle not csv-parser. This is what the needle documentation says.

“The done” event is emitted when the request/response process has finished, either because all data was consumed or an error occurred somewhere in between”. There is a similar example in the docs. https://github.com/tomas/needle#event-done-previously-end

However, using it the way I did is confusing given that the article is about “Using csv-parser to read and parse CSV files”. Someone might opt for a different HTTP client instead of needle. It is good to stick to the “end” event as you suggested to avoid confusion.

Thanks again. Let me know if there is anything else I might have missed.