In today’s world, working with data has become one of the core ingredients of any user-facing application. While it may seem insignificant at the start, handling it well is not so trivial when you’re working on the scale of thousands of daily active users.

Kafka helps you build durable, fault-tolerant, and scalable data pipelines. Moreover, it has been adopted by applications like Twitter, LinkedIn, and Netflix.

In this article, we will understand what Kafka pub-sub is, how it helps, and how to start using it in your Node.js API.

Pub-sub is a way to decouple the two ends of a connection and communicate asynchronously. This setup consists of publishers (pub) and subscribers (sub), where publishers broadcast events, instead of targeting a particular subscriber in a synchronous, or blocking, fashion. The subscribers then consume events from the publishers.

With this mechanism, a subscriber is no longer tied to a given producer and can consume and process events at its own pace. It promotes scalability because a single broadcast event can be consumed by multiple subscribers. It is a big leap forward from a mere HTTP request.

In contrast, the HTTP request-response cycle leaves the requester waiting for the response and effectively blocks it from doing anything else. This is a big reason for moving to an event-based architecture in today’s microservice-based applications.
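To make the decoupling concrete, here is a minimal in-process sketch using Node's built-in EventEmitter. It is only an analogy: EventEmitter delivers synchronously and stores nothing, so it shows how one broadcast reaches several subscribers, not Kafka's asynchrony or durability. The channel name and event shape are made up for illustration.

```javascript
const { EventEmitter } = require('node:events');

// A tiny in-process "broker": publishers emit on a channel name,
// subscribers register listeners for that channel.
const bus = new EventEmitter();

const received = [];

// Two independent subscribers for the same channel.
bus.on('order_created', (event) => received.push(`email:${event.orderId}`));
bus.on('order_created', (event) => received.push(`analytics:${event.orderId}`));

// The publisher broadcasts once and does not know who is listening.
bus.emit('order_created', { orderId: 42 });

console.log(received); // [ 'email:42', 'analytics:42' ]
```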

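Kafka offers three main capabilities: publishing and subscribing to streams of events, storing those events durably for as long as you want, and processing them as they occur or retrospectively.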

Kafka ensures that any published event can be consumed by multiple consumers, and that those events won’t be deleted or removed from the storage once consumed. It allows you to consume the same event multiple times, so data durability is top-notch.

Now that we know what Kafka provides, let’s look at how it works.

A fresh Kafka cluster, whether self-hosted or managed, consists mostly of brokers. A Kafka broker is a computer or cloud instance running the Kafka broker process. It manages a subset of partitions and handles incoming requests to write new events to those partitions or read from them. Each partition has one leader replica; writes go to the broker hosting that leader, and the other brokers holding copies of the partition follow through replication. If a leader broker dies, Kafka elects a new leader for each affected partition from its followers.

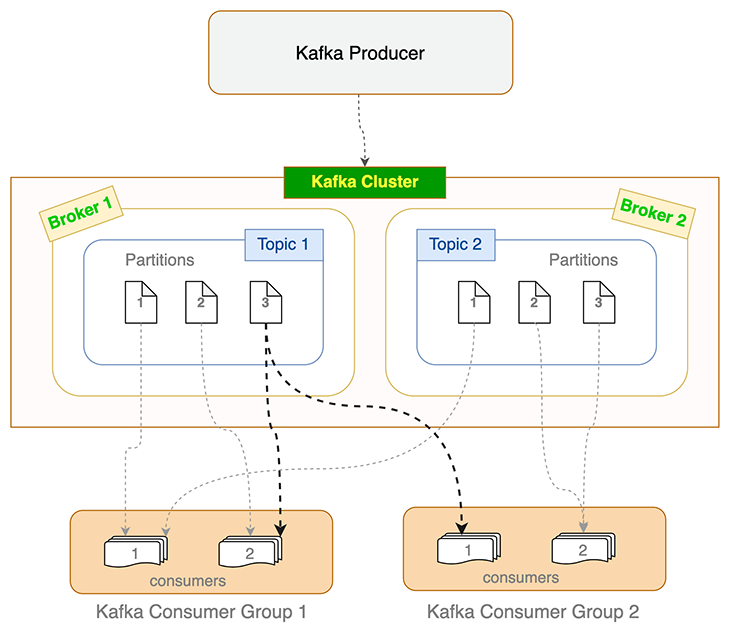

Producers and consumers talk to Kafka brokers to write events to and read events from Kafka topics. Topics are further divided into partitions. Within a partition, every event gets an offset, an incrementing number that marks its position. To keep track of how far a consumer group has processed (and committed), Kafka stores the group’s committed offset for each partition.
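The relationship between a partition, its offsets, and committed positions can be sketched with a plain array. This is a simplified model, not how Kafka is implemented: the PartitionLog class and the group names are hypothetical, and everything lives in memory.

```javascript
// A partition modeled as an append-only array; the index is the offset.
class PartitionLog {
  constructor() {
    this.events = [];
    this.committed = new Map(); // groupId -> next offset to read
  }

  // Appending returns the offset assigned to the new event.
  append(value) {
    this.events.push(value);
    return this.events.length - 1;
  }

  // Reading removes nothing; it returns events from the group's
  // committed position onward.
  poll(groupId, max = 10) {
    const start = this.committed.get(groupId) ?? 0;
    return this.events
      .slice(start, start + max)
      .map((value, i) => ({ offset: start + i, value }));
  }

  // Committing stores the offset of the next event the group should read.
  commit(groupId, lastProcessedOffset) {
    this.committed.set(groupId, lastProcessedOffset + 1);
  }
}

const log = new PartitionLog();
['a', 'b', 'c'].forEach((value) => log.append(value));

const batch = log.poll('billing', 2); // offsets 0 and 1
log.commit('billing', batch[batch.length - 1].offset);

console.log(log.poll('billing')); // [ { offset: 2, value: 'c' } ]
console.log(log.poll('analytics').length); // 3
```

The second group still sees all three events, which mirrors the point that consuming an event does not delete it.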

When a consumer dies or becomes unresponsive (i.e., it fails to send heartbeats to the broker within the configured sessionTimeout, in milliseconds), Kafka reassigns its orphaned partitions to the remaining consumers. Similar load balancing happens when a new consumer joins the consumer group. This process of reassigning and reevaluating load is called rebalancing. After a rebalance, consumers resume from the last committed offset of each partition, so events that were processed but not yet committed may be delivered again.

A topic’s partition can only be consumed by a single consumer in a consumer group. But multiple consumers from different consumer groups can each consume from the same partition. This is depicted in the flowchart above for Partition 3 of Topic 1.
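The rule that each partition has exactly one owner per consumer group, and what a rebalance does to that ownership, can be illustrated with a round-robin sketch. Kafka's real assignors are more involved; the assignPartitions function and the consumer names here are hypothetical.

```javascript
// Round-robin assignment: each partition goes to exactly one consumer in the group.
function assignPartitions(partitions, consumers) {
  const assignment = new Map(consumers.map((consumer) => [consumer, []]));
  if (consumers.length === 0) return assignment;

  partitions.forEach((partition, i) => {
    const owner = consumers[i % consumers.length];
    assignment.get(owner).push(partition);
  });
  return assignment;
}

const partitions = [0, 1, 2, 3];

// Three consumers in one group.
const before = assignPartitions(partitions, ['c1', 'c2', 'c3']);
// c1 -> [0, 3], c2 -> [1], c3 -> [2]

// c3 stops sending heartbeats; a rebalance spreads the partitions over the rest.
const after = assignPartitions(partitions, ['c1', 'c2']);
// c1 -> [0, 2], c2 -> [1, 3]

console.log(Object.fromEntries(before), Object.fromEntries(after));
```

A second consumer group would get its own independent assignment over the same partitions, which is why consumers from different groups can read the same partition.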

We will use a barebones Express.js API for this tutorial. You can find the starter code here. We will use it as a base and add Kafka support to the API. We can do more with the data, but the API is intentionally kept simple so that we can focus on Kafka.

If you want to follow along, run the API locally on your machine. Here are the steps:

git clone git@github.com:Rishabh570/kafka-nodejs-starter.git

git checkout starter

npm install

npm start

Now that the API is running locally on your machine, let’s install Kafka.

Before we start producing and consuming events, we need to add a Kafka client to our API. We will use KafkaJS, a Kafka client library for Node.js.

Run this command to install it:

npm install kafkajs

Next, install the Kafka CLI tool. It helps with administrative tasks and experimenting with Kafka. Simply head over to kafka.apache.org and download Kafka.

We are now officially ready to dive into the interesting stuff. Let’s create a Kafka topic to start producing events.

In Kafka, you need a topic to send events. A Kafka topic can be understood as a folder; likewise, the events in that topic are the files in that folder. Events sent on channelA will stay isolated from the events in channelB. Kafka topics allow isolation between multiple channels.

Let’s create a topic using the Kafka CLI we downloaded in the previous step:

bin/kafka-topics.sh --create --topic demoTopic --bootstrap-server localhost:9092

We used the kafka-topics.sh script to create the Kafka topic. When running the above command, ensure you’re in the folder where you downloaded the Kafka CLI tool.

We have created a topic named demoTopic. You can name it anything; I’d recommend following a naming convention when creating topics. For an ecommerce application, the nomenclature for Kafka topics to notify users who wishlisted an item can be like this:

macbook14_wishlisted_us_east_1_app

macbook14_wishlisted_us_east_2_app

macbook14_wishlisted_us_east_1_web

macbook14_wishlisted_us_east_2_web

As you might have noticed, it leverages item and user properties to assign topic names. Not only does this offload a major responsibility from your shoulders, it immediately tells you what kind of events the topic holds. Of course, you can make the names even more specific and granular based on your specific project.
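If you adopt a convention like this, generating the names in code helps keep them consistent. Here is a minimal sketch; buildTopicName and its field names are hypothetical, and the item_event_region_platform order is simply read off the examples above.

```javascript
// Hypothetical helper: joins the parts into <item>_<event>_<region>_<platform>.
function buildTopicName({ item, event, region, platform }) {
  return [item, event, region, platform]
    // Lowercase each part and replace anything outside a-z / 0-9 with "_".
    .map((part) => String(part).toLowerCase().replace(/[^a-z0-9]+/g, '_'))
    .join('_');
}

console.log(
  buildTopicName({ item: 'macbook14', event: 'wishlisted', region: 'us-east-1', platform: 'app' })
);
// macbook14_wishlisted_us_east_1_app
```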

To send events, we need one more thing: brokers.

As we learned earlier, brokers are responsible for writing events to topics and reading events from them. We’ll make sure the connection to them is set up before our code runs.

This is what our index.js file looks like:

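    const { Kafka } = require('kafkajs');

    // Create the client; brokers lists the broker addresses to connect to
    // (use 'localhost:9092' for a broker running locally)
    const kafka = new Kafka({
      clientId: 'kafka-nodejs-starter',
      brokers: ['kafka1:9092'],
    });

Before the API uses the routes to produce or consume events, it creates a Kafka client and tells it which broker to connect to through the brokers list. That’s it.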
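Broker addresses usually differ between your machine and a deployed environment, so one option is to read them from the environment instead of hard-coding them. This is a minimal sketch: the buildKafkaConfig helper and the KAFKA_BROKERS variable name are assumptions, not something the starter repository defines.

```javascript
// Hypothetical helper: builds the options object passed to `new Kafka(...)`.
// KAFKA_BROKERS is an assumed variable holding a comma-separated list.
function buildKafkaConfig(env = process.env) {
  const brokers = (env.KAFKA_BROKERS || 'localhost:9092')
    .split(',')
    .map((address) => address.trim())
    .filter(Boolean);

  return { clientId: 'kafka-nodejs-starter', brokers };
}

console.log(buildKafkaConfig({ KAFKA_BROKERS: 'kafka1:9092, kafka2:9092' }));
// { clientId: 'kafka-nodejs-starter', brokers: [ 'kafka1:9092', 'kafka2:9092' ] }
```

The result can then be handed to the client as `new Kafka(buildKafkaConfig())`.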

Let’s move on to actually producing events now.

We have successfully set up the Kafka client in our Node.js application. It’s time to write our first Kafka producer and learn how it works.

    // Run this inside an async function, such as a route handler
    const producer = kafka.producer();

    // Connect the producer to the broker
    await producer.connect();

    // Send an event to the demoTopic topic
    await producer.send({
      topic: 'demoTopic',
      messages: [
        { value: 'Hello micro-services world!' },
      ],
    });

    // Disconnect the producer once we're done
    await producer.disconnect();

We address three steps with the above code: connecting the producer to the broker, sending an event to the demoTopic topic, and disconnecting the producer once we’re done.

This is a straightforward example. The client library also provides a few configuration settings that you might want to tweak as per your needs.
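As one illustration of such tweaks, the sketch below wraps producer.send in a small helper that sets a message key along with the acks and timeout options that KafkaJS accepts on send. The sendOrderEvent name and the order shape are hypothetical, and the values shown are examples rather than recommendations.

```javascript
// Hypothetical helper: `producer` is expected to be a connected KafkaJS producer.
async function sendOrderEvent(producer, order) {
  return producer.send({
    topic: 'demoTopic',
    acks: -1,       // wait for all in-sync replicas to acknowledge
    timeout: 30000, // ms to wait for the broker's response
    messages: [
      {
        key: String(order.id),        // same key -> same partition
        value: JSON.stringify(order), // serialize the payload as a string
      },
    ],
  });
}
```

Because the helper receives the producer as an argument, it can be exercised with a stub object in unit tests and with `kafka.producer()` in the API.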

We have written our Kafka producer and it is ready to send events on the demoTopic topic. Let’s build a Kafka consumer that will listen to the same topic and log it into the console.

    const consumer = kafka.consumer({ groupId: 'test-group' });

    await consumer.connect();

    // Read demoTopic from its first event rather than only new ones
    await consumer.subscribe({ topic: 'demoTopic', fromBeginning: true });

    await consumer.run({
      // Called once for every event received
      eachMessage: async ({ topic, partition, message }) => {
        console.log({
          value: message.value.toString(),
        });
      },
    });

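Here’s what is happening in the above code snippet: we create a consumer in the test-group consumer group, connect it, subscribe to the demoTopic topic from the beginning, and log the value of every event we receive.

The Kafka consumer provides many options and a lot of flexibility in terms of allowing you to determine how you want to consume data. Here are some settings you should know: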

Committing in Kafka means recording the consumer group’s offset after processing, so that Kafka knows where the group should resume. As we know, the offset is just a number that keeps track of the messages/events processed.

Committing often makes sure that we don’t waste resources processing the same events again if a rebalance happens. But it also increases the network traffic and slows down the processing.
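One way to balance this trade-off is to commit manually every N messages. The sketch below only decides when to commit and builds the payload shape that KafkaJS's consumer.commitOffsets expects; the CommitTracker class is hypothetical and, for brevity, assumes a single partition.

```javascript
// Hypothetical helper: counts processed messages and returns a commit
// payload every `every` messages (single partition assumed).
class CommitTracker {
  constructor(every) {
    this.every = every;
    this.pending = 0;
  }

  // Call after a message has been processed. Returns a commit payload when
  // it is time to commit, otherwise null.
  record(topic, partition, message) {
    this.pending += 1;
    if (this.pending < this.every) return null;
    this.pending = 0;
    // The committed offset is the NEXT offset to read, hence the + 1.
    const next = (BigInt(message.offset) + 1n).toString();
    return [{ topic, partition, offset: next }];
  }
}

const tracker = new CommitTracker(2);
const first = tracker.record('demoTopic', 0, { offset: '10' });
const second = tracker.record('demoTopic', 0, { offset: '11' });

console.log(first);  // null
console.log(second); // [ { topic: 'demoTopic', partition: 0, offset: '12' } ]
```

With autoCommit set to false in consumer.run, the eachMessage handler could call tracker.record after processing and pass any non-null result to consumer.commitOffsets.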

The maxBytes setting tells Kafka the maximum number of bytes to accumulate in the response. It can be used to limit the size of the batches fetched from Kafka and to avoid overwhelming your application.

Kafka consumes from the latest offset by default. If you want to consume from the beginning of the topic, use the fromBeginning flag.

await consumer.subscribe({ topics: ['demoTopic'], fromBeginning: true })

You can choose to consume events from a particular offset as well. Simply pass the offset number you want to start consuming from.

    // Call seek after consumer.run(); partition 0 is then consumed from offset 12384
    consumer.seek({ topic: 'example', partition: 0, offset: "12384" })

The client also provides a neat pause and resume functionality on the consumer. This allows you to build your own custom throttling mechanism.
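A throttle built on pause and resume might look like the sketch below. The createThrottle helper and its in-flight counter are hypothetical; the only KafkaJS calls assumed are consumer.pause and consumer.resume, each taking an array of topic descriptors.

```javascript
// Hypothetical helper: pauses a topic while too many messages are in flight
// and resumes it once the backlog drains.
function createThrottle(consumer, topic, maxInFlight) {
  let inFlight = 0;
  let paused = false;

  return {
    // Call when a message is handed off for processing.
    started() {
      inFlight += 1;
      if (!paused && inFlight >= maxInFlight) {
        consumer.pause([{ topic }]);
        paused = true;
      }
    },
    // Call when processing of a message completes.
    finished() {
      inFlight -= 1;
      if (paused && inFlight < maxInFlight) {
        consumer.resume([{ topic }]);
        paused = false;
      }
    },
  };
}
```

This only makes sense when the message handler hands work to something that runs in the background, such as an internal queue; if each message is fully processed before the handler returns, there is never more than one message in flight per partition.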

There are a lot of other options to explore and you can configure them according to your needs. You can find all the consumer settings here.

HTTP has its valid use cases, but it is easy to overdo it. There are scenarios where HTTP is not suitable. For example, you can publish events to a Kafka topic named order_invoices, and a consumer can then send invoices to the customers who requested them during their purchase.

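This is a better approach compared to sending the events over HTTP because the sender is not blocked waiting for a response, the events stay in Kafka and can be replayed, and several consumers can process the same event independently.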

If you have idempotency requirements, I’d recommend having an idempotency layer in your services as well. With an idempotency layer, you can replay all Kafka events, as well as the events between two given timestamps.

But how do you pick out only specific events within a given period of time? If you take a closer look, Kafka does not (and should not) solve this problem.

This is where the application-level idempotency layer comes into play. If you replay all of your events between specific timestamps, the idempotency mechanism in your services makes sure to only cater to the first-seen events.

A simple idempotency solution can be to pick a unique and constant parameter from the request (say, order ID in our invoices example) and create a Redis key using that. So, something like the below should work for starters:

notification:invoices:<order_id>

This way, even if you replay events, your application won’t process the same order again for sending out the notification for the invoice. Moreover, if you want this Redis key to be relevant for only a day, you can set the TTL accordingly.
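The idea can be sketched without a Redis server by swapping in an in-memory store that mimics a "set if absent, with expiry" operation. The SeenStore class and handleInvoiceEvent function are hypothetical stand-ins; in a real service the claim step would be a single atomic Redis command such as SET with the NX and EX options.

```javascript
// In-memory stand-in for Redis: remembers a key until its TTL expires and
// reports whether the key was claimed for the first time.
class SeenStore {
  constructor(now = () => Date.now()) {
    this.now = now;
    this.expiresAt = new Map();
  }

  // Returns true only the first time a key is claimed within its TTL.
  claim(key, ttlMs) {
    const expiry = this.expiresAt.get(key);
    if (expiry !== undefined && expiry > this.now()) return false;
    this.expiresAt.set(key, this.now() + ttlMs);
    return true;
  }
}

const ONE_DAY_MS = 24 * 60 * 60 * 1000;
const store = new SeenStore();
const sentInvoices = [];

// Hypothetical handler for events from the order_invoices topic.
function handleInvoiceEvent(event) {
  const key = `notification:invoices:${event.orderId}`;
  if (!store.claim(key, ONE_DAY_MS)) return; // replayed event: skip it
  sentInvoices.push(event.orderId);
}

[{ orderId: 'A1' }, { orderId: 'B2' }, { orderId: 'A1' }].forEach(handleInvoiceEvent);
console.log(sentInvoices); // [ 'A1', 'B2' ]
```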

Kafka pub-sub can be used in a variety of places:

Twitter uses Kafka as its primary pub-sub pipeline. LinkedIn uses it to process more than 7 trillion messages per day, and Netflix uses Kafka event streams for all point-to-point and Netflix Studio-wide communications because of its high durability, linear scalability, and fault tolerance.

It wouldn’t be wrong to say that Kafka is one of the most important cornerstones for scalable services, satisfying millions of requests.

We have looked at what Kafka pub-sub is, how it works, and one of the ways you can leverage it in your Node.js application.

Now, you have all the required tools and knowledge to embark on your Kafka journey. You can get the complete code from the kafka-nodejs-starter repository, in case you weren’t able to follow along. Just clone the repository and follow the steps shown in this guide. You will understand Kafka better if you work through it hands-on.

For any queries and feedback, feel free to reach out in the comments.
