When I got introduced to programming, years ago, I was really excited about the endless opportunities I could embrace.

As I developed sites back then, I recall they didn’t do as much as the web “applications” I build today.

These days, the web does more, with features that exceed previous expectations.

And it doesn’t even end there.

Most web applications are now used on mobile devices with slow, unreliable connections, and they are accessed from almost every country in the world, from Nigeria to India.

As someone who lives in Nigeria, a third world country, and builds products for her people, making sure the apps I build are fast isn’t a luxury. It isn’t some technical fantasy. It’s a reality I live with every single day.

Right now, I no longer think only about building web pages; I concern myself with how to make them fast as well.

Do you?

What a burden, huh?

You see, the story I shared isn’t just mine to tell. Most web developers will tell you the same.

Most people start off their career with building applications that just work, then later they begin to get concerned with how to make them fast.

This sort of concern birthed the field of web performance.

Web performance is such a big deal. In fact, the performance of your site can be the difference between remaining in business or losing money.

So, how do you build web applications that remain consistently fast?

There’s an answer for that!

In this article, I’ll offer tips you can use right away to make any website faster. Regardless of the technology upon which it is built, there are universal principles for making any website fast.

Disclaimer: You do not have to apply every technique I discuss here. Whichever you choose to apply will definitely improve your site’s speed — that’s a given. Also, web performance is a broad field — I couldn’t possibly explain every technique out there. But, I believe I’ve done a good job of distilling them.

If you’re ready to dig in, so am I!

All the techniques in this article are explained in plain, easy to read language. However, to appeal to engineers of various skill levels, I have broken the techniques down into three distinct sections — beginner, intermediate, and advanced techniques.

You’ll find the corresponding headers below.

If you’re new to web performance or have had a difficult time really understanding how web performance works, you should definitely start from the first group of techniques.

For intermediate and advanced developers, I’ve got some interesting considerations for you. Feel free to skim through techniques you’re familiar with.

It’s not a problem to be new to the art of improving your site’s performance. In fact, everyone began as a beginner!

Regardless of your skill level, there are simple tips you can try out right away, with a significant effect on your site’s loading speed.

First, let me explain how you should think of web performance.

To make your web apps faster you have to understand the constant “conversation” that occurs every single time a user visits your website.

Whenever a user visits your website, they request certain assets from your server e.g. the HTML, CSS and JS files for your web application.

You’re like a chef with a lot of hungry people to be served.

As a chef, you must be concerned with how much food you’re serving to each person. If you serve too much, their plate will get full and overflow.

You also have to be careful how you’re serving the food. If you do it incorrectly, you’ll spill food all over the place.

Finally, you have to be concerned with what food you’re serving. Is the food well spiced? Too much salt?

To be anything near successful at building a performant web application, you have to learn to be a good chef.

You must concern yourself with how many assets you’re sending to the user, how you’re sending these assets, and how well these assets have been “cooked”.

If that sounded vague, don’t worry. It’s really simple. Let’s start by learning techniques to reduce how much you’re sending down to the user.

Most applications are bloated because there’s so much “useless” code in there. These are more appropriately termed unnecessary resources. For example, you might not need all of jQuery just because you want to query the DOM. Remove jQuery, and use native browser APIs such as document.querySelector.

Another good example: if you don’t really need Bootstrap, then don’t have it in there. CSS itself is a render-blocking resource, and the Bootstrap modules will have you downloading lots of CSS that you may end up not using. Embrace Flexbox and CSS Grid for layouts. Use good old CSS where you can too.

The questions to ask yourself are:

(i) Does the resource really provide so much value? e.g. I don’t use Bootstrap all the time, even though I wrote an exhaustive guide on the subject.

(ii) Can I use the same resource but strip it down to the exact modules I’m consuming? For example, instead of importing the entire Lodash package, you could import a subset.

(iii) Can I replace the resource altogether? e.g. Just remove jQuery and use the native browser APIs for querying the DOM.

The questions may go on and on, but the premise remains the same. Make a list of your web app’s resources, determine whether they provide enough value, and be painfully honest about how they affect the performance of your site.
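As a rough sketch of questions (ii) and (iii), the snippet below shows a per-method Lodash import (commented out, because it assumes Lodash is installed) next to plain JavaScript stand-ins for two common helpers. The names `uniq` and `pick` are only illustrative, and these versions cover the everyday cases rather than every edge case a library handles.

```javascript
// (ii) Import only the method you use rather than the whole package.
// This assumes lodash is installed, so it is left commented out here:
// const debounce = require('lodash/debounce');

// (iii) Or replace the helper with what the language already provides.
// In the browser, document.querySelector('.menu') similarly stands in
// for $('.menu')[0].

// Remove duplicates from an array.
function uniq(items) {
  return [...new Set(items)];
}

// Copy only the listed keys from an object.
function pick(source, keys) {
  return Object.fromEntries(
    keys.filter((key) => key in source).map((key) => [key, source[key]])
  );
}

console.log(uniq([1, 2, 2, 3, 3]));
console.log(pick({ id: 7, name: 'Ada', token: 'secret' }, ['id', 'name']));
```

Whether a hand-written helper is worth it depends on how many of the library’s features you actually rely on, so treat this as a starting point for the audit rather than a rule.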

Even after eliminating unnecessary resources in your application, there’ll be certain resources you can’t do without. A good example is some of the text content of your app, namely, HTML, CSS and JS.

Eliminating all HTML, CSS and JS on your site will make your website nonexistent. That’s not the route to go through. There’s still something we can do though.

Consider the simple HTML document shown below:

    <!DOCTYPE html>
    <html lang="en">
      <head>
        <!-- comment: this is some important meta stuff here -->
        <meta charset="UTF-8">
        <meta name="viewport" content="width=device-width, initial-scale=1.0">
        <meta http-equiv="X-UA-Compatible" content="ie=edge">
        <title>Document</title>
      </head>
      <body>
        <!-- Note to self 3 weeks from now: Here's the body -->
      </body>
    </html>

This document contains comments, valid HTML characters, and proper indentation and spacing for readability.

Compare the document above with the minified version below:

    <!DOCTYPE html><html lang="en"><head> <meta charset="UTF-8"> <meta name="viewport" content="width=device-width, initial-scale=1.0"> <meta http-equiv="X-UA-Compatible" content="ie=edge"> <title>Document</title></head><body> </body></html>

As you may have noticed, the minified version of the document has comments and spacing stripped out. It doesn’t look readable to you, but the computer can read and understand it.

In simple terms, minification means removing whitespace and unnecessary characters from text without changing what it does.

As a rule of thumb, your production apps should always have their HTML, CSS and JS files minified. All of them.

In this contrived example, the minified HTML document was 263 Bytes, and the unminified version, 367 Bytes. That’s a 28% reduction in file size!

You can imagine the effect of this across a large project with many different files — HTML, CSS and JS.
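To make the idea concrete, here is a deliberately naive minifier written for Node. It is only a sketch: the function name `minifyHtml` is made up for this example, and it ignores cases that real minifiers handle, such as `<pre>` blocks, inline scripts and text where spaces between tags matter.

```javascript
// Naive HTML minifier (sketch only; not safe for <pre>, <textarea> or inline scripts).
function minifyHtml(html) {
  return html
    .replace(/<!--[\s\S]*?-->/g, '') // drop comments
    .replace(/\s+/g, ' ')            // collapse runs of whitespace into one space
    .replace(/>\s+</g, '><')         // drop whitespace between adjacent tags
    .trim();
}

const source = `<!DOCTYPE html>
<html lang="en">
  <head>
    <!-- comment: this is some important meta stuff here -->
    <title>Document</title>
  </head>
  <body>
    <!-- Note to self 3 weeks from now: Here's the body -->
  </body>
</html>`;

const minified = minifyHtml(source);

console.log(minified);
console.log(`before: ${Buffer.byteLength(source)} bytes`);
console.log(`after:  ${Buffer.byteLength(minified)} bytes`);
```

The exact byte counts depend on how the source is indented, so they will not match the figures quoted above, but the direction of the change is the same.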

For this example, I used a simple web based minifier. However, that is a tedious process for a sufficiently large app. So, could this process be automated?

Yes, definitely.

If you’ve ever built an app with React or Vue (or any other modern frontend framework), your setup tool, such as create-react-app or the vue-cli, likely has a build step built in.

These tools will automatically handle your file minification for you. If you’re setting up a new project from scratch, you should look into using a modern build tool such as Webpack or Parcel.

Every character in your HTML, CSS or JS has to be downloaded from the server to the browser, and this isn’t a trivial task. By minifying your HTML, CSS and JS you’ve reduced the overhead cost of downloading these resources.

That’s what a good chef would do.

You’ve done a great job by minifying the text content of your web app (HTML, CSS and JS).

After minifying your resources, let’s assume you go on to deploy the app to some server.

When a user visits your app, they request these resources from the server, the server responds, and a download to the browser begins.

What if, somewhere in this process, the server could compress the resources further before sending them to the client?

This is where Gzip comes in.

Weird name, huh?

The first time I heard the word Gzip, it seemed like everyone knew what it meant except me.

In very simple terms, Gzip refers to a data compression program originally written for the GNU project. Just as you can minify assets via an online program or a modern bundler such as Webpack or Rollup, you can compress them with Gzip.

What’s worth noting is that even a minified file can be further compressed via Gzip. It’s important to remember that.

If you want to see for yourself, copy, paste and save the following to a file named small.html:

    <!DOCTYPE html><html lang="en"><head> <meta charset="UTF-8"> <meta name="viewport" content="width=device-width, initial-scale=1.0"> <meta http-equiv="X-UA-Compatible" content="ie=edge"> <title>Document</title></head><body> </body></html>

This is the minified HTML document we’ve been working with.

Open up your terminal, change working directory into where the small.html document resides, then run the following:

    gzip small.html

The gzip program is installed by default if you’re on a Mac.

If you did that correctly, you’ll now have a compressed file named small.html.gz. Note that gzip replaces the original small.html with the compressed file.

If you’re curious how much data we saved by further compressing via Gzip, run the command:

    ls -lh small.html.gz

This will show the details of the file, including its size.

This file is now sized 201 Bytes!

Let me put that in perspective. We’ve gone from 367 Bytes to 263 Bytes and now 201 Bytes.

That’s another 24% reduction from an already minified file. In fact, if you take into consideration the original size of the file (before minification), we’ve arrived at over 45% reduction in size.

For larger files, gzip can achieve up to 70% compression!
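You can run the same experiment from Node with the built-in `zlib` module. The sketch below compresses a made-up, highly repetitive HTML fragment, so it will shrink far more than a typical page would; the point is only to show how to compare the sizes and that decompression restores the original text.

```javascript
const zlib = require('zlib');

// A repetitive HTML fragment, standing in for a real page.
const row = '<li class="item"><a href="/post">Read more</a></li>\n';
const html = `<ul>\n${row.repeat(200)}</ul>`;

const original = Buffer.from(html, 'utf8');
const compressed = zlib.gzipSync(original);

const saved = 100 * (1 - compressed.length / original.length);
console.log(`original:  ${original.length} bytes`);
console.log(`gzipped:   ${compressed.length} bytes`);
console.log(`reduction: ${saved.toFixed(1)}%`);

// Decompressing gives back exactly what went in.
const restored = zlib.gunzipSync(compressed).toString('utf8');
console.log(`round trip intact: ${restored === html}`);
```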

The next logical question is, how do I set up a gzip compression on the server?

If you work as a frontend engineer, upon deploying your frontend application, you could go ahead and set up a simple node/express server that serves your client files.

For an Express app, enabling gzip compression takes just two extra lines of code:

    const express = require('express');
    const compression = require('compression'); // gzip middleware

    const app = express();

    app.use(compression()); // compress responses for clients that accept it

For what it’s worth, the compression module is a package from the same team behind Express.

Regardless of your server setup, a simple Google search for “how to compress via gzip on a XXX server” will lead you in the right direction.

It is worth mentioning that not all resources are worth being gzipped. You’ll get the best results with text content such as HTML, CSS and JS documents.

If the user is on a modern browser, the browser will automatically decompress the gzipped file after downloading it. So, you don’t have to worry about that. I found this Stack Overflow answer enlightening if you want to check that out.
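If you are curious what a server does under the hood, the sketch below uses only Node’s built-in `http` and `zlib` modules. The helper name `encodeBody` and the list of compressible types are assumptions made for this example, and the `Accept-Encoding` check is simplified (it ignores quality values such as `gzip;q=0`), so prefer a maintained middleware in production.

```javascript
const http = require('http');
const zlib = require('zlib');

// Only text formats are worth gzipping; JPEG, PNG and video are already compressed.
const COMPRESSIBLE = /^(text\/|application\/(javascript|json|xml))/;

// Gzip the body only when the client accepts it and the type benefits from it.
function encodeBody(body, contentType, acceptEncoding = '') {
  const clientAcceptsGzip = /\bgzip\b/.test(acceptEncoding);
  if (!clientAcceptsGzip || !COMPRESSIBLE.test(contentType)) {
    return { headers: { 'Content-Type': contentType }, payload: Buffer.from(body) };
  }
  return {
    headers: {
      'Content-Type': contentType,
      'Content-Encoding': 'gzip', // tells the browser to decompress the payload
      Vary: 'Accept-Encoding',    // stops caches from mixing up the two variants
    },
    payload: zlib.gzipSync(body),
  };
}

const server = http.createServer((req, res) => {
  const page = '<!DOCTYPE html><title>Hello</title><p>Hello, world</p>';
  const { headers, payload } = encodeBody(page, 'text/html', req.headers['accept-encoding']);
  res.writeHead(200, headers);
  res.end(payload);
});

// server.listen(3000); // uncomment to try it locally; the port is arbitrary
```

The `Content-Encoding: gzip` response header is what lets the browser know it has to decompress the payload before using it.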

Remember, it is great to have your text contents minified and further compressed on the server via Gzip. Got that?

The problem with images is that they take up a lot of visual space. If an image is broken, or doesn’t load quickly, it’s usually pretty obvious. Not to mention the fact that images also account for most downloaded bytes on a web page. It’s almost a crime not to pay attention to image optimisations.

So, what can you do to optimise your images?

If the effect you seek can be achieved by using a CSS effect such as gradients and shadows, you should definitely consider NOT using an image for such effect. You can get the same thing done in CSS for a fraction of the bytes required by an image file.

This one can be tricky, but you’ll get used to making quicker decisions with time. If you need an illustration, geometric shapes and the like, you should, by all means, choose an SVG. For everything else, a raster graphic is your best bet.

Raster graphics themselves come in many flavours: GIF, PNG, JPEG, WebP.

Choose PNG if you need the transparency it offers; otherwise, a JPEG of the same image tends to be smaller than its PNG counterpart. Well, you know when you need GIFs, but there’s a catch, as you’ll see below.

I’ve built sites where it was a lot easier to just put in a GIF for animated content and small screencasts. The problem, despite the convenience, is that GIFs are mostly larger than their video counterparts. Sometimes a lot larger!

This is NOT an iron-clad rule that’s always true.

For example, below’s a screencast I once made:

The GIF is 2.2 MB in size. However, exporting the screencast to a video yields a file of the same size, 2.2 MB!

Depending on the quality, frame rate and length of the GIF, you do have to test things out for yourself.

For converting GIF to video, you can use an online converter or go geeky with a CLI solution.

Remember that images take up a lot of internet bandwidth — largely because of their file size. Image compression could take another 15 minutes to really explain and I doubt you’ll wait long enough for that.

The image guide from Addy Osmani is a great resource. However, If you don’t want to think too hard about it, you can use an online tool such as TinyPNG for compressing your raster images. For SVGs, consider using SVGO from the command line, or the web interface, SVGOMG from Jake Archibald.

These tools will retain the quality of your images but reduce their sizes considerably!

If you serve the same super large image for both computers and smaller devices, that’s a performance leak right there! An easier to understand example is loading a big image from the server for a thumbnail. That makes little or no sense at all.

In most cases, you can avoid this by using the HTML image srcset and sizes attributes.

First, let me show you the problem we’re trying to solve. The default usage of the img element is this:

    <img src="cute-kitten-800w.jpg" alt="A pretty cute kitten"/>

The img tag is provided with src and alt attributes. The src points to a single image 800px wide. As a result, both mobile devices and larger screens download this same large image. You’ll agree that’s not the most performant solution.

However, consider the following:

    <img srcset="cute-kitten-320w.jpg 320w,
                 cute-kitten-480w.jpg 480w,
                 cute-kitten-800w.jpg 800w"
         sizes="(max-width: 320px) 280px,
                (max-width: 480px) 440px,
                800px"
         src="cute-kitten-800w.jpg" alt="A pretty cute kitten" />

Can you make sense of that?

Well, srcset is like a bucket of image options made available to the browser. Its value is a comma-separated list in which each entry names an image file and its intrinsic width (for example, 320w means the file is 320px wide).

sizes tells the browser how wide the image will be displayed under each media condition, which lets it decide which image in the bucket suits the device.

For example, the first declaration in the sizes value reads: if the viewport is 320px wide or less, the image will fill a 280px-wide slot, so check the bucket of options and use the image closest to that width.

This is pretty easy to reason about. Usually, an image with smaller dimensions also has a smaller file size. This means less bandwidth for users on smaller screens. Everyone wins.
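If it helps to see the selection as code, the sketch below models it in plain JavaScript using the kitten example. It is a simplified mental model, not the browser’s actual algorithm: browsers are free to choose differently (for instance, when a larger file is already cached). The function name `pickImage` is made up for illustration.

```javascript
// Candidates from srcset: file name plus intrinsic width in pixels.
const candidates = [
  { url: 'cute-kitten-320w.jpg', width: 320 },
  { url: 'cute-kitten-480w.jpg', width: 480 },
  { url: 'cute-kitten-800w.jpg', width: 800 },
];

// The sizes rules from the example: viewport limit and the slot the image fills.
const sizes = [
  { maxViewport: 320, slot: 280 },
  { maxViewport: 480, slot: 440 },
  { maxViewport: Infinity, slot: 800 }, // the default when nothing else matches
];

function pickImage(viewportWidth, devicePixelRatio = 1) {
  // The first matching media condition wins, as in the sizes attribute.
  const { slot } = sizes.find((rule) => viewportWidth <= rule.maxViewport);
  const needed = slot * devicePixelRatio;
  // Take the smallest candidate that still covers the slot, else the largest.
  const sorted = [...candidates].sort((a, b) => a.width - b.width);
  const match = sorted.find((candidate) => candidate.width >= needed);
  return (match || sorted[sorted.length - 1]).url;
}

console.log(pickImage(320));     // small phone, standard density
console.log(pickImage(320, 2));  // same phone with a high-density screen
console.log(pickImage(1200));    // desktop
```

Note how the high-density phone ends up needing the largest file: the slot is 280px wide, but the screen has twice as many physical pixels to fill.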

If as a beginner you apply these principles, you’ll definitely have a much faster web application than before. Web performance is a moving target. Don’t let your knowledge of the topic stop here. If you’re interested in more techniques, see the intermediate techniques I share below.

As an intermediate developer, I reckon you have experience in some of the basic web performance techniques. So, what can you do to take your knowledge to the next level?

When you get past being a newbie at making faster web applications, your mindset towards web performance changes.

Here are a few things to consider:

It is mostly agreed that users spend the majority of their time waiting for sites to respond to their input, NOT waiting for the sites to load, but that’s not even all there is to it.

My argument is this: users will wait for your site to load, but they won’t be as patient if your site feels slow to operate, i.e. after the initial load.

That’s arguable, isn’t it?

I’ve browsed the internet on horrible internet connections. I’ve seen sites take tens of seconds to load. It’s no longer that much of a big deal. I’ll wait. However, when your site loads, I expect it to “work fast”. That’s the deal breaker for me.

Don’t get me wrong. It is super important for your web apps to load fast; however, my argument still holds. Psychologically, a user will be more patient with you as your web page loads than they’d be when actually using your website. Users expect your site to work smoothly and interactively.

Somewhere in your dev mind, begin to think of not only making your web apps load fast but work and feel fast.

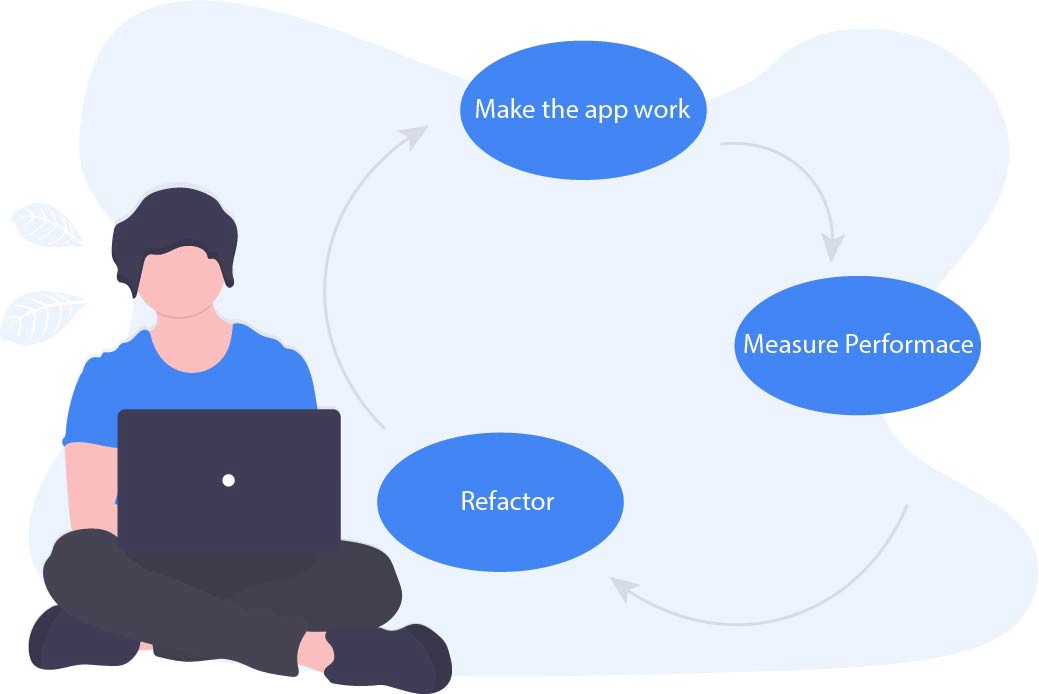

In test-driven performance, unlike TDD, you don’t “write tests”. The process is explained graphically in the accompanying diagram.

When building web apps, I usually apply “generic” performance techniques to whatever project I’m working on. But because all web applications are inherently different, I also measure actual user performance to find the specific bottlenecks.

So, how best do you measure performance?

When you first get introduced to measuring performance, your best bet is to use a tool like the Chrome DevTools. This is fine until you choose to take even more diverse and accurate measurements from real-world users.

Below’s how I see this.

A beginner will mostly measure performance as they develop their applications. They’ll do this with the aid of the browser development tools available e.g. the Chrome DevTools.

An intermediate developer understands that measuring performance solely on their computer isn’t a complete representation of the performance actual users perceive, so they take things a notch higher by taking real user measurements.

It’s a little tricky to get this right, but the concept is simple.

You write a measuring script that ships with your application. As the user loads your web page, the script begins to read certain measurement metrics and sends them over to an analytics service e.g. Google Analytics (GA). It doesn’t have to be GA — but GA is a pretty popular option for this purpose.
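Such a measuring script could look roughly like the sketch below. It reads the browser’s navigation timing entry and posts a few headline numbers with `navigator.sendBeacon`. The `/analytics` endpoint and the function name `summariseNavigation` are hypothetical; point the beacon at whatever your analytics service expects.

```javascript
// Turn a navigation timing entry into a few headline numbers (milliseconds).
function summariseNavigation(entry) {
  return {
    timeToFirstByte: Math.round(entry.responseStart - entry.startTime),
    domContentLoaded: Math.round(entry.domContentLoadedEventEnd - entry.startTime),
    pageLoad: Math.round(entry.loadEventEnd - entry.startTime),
  };
}

// Browser-only part: read the real entry and send it once the page has loaded.
if (typeof window !== 'undefined') {
  window.addEventListener('load', () => {
    // loadEventEnd is only filled in after the load handlers finish, hence the timeout.
    setTimeout(() => {
      const [entry] = performance.getEntriesByType('navigation');
      if (!entry) return;
      const body = JSON.stringify(summariseNavigation(entry));
      // '/analytics' is a hypothetical endpoint on your own server.
      navigator.sendBeacon('/analytics', body);
    }, 0);
  });
}

// Example with a made-up entry, so the function can be tried outside a browser:
console.log(
  summariseNavigation({
    startTime: 0,
    responseStart: 180,
    domContentLoadedEventEnd: 950,
    loadEventEnd: 1600,
  })
);
```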

For a practical example of how to measure performance metrics from real users, check out this Google CodeLab.

On your analytics server, you’ll be presented with a distributed performance measurement across multiple users from different regions, under varying network conditions and with different computer processing power. This is what makes real user measurement very powerful.

Your app’s load time is a culmination of the load times from various users under different conditions. Always remember that.
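One way to summarise load times collected from many users is with percentiles rather than a single average, since a handful of very slow visits can hide behind a healthy-looking mean. The sketch below uses the nearest-rank method on made-up numbers; the sample data and the function name are illustrative only.

```javascript
// Nearest-rank percentile over a list of load times (milliseconds).
function percentile(values, p) {
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p * sorted.length) / 100);
  return sorted[Math.max(rank, 1) - 1];
}

// Made-up sample: most visits are quick, a few on slow networks are not.
const loadTimes = [900, 1100, 1200, 1300, 1500, 1800, 2400, 4000, 9000, 15000];

console.log('median:', percentile(loadTimes, 50)); // 1500
console.log('p90:   ', percentile(loadTimes, 90)); // 9000
```

Here half of the visits finish within 1.5 seconds, yet one in ten takes 9 seconds or more, which is the kind of gap that measuring only on your own machine would never show.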

Users are humans, and humans tend to have some common behaviours. Interestingly, knowledge of these behaviours helps you build apps that resonate a little more with humans.

Enough of the “human” talk. Here’s an example of what I mean.

Have you ever wondered why many lifts have mirrors? Think carefully about this one.

What comes to mind is that they allow people to travel over 30 floors without feeling like they’d waited so long!

The same may be done on the web. When you aim for perceived speed, you can make your site “appear” to load faster!

While doing this, be sure to remember that actual speed still matters.

A few tips to try include:

Like how Medium lazy loads images, the crux of lazy loading images (as an example) is that a placeholder image is first displayed on the page. As this image loads, it is shown in different stages, from blurred to sharp. A similar concept may be used on text content, not just images.
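A minimal sketch of the deferred-loading half of this idea is shown below. It assumes each image starts with a small placeholder in `src` and keeps the real file in a `data-src` attribute; it does not attempt the blurred-to-sharp transition. The observer class is passed in as a parameter purely so the function can be tried outside a browser; in a real page you would pass `IntersectionObserver`.

```javascript
// Swap each placeholder for the real image once it scrolls into view.
function lazyLoadImages(images, Observer) {
  const observer = new Observer((entries) => {
    for (const entry of entries) {
      if (!entry.isIntersecting) continue;
      const img = entry.target;
      img.src = img.dataset.src; // replace the placeholder with the real file
      observer.unobserve(img);   // each image only needs to load once
    }
  });
  images.forEach((img) => observer.observe(img));
  return observer;
}

// Browser-only: wire it up for every image that declares a data-src.
if (typeof document !== 'undefined') {
  lazyLoadImages(document.querySelectorAll('img[data-src]'), IntersectionObserver);
}
```

For the simple case, modern browsers also support the `loading="lazy"` attribute on `img` elements, which needs no script at all.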

A common technique is to display the most important part of the page to the user as soon as possible. Once they navigate to a page, show them something — preferably something useful. The user may not notice the rest of the page for a few more seconds if you do this well.

What’s typically done is to show the topmost visible content on the page, i.e. content in the initial viewport of the user’s device. This is better described as the above-the-fold content.

The content below the fold will not have been loaded at this time. However, you’d have provided important information to the user quickly. This leads to the next tip.

If you’ll be showing the above-the-fold content to the user first, then you’ll have to prioritise what goes in there.

What’s typically done is to inline the above-the-fold content in your HTML document. This way there’s no need for an extra server round trip. If you use a static site generator like Gatsby to develop static websites, then you’re in luck, as it helps automate this process. If you choose to do this yourself, you need to consider optimising the above-the-fold content (text or graphical), e.g. via minification, and also choose a tool for automating the process.
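As a small illustration of the inlining step, the sketch below places a block of critical CSS directly in the document head at build time. Deciding which rules count as critical is the hard part and is assumed to have been done already; the function name and sample markup are made up for this example.

```javascript
// Insert critical CSS as a <style> block just before </head>, so the
// above-the-fold content can render without waiting for a stylesheet request.
function inlineCriticalCss(html, criticalCss) {
  if (!html.includes('</head>')) {
    throw new Error('Expected the document to contain a </head> tag');
  }
  const styleTag = `<style>${criticalCss}</style>`;
  // A replacer function avoids special handling of "$" sequences in the CSS.
  return html.replace('</head>', () => `${styleTag}</head>`);
}

const page =
  '<!DOCTYPE html><html><head><title>Home</title></head>' +
  '<body><h1 class="hero">Hi</h1></body></html>';
const css = '.hero{font-size:2rem;margin:0}';

console.log(inlineCriticalCss(page, css));
```

The rest of the stylesheet can then be loaded normally, since the styles needed for the first screen are already in the HTML.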

You’ve read the documentation, you’ve got years of experience under your belt, and you’re pretty confident you can make any website fast.

Kudos!

As an advanced developer, most performance techniques do not elude you. You know how they work, and why they are important.

Even at this level, I’ve got some interesting considerations for you.

Consider how we all approach loading performance. A user visits your web application on a very slow network. Instead of letting them painfully receive all bytes of resources at once, you display the most important parts of the page first.

The techniques to achieve this include prioritising the above-the-fold content on the page and making the first meaningful paint count.

This is great and works — for now, but not without a flaw.

The problem here is that we’ve assumed (for the most part) that the most important part of the page to show to the user (while their pitiable internet sucks, or while they’re on a machine with a low-end CPU) is the above-the-fold content.

That’s an assumption, but how true is it?

This may be true most times, but assumptions themselves are inherently flawed.

Let me walk you through an example of how I use Medium.

First, I visit Medium every single day. It’s right there in the league of my most visited sites.

Every single time I visit Medium, I indeed visit the homepage at www.medium.com.

So, here’s what happens when you visit on a slow connection.

They indeed take laudable performance measures to make sure the load time doesn’t linger on forever.

In case you didn’t take note, here’s the actual order in which they progressively render the content on the homepage.

As expected, the content above-the-fold is prioritised. The initial sets of articles are the Medium membership previews, then my notification count comes up, then new articles from my network are rendered and, lastly, articles picked by the editors.

So, what’s the harm in this seemingly perfect progressive render?

The major question is, how was the order for rendering these items determined? At best it’s an assumption based on studying “most” users. It’s not a personal solution; it’s just another generic one.

If any thought had been put into studying actual user behaviour, then some of these would have been obvious over time:

I get a lot of notifications. There’s no way I’ll sit through reading hundreds, and sometimes thousands, of notifications. I trust the important notifications to make it to my email and I only respond to those. The only time I click the notifications badge is to get rid of whatever figure is there, so it starts counting from zero again.

Even though this is placed above the fold, it’s really not important to me.

This is the first content I get shown (on a super slow network) and yet I almost never read them.

Why, you ask?

I write and read a lot of Medium articles. When Medium began allowing authors to be paid on the platform, I tried signing up but I couldn’t. It had something to do with my country not being accepted into the program. So, I gave that up.

At the time, I assumed if they wouldn’t let me get paid as an author, why would they let me pay them to be a premium reader?

That’s the reason I’m not a premium Medium user. If they fix (or have fixed) the issue, I’ll look into subscribing. For this reason, I can’t read past 3 member-only articles a month (unless I open them up in incognito browser mode).

Over time, I’ve just trained my eyes and mind to read the catchy titles at the top of the homepage and just completely ignore them.

Above-the-fold content yet not the most useful to me.

The content rendered almost last appears to be the content I seek almost every time I hit the homepage. I want to know what’s new from my network. I skim through and read at least one interesting article.

Essentially, the content that means something to me happens to be the last to show up. The implication is that the first meaningful paint, other than signalling visual feedback, isn’t that useful to me.

This behaviour is even worse on a mobile phone. The first content that fills the entire screen while loading is not important to me, and I have to scroll down to find the new articles from my network — the actual resource that means something to me.

Every problem has a solution. This is only an idea I have conceived, and for which I’m working on a technical implementation. The crux of the matter is somewhat simple. There’s a need to take the application of Machine Learning further than just personalising user stories, feeds and suggestions. We can make web performance better as well.

What I’d have preferred as a user is to make the first meaningful paint actually count by making it personalised. Personalised to show what’s most important to me. Yes, me. Not some generic result.

If you’re like me and question new subject matters a lot, here are answers to a few concerns you may have about this proposed approach.

Our current solution (such as Medium’s) to optimising above-the-fold content is great. It does work.

A user just has to wait a few seconds, but in the meantime they get a visual cue that content is being loaded. That’s kinda good enough, but is it great? Is it the best we can do as a community?

If a personalised approach to rendering the first meaningful paint and optimising above-the-fold content were taken, would this be too much technical responsibility for so little gain?

Maybe not. So is it worth it?

Yes, from a user’s perspective. Particularly if you serve a global user base with users in every part of the world NOT just areas where people boast of having blazing fast internet.

You’ll end up delivering performance with personalisation: the feeling that this “product” knows me well.

This also opens doors to even better performance techniques such as accurate preloading and prefetching of resources before the user has initiated a request. Based on the user’s repeated usage, you can now make a nearly accurate decision using machine learning.
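To give a feel for the idea, here is a toy sketch that uses a plain frequency count as a stand-in for a learned model. It is not the implementation hinted at above, and it is not machine learning; it only shows the shape of the decision: record what a user actually opens, rank the sections, then render or prefetch the top one first. The section names and the prefetch URL are hypothetical.

```javascript
// Count how often the user opens each section of the homepage.
function recordVisit(counts, section) {
  return { ...counts, [section]: (counts[section] || 0) + 1 };
}

// Order sections by past usage, most used first.
function rankSections(counts) {
  return Object.entries(counts)
    .sort(([, a], [, b]) => b - a)
    .map(([section]) => section);
}

// Hypothetical history for one user; in a browser this could live in localStorage.
let counts = {};
for (const section of ['network', 'network', 'editors-picks', 'network', 'notifications']) {
  counts = recordVisit(counts, section);
}

const [mostUsed] = rankSections(counts);
console.log(mostUsed); // network

// Browser-only: hint that this section's data is likely to be needed next.
if (typeof document !== 'undefined') {
  const link = document.createElement('link');
  link.rel = 'prefetch';
  link.href = `/api/sections/${mostUsed}`; // hypothetical URL
  document.head.appendChild(link);
}
```

A real system would need to weigh recency, device and network conditions as well, which is where a proper model could earn its keep over a simple counter.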

I do think as a community we’re doing great on web performance. I think there’s room for improvement too. I also think we need to think this way to get real progressive results.

What would web performance be like in the next 5 years, 10 years? Stale or better?

Regardless of your skill level, go and develop fast web applications.
