The InterPlanetary File System (IPFS) is a distributed system protocol and peer-to-peer network for storing, sharing, and accessing files, websites, applications, and data.

IPFS uses content addressing to uniquely identify each file in a decentralized global namespace that connects every computing device in the network. For example, your friend’s computing device can serve the results of your search, and your computing device can serve the results of a search made by another device nearby.
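To make content addressing concrete, here is a minimal sketch in Python. It assumes a bare SHA-256 hex digest as the identifier, which is a simplification: real IPFS content identifiers (CIDs) wrap the digest with extra metadata and a different text encoding. The function name `content_id` is hypothetical.

```python
import hashlib


def content_id(data: bytes) -> str:
    """Return a SHA-256 hex digest, used here as a simplified stand-in for a CID."""
    return hashlib.sha256(data).hexdigest()


first = content_id(b"hello ipfs")
second = content_id(b"hello ipfs")
changed = content_id(b"hello ipfs!")

print(first == second)   # True: identical bytes always map to the same identifier
print(first == changed)  # False: any change to the bytes changes the identifier
```

Because the identifier depends only on the bytes, any node that holds the same content can answer a request for it.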

In this tutorial, we’ll learn how to improve the speed and efficiency of our IPFS applications through garbage collection. First, we’ll cover some fundamentals like how garbage collection works and what it is used for. Then, we’ll look at both automatic and manual garbage collection, as well as garbage collection in IPFS Desktop. Let’s get started!

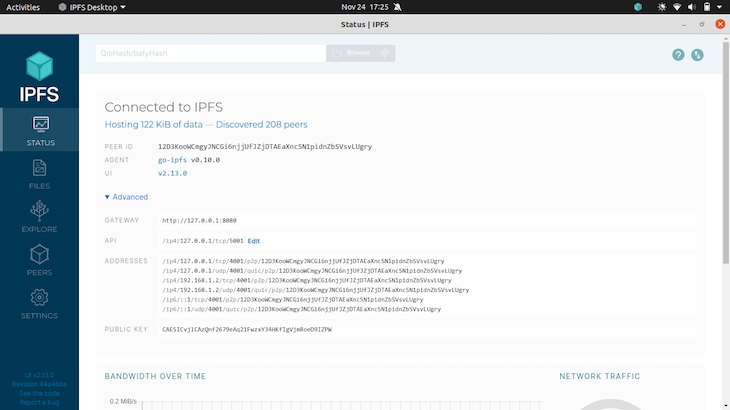

An IPFS network node is a computing device that participates in the distributed, decentralized network.

IPFS preserves computing device history by allowing users to store data while minimizing the risk of that data being lost or accidentally deleted. IPFS keeps every version of a file that a user stores, which sets up a resilient network for mirroring data in support of its goal of planetary-scale, distributed, decentralized nodes.

Every node on the IPFS network automatically caches resources that are downloaded by the users and makes those resources available for other nodes, i.e., computing devices on the distributed decentralized network. The system depends on these nodes being able to cache and share the downloaded resources within the network.

The resources of this distributed, decentralized network are made up of the resources of every computing device participating in it. These resources are finite, even at planetary scale, and they should be used as efficiently as possible to keep transactions quick.

Let’s consider one important resource: storage. Storage in the distributed, decentralized system is finite, limited by its nodes, that is, the participating computing devices. To make room for higher-priority resources, we should clear out some of the previously cached resources, like downloaded files, webpages, or lookups. The process of cleaning unwanted items out of the system storage is known as garbage collection.

In general, garbage collection refers to collecting, labeling, and removing unwanted or empty resources scattered over a network. Garbage collection is an automatic type of resource management used widely throughout software development. The garbage collectors, often referred to as gc’s, reclaim resources occupied by allocated objects that are no longer required or not in use. The IPFS system also runs scheduled garbage collection.

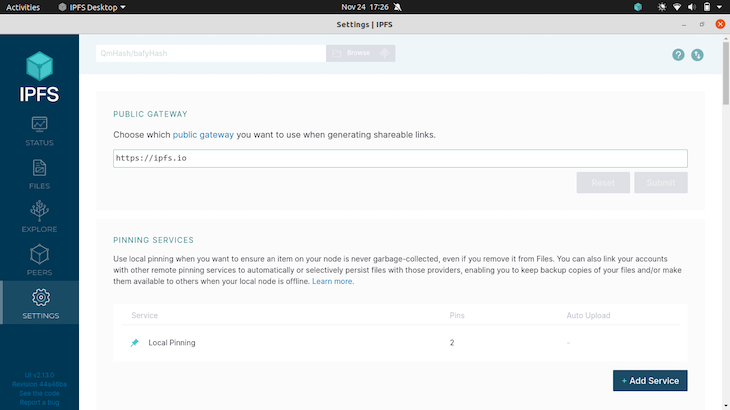

Let’s imagine that a user wants to store a mirror of LogRocket’s JavaScript SDK API on the IPFS system, ensuring that the required data persists, is permanently stored, and won’t be deleted during garbage collection. In IPFS terms, this is referred to as pinning to one or more IPFS nodes.

Pinning establishes control over the resources. In our case, it is storage, and more specifically, disk space. The established control is used to pin any unit or content required by the user or participating node, keeping it on IPFS permanently.

Every IPFS node has its own independent set of pins, and every user can decide what should be pinned on their node. If a user has multiple nodes, they can coordinate pinning using IPFS Cluster.

In our example, IPFS uses garbage collection to free up storage and disk space on the IPFS node by deleting data that is no longer required.
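The relationship between pinning and garbage collection can be sketched as a small mark-and-sweep model. This is an illustration of the general idea under simplifying assumptions, not the actual IPFS implementation: the block store is a plain dictionary, the identifiers are made-up strings, and the names `reachable` and `collect_garbage` are hypothetical.

```python
from typing import Dict, List, Set

# Hypothetical block store: identifier -> identifiers of linked child blocks.
Blocks = Dict[str, List[str]]


def reachable(blocks: Blocks, pins: Set[str]) -> Set[str]:
    """Mark phase: collect every stored block reachable from a pinned root."""
    marked: Set[str] = set()
    stack = [pin for pin in pins if pin in blocks]
    while stack:
        cid = stack.pop()
        if cid in marked:
            continue
        marked.add(cid)
        stack.extend(child for child in blocks[cid] if child in blocks)
    return marked


def collect_garbage(blocks: Blocks, pins: Set[str]) -> List[str]:
    """Sweep phase: delete unmarked blocks and return their identifiers."""
    keep = reachable(blocks, pins)
    removed = sorted(cid for cid in blocks if cid not in keep)
    for cid in removed:
        del blocks[cid]
    return removed


store: Blocks = {
    "sdk-root": ["sdk-chunk-1", "sdk-chunk-2"],  # the pinned SDK mirror
    "sdk-chunk-1": [],
    "sdk-chunk-2": [],
    "cached-page": [],  # cached earlier, never pinned
}
print(collect_garbage(store, {"sdk-root"}))  # ['cached-page']
```

In this model, pinning the root also protects the chunks it links to, while the unpinned cached page is reclaimed.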

Inside of /home/username/.config/IPFS Desktop, run the code below:

    cat config.json
    {
      "ipfsConfig": {
        "type": "go",
        "path": "/home/username/.ipfs",
        "flags": [
          "--agent-version-suffix=desktop",
          "--migrate",
          "--enable-gc",
          "--routing=dhtclient"
        ]
      },

You’ll find the --enable-gc flag set.
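If you would rather check for the flag programmatically, a short Python sketch like the following could work. The `SAMPLE` string is a trimmed, illustrative copy of the listing above, closed off so that it parses as JSON, and `gc_enabled` is a hypothetical helper name.

```python
import json

# Trimmed sample based on the listing above; the real file contains more keys.
SAMPLE = """
{
  "ipfsConfig": {
    "type": "go",
    "path": "/home/username/.ipfs",
    "flags": [
      "--agent-version-suffix=desktop",
      "--migrate",
      "--enable-gc",
      "--routing=dhtclient"
    ]
  }
}
"""


def gc_enabled(config_text: str) -> bool:
    """Return True if the daemon flags in the config include --enable-gc."""
    config = json.loads(config_text)
    flags = config.get("ipfsConfig", {}).get("flags", [])
    return "--enable-gc" in flags


print(gc_enabled(SAMPLE))  # True
```

To inspect your own installation, read the contents of config.json from the directory mentioned above and pass the text to `gc_enabled`.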

There are two ways to run garbage collection in IPFS: automatically and manually. Automatic garbage collection is governed by a few parameters in the configuration file, which we’ll review now.

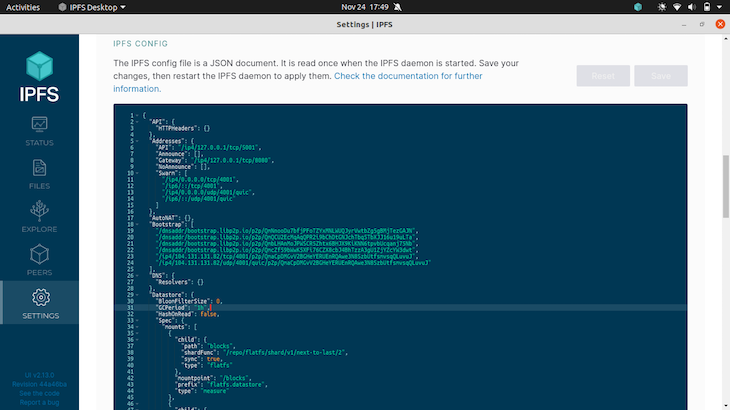

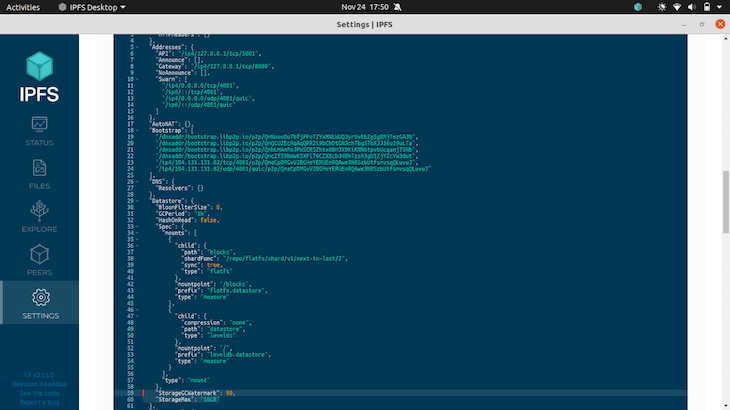

The IPFS garbage collector is configured in the Datastore section of the ipfs config file. The settings and keys related to the IPFS garbage collector are as follows:

GCPeriod: Defines how frequently garbage collection should run if automatic garbage collection is enabled. The default is set to 1 hour

StorageGCWatermark: Defines the percentage of the StorageMax value that will trigger the automatic garbage collection, as long as the daemon is running with automatic garbage collection enabled. The default value is 90

The ipfs config file is present inside /home/username/.ipfs:

    {
      "API": {
        "HTTPHeaders": {}
      },
      "Addresses": {
        "API": "/ip4/127.0.0.1/tcp/5001",
        "Announce": [],
        "Gateway": "/ip4/127.0.0.1/tcp/8080",
        "NoAnnounce": [],
        "Swarm": [
          "/ip4/0.0.0.0/tcp/4001",
          "/ip6/::/tcp/4001",
          "/ip4/0.0.0.0/udp/4001/quic",
          "/ip6/::/udp/4001/quic"
        ]
      },
      "AutoNAT": {},
      "Bootstrap": [
        "/dnsaddr/bootstrap.libp2p.io/p2p/QmNnooDu7bfjPFoTZYxMNLWUQJyrVwtbZg5gBMjTezGAJN",
        "/dnsaddr/bootstrap.libp2p.io/p2p/QmQCU2EcMqAqQPR2i9bChDtGNJchTbq5TbXJJ16u19uLTa",
        "/dnsaddr/bootstrap.libp2p.io/p2p/QmbLHAnMoJPWSCR5Zhtx6BHJX9KiKNN6tpvbUcqanj75Nb",
        "/dnsaddr/bootstrap.libp2p.io/p2p/QmcZf59bWwK5XFi76CZX8cbJ4BhTzzA3gU1ZjYZcYW3dwt",
        "/ip4/104.131.131.82/tcp/4001/p2p/QmaCpDMGvV2BGHeYERUEnRQAwe3N8SzbUtfsmvsqQLuvuJ",
        "/ip4/104.131.131.82/udp/4001/quic/p2p/QmaCpDMGvV2BGHeYERUEnRQAwe3N8SzbUtfsmvsqQLuvuJ"
      ],
      "DNS": {
        "Resolvers": {}
      },
      "Datastore": {
        "BloomFilterSize": 0,
        "GCPeriod": "1h",
        "HashOnRead": false,
        "Spec": {
          "mounts": [
            {
              "child": {
                "path": "blocks",
                "shardFunc": "/repo/flatfs/shard/v1/next-to-last/2",
                "sync": true,
                "type": "flatfs"
              },
              "mountpoint": "/blocks",
              "prefix": "flatfs.datastore",
              "type": "measure"
            },
            {
              "child": {
                "compression": "none",
                "path": "datastore",
                "type": "levelds"
              },
              "mountpoint": "/",
              "prefix": "leveldb.datastore",
              "type": "measure"
            }
          ],
          "type": "mount"
        },
        "StorageGCWatermark": 90,
        "StorageMax": "10GB"
      },
      "Discovery": {
        "MDNS": {
          "Enabled": true,
          "Interval": 10
        }
      },
      .......
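To see how StorageGCWatermark and StorageMax combine, the Python sketch below works through the arithmetic for the values in the config above. It rests on two assumptions: sizes such as "10GB" use decimal units (1 GB = 10^9 bytes), and collection is due once usage strictly exceeds the watermark. The helper names are hypothetical.

```python
import json

# Decimal (SI) multipliers; an assumption about how sizes such as "10GB" are read.
UNITS = {"KB": 10**3, "MB": 10**6, "GB": 10**9, "TB": 10**12}


def parse_size(text: str) -> int:
    """Convert a size string such as "10GB" into a byte count."""
    value = text.strip().upper()
    for suffix, factor in UNITS.items():
        if value.endswith(suffix):
            return int(float(value[: -len(suffix)]) * factor)
    return int(value.rstrip("B"))  # plain byte count, e.g. "512" or "512B"


def gc_threshold(datastore: dict) -> int:
    """Bytes of repo usage above which automatic collection would be due."""
    storage_max = parse_size(datastore["StorageMax"])
    return storage_max * datastore["StorageGCWatermark"] // 100


def should_collect(repo_bytes: int, datastore: dict) -> bool:
    """Check performed once per GCPeriod in this simplified model."""
    return repo_bytes > gc_threshold(datastore)


datastore = json.loads(
    '{"GCPeriod": "1h", "StorageGCWatermark": 90, "StorageMax": "10GB"}'
)
print(gc_threshold(datastore))                   # 9000000000
print(should_collect(9_500_000_000, datastore))  # True
print(should_collect(4_000_000_000, datastore))  # False
```

With the defaults shown, a repo would need to grow past roughly 9 GB before the hourly check reclaims anything.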

ipfs repo gc performs a garbage collection sweep on the local set of stored objects, removing the ones that are not pinned to reclaim disk space.

To manually begin garbage collection, run the code below:

    ipfs repo gc
    removed QmWAtenQY9McR3bE3QCad8tAeCeDqFHzQVtTKZoZC5jwTi
    removed QmUB3JuaedWV8MVCfkYo3iUHsBVezD6RKhi4Uh916YEmA4
    removed QmPsArmopVpaiMF6MetLP89GfAhCNxGja141f58FPtXWrs
    removed QmdDFu5hKF6yRyS8msPKaxYcaUwaoW3RRWV2Nv8ZjGzY5e
    removed QmX4vsv8KLGhV2bj873cMZjqGUQqFgWtvsiNVfisrmKjT7
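If you want to act on the result in a script, for example to log how many objects were reclaimed, the output can be parsed line by line. The Python sketch below assumes the output format shown above, one "removed" entry per object, and uses a hypothetical helper named `removed_cids`.

```python
from typing import List


def removed_cids(gc_output: str) -> List[str]:
    """Extract identifiers from lines of the form 'removed <identifier>'."""
    cids = []
    for line in gc_output.splitlines():
        parts = line.split()
        if len(parts) == 2 and parts[0] == "removed":
            cids.append(parts[1])
    return cids


# Two of the lines from the example output above.
sample = """\
removed QmWAtenQY9McR3bE3QCad8tAeCeDqFHzQVtTKZoZC5jwTi
removed QmUB3JuaedWV8MVCfkYo3iUHsBVezD6RKhi4Uh916YEmA4
"""
print(len(removed_cids(sample)))  # 2
```

On a machine with IPFS installed, the text could come from `subprocess.run(["ipfs", "repo", "gc"], capture_output=True, text=True).stdout` instead of the sample string.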

To enable automatic garbage collection, use the --enable-gc command flag while starting the IPFS daemon:

    ipfs daemon --enable-gc
    > Initializing daemon...
    > go-ipfs version: 0.10.0
    > Repo version: 10
    > ...

If ipfs daemon is already running, it might return the following error:

    ipfs daemon --enable-gc
    > Error: ipfs daemon is running. please stop it to run this command

If the error occurs, shut down the running daemon and start it again with the --enable-gc flag:

    ipfs shutdown
    ipfs daemon --enable-gc
    > Initializing daemon...
    > go-ipfs version: 0.10.0
    > Repo version: 10
    > ...

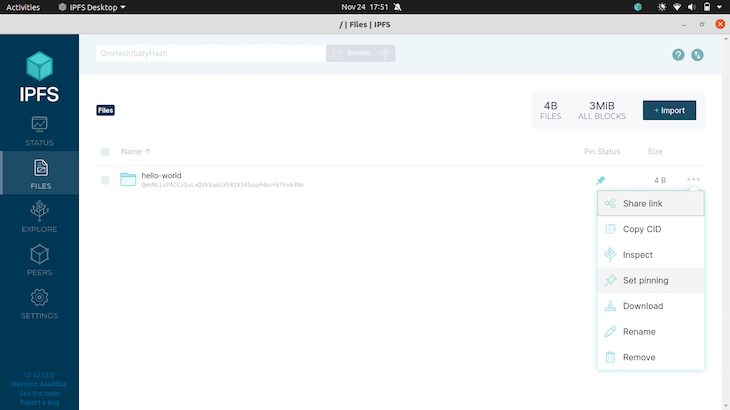

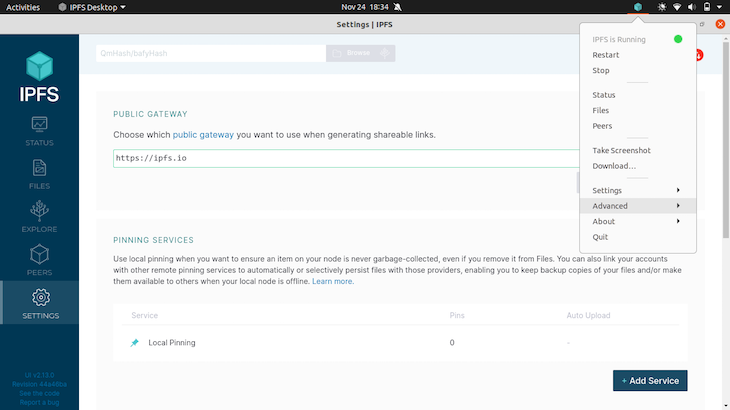

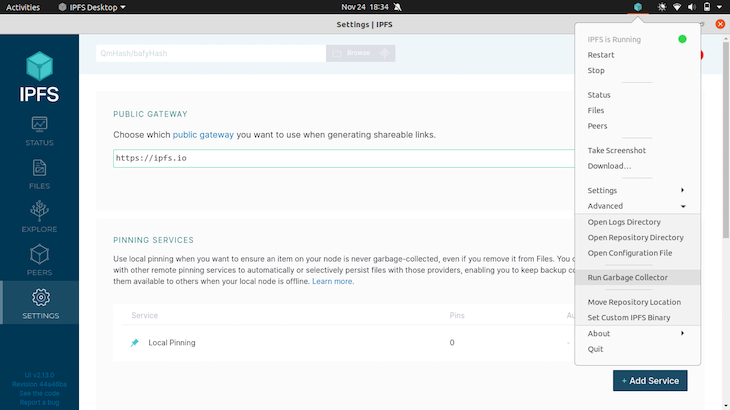

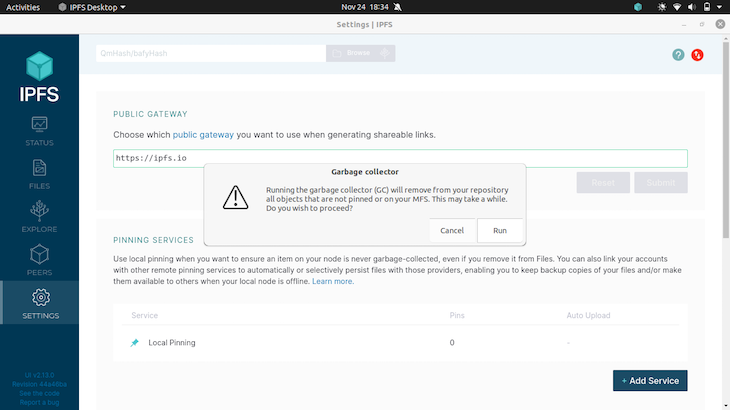

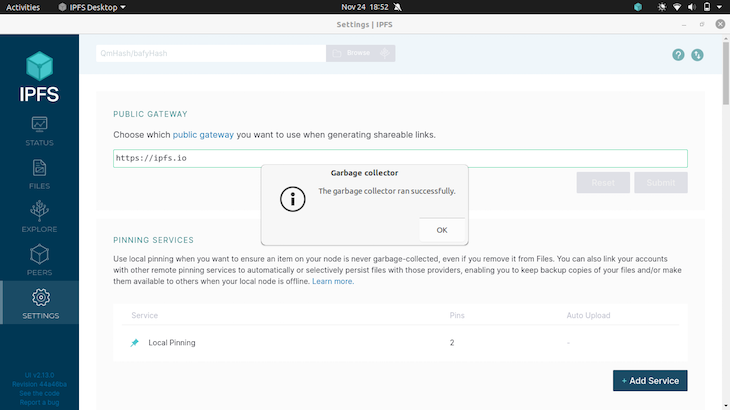

In IPFS Desktop, garbage collection can be triggered by clicking on the taskbar icon on the IPFS Desktop application. Go to Advanced → Run Garbage Collector:

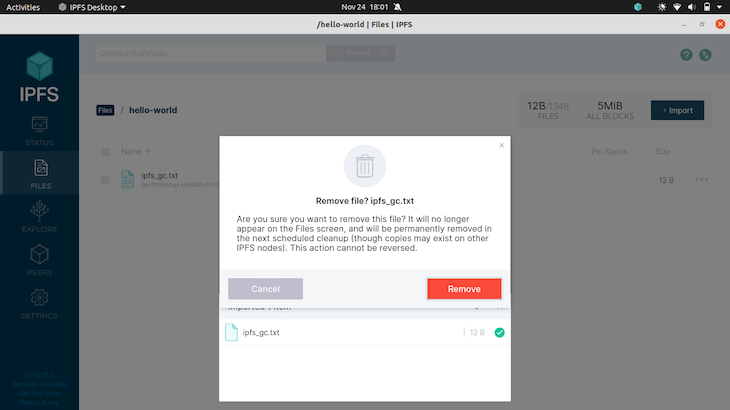

If you added a file to the IPFS system but want to remove it, make sure that the file is no longer pinned and that its blocks have actually been removed, which you can verify using ipfs repo gc.

Garbage collection can also be run across a cluster in the IPFS network:

    ipfs-cluster-ctl --enc=json --debug ipfs gc
    2021-11-19T13:27:06.210+0200 DEBUG cluster-ctl ipfs-cluster-ctl/main.go:143 debug level enabled
    2021-11-19T13:27:06.211+0200 DEBUG apiclient client/request.go:62 POST: http://127.0.0.1:9094/ipfs/gc?local=false

The endpoint is POST /ipfs/gc, which will trigger garbage collection on all peers in the cluster sequentially. To trigger garbage collection only in the IPFS peer associated with the cluster peer receiving the request, you should add ?local=true.
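As a rough illustration, the request could be built from Python with the standard library. The base URL is taken from the debug output above and may differ in your setup, and `gc_request` is a hypothetical helper. The sketch only constructs the request and does not send it.

```python
from urllib.parse import urlencode
from urllib.request import Request


def gc_request(base_url: str, local: bool = False) -> Request:
    """Build a POST request for the cluster garbage collection endpoint."""
    query = urlencode({"local": "true" if local else "false"})
    return Request(f"{base_url.rstrip('/')}/ipfs/gc?{query}", method="POST")


req = gc_request("http://127.0.0.1:9094", local=True)
print(req.get_method(), req.full_url)
# POST http://127.0.0.1:9094/ipfs/gc?local=true
```

Passing the request to `urllib.request.urlopen` would send it to a running cluster peer. Leaving `local` at its default targets every peer in the cluster.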

Now, you should be able to perform garbage collection in your own IPFS application. Under the circumstances detailed above, you can free up space for more high-priority resources and speed up your application overall.

In this tutorial, we covered the fundamentals of what garbage collection is and why it is important. We also covered the available options for IPFS garbage collection, including automatic, manual, scheduled, and IPFS Desktop garbage collection. I hope you enjoyed this tutorial!

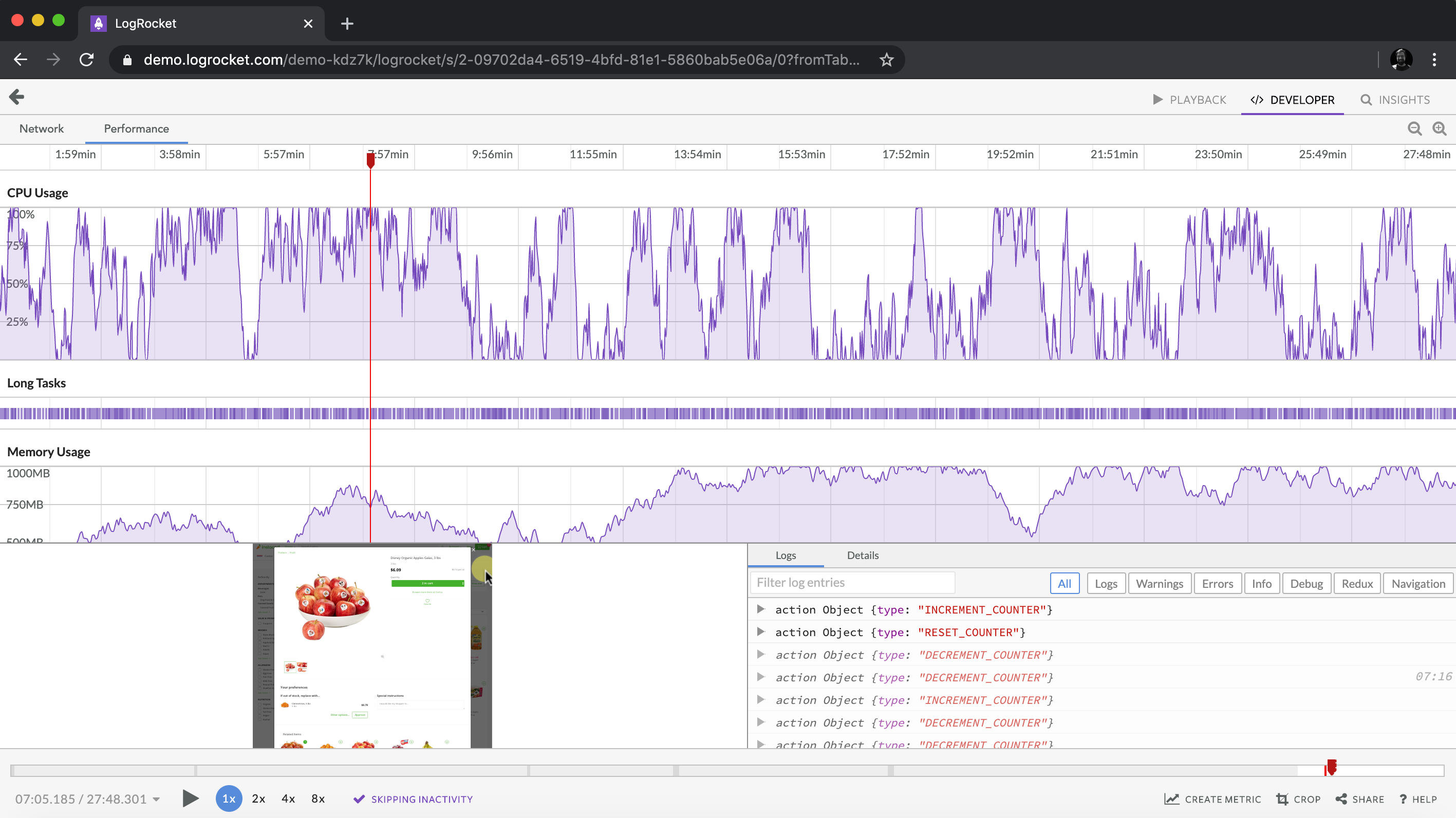
