Editor’s note: This post was last updated 13 July 2022 to reflect Deno v1.22.0 and Node.js v18.x.

Created by the minds behind Node.js, Deno is a JavaScript runtime that is steadily gaining traction among developers. By addressing areas where Node.js fell short, such as security, modules, and dependency management, Deno is proving to be as powerful as its predecessor.

Built on top of the robust Google V8 Engine with out-of-the-box TypeScript support, Deno also supports JavaScript. In this article, we’ll use JavaScript to set up a simple app with Deno.

Deno was designed around a few principles. First off, it’s secure by default, meaning that code runs in a sandboxed environment. There’s no access from the runtime to things like the network or file system. When your code tries to access these resources, you’re prompted to allow the action.

Deno loads modules from URLs, like the browser, allowing you to use decentralized code as modules and import them directly into your source code without having to worry about registry centers. Because it relies on standard ES modules, code written this way is also largely browser-compatible.

Finally, Deno is TypeScript-based. If you already work with TypeScript, it’s perfect for you; it’s very straightforward and needs no extra settings. If you don’t work with TypeScript, that’s no problem. You can also use it with plain JavaScript.

You can read more about Deno in its official documentation. In this article, we’ll focus more on the how to. Specifically, we’ll cover how to create a REST API from scratch using only JavaScript, Deno, and a connection to a Postgres database. The application we’ll develop is a basic CRUD over a domain of different beers.

First, you’ll need to set up all the required tools. For this article, you’ll need:

Installing Deno is very simple. However, because Deno is constantly undergoing updates, we’ll focus this article on version 1.22.0, so it’ll always work no matter what new features are released.

For this, run the following command:

With Shell:

curl -fsSL https://deno.land/x/install/install.sh | sh -s v1.22.0

With PowerShell:

$v="1.22.0"; iwr https://deno.land/x/install/install.ps1 -useb | iex

Then, run the command deno --version to check if the installation worked. You should see something like this:

deno 1.22.0 (release, x86_64-apple-darwin)

v8 10.0.139.17

typescript 4.6.2

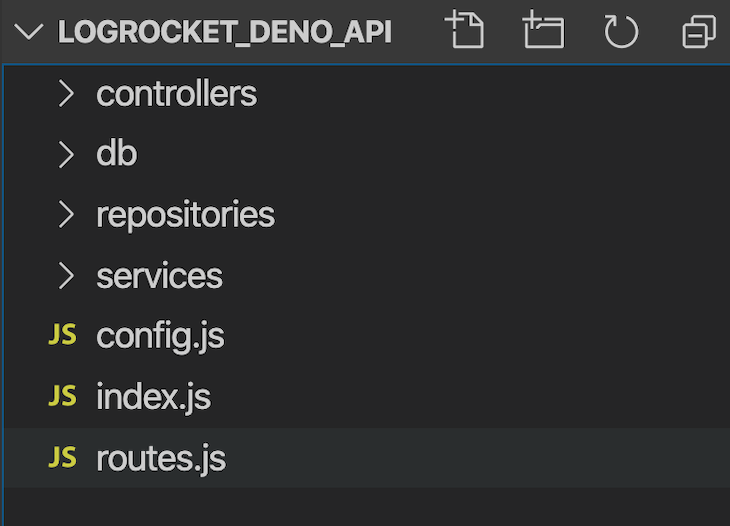

Next, let’s create the project structure, including initial files and folders. Inside a folder of your preference, create the same structure as seen in the image below:

controllers holds the JavaScript files that will handle the arriving requests, the calls to the services and layers below, and finally, the delivery of the responses. The request and response objects are provided by the web framework, so you don’t need to worry about handling requests and responses manually.

db is the folder hosting our SQL creation script and the direct connection to our Postgres database. repositories holds the JavaScript files that manage the database operations. The logic for each create, read, update, or delete lives in this folder.

Finally, services are the files that will handle the business logic of our operations, like validations, transformations over the data, and more.

Let’s start with the code for our first and most important file, index.js. Take a look at the following code:

import { Application } from "https://deno.land/x/oak/mod.ts";

import { APP_HOST, APP_PORT } from "./config.js";

import router from "./routes.js";

import _404 from "./controllers/404.js";

import errorHandler from "./controllers/errorHandler.js";

const app = new Application();

app.use(errorHandler);

app.use(router.routes());

app.use(router.allowedMethods());

app.use(_404);

console.log(`Listening on port:${APP_PORT}...`);

await app.listen(`${APP_HOST}:${APP_PORT}`);

We need a web framework to deal with the details of the request and response handling, thread management, errors, etc. For Node.js, it’s common to use Express or Koa. However, Deno doesn’t support Node.js libraries.

We’ll use another library inspired by Koa called Oak, a middleware framework for Deno’s net server. Note that this article was written against Oak v10.6.0 and Deno 1.22.0, but the import above doesn’t pin a version. If you don’t provide a version in the URL (for example, https://deno.land/x/oak@v10.6.0/mod.ts), Deno will always fetch the latest one, which can have breaking changes and mess up your project. So be careful!

Oak’s middleware router was, in turn, inspired by koa-router. Its usage is very similar to Express, as you can see from the code listing. In the first line, we’re importing the TypeScript module directly from the deno.land URL.

Later, we’ll further configure all of the imports. In Oak, the Application class is where everything starts.

We instantiate it and add the error handler, the routing system, and the 404 fallback, and, ultimately, call the listen() method to start the server, passing the host and the port as a single address string. Below is the code for config.js; place it in the root of the project:

export const APP_HOST = Deno.env.get("APP_HOST") || "127.0.0.1";

export const APP_PORT = Deno.env.get("APP_PORT") || 4000;
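One detail worth keeping in mind: environment variables are always strings, so APP_PORT ends up as a string when the variable is set and as the number 4000 when it is not. The following is a minimal sketch of one way to normalize it. The helper name parsePort is an assumption made for illustration and is not part of Deno or Oak.

```javascript
// Hypothetical helper: turn an environment value into a valid TCP port number.
function parsePort(raw, fallback) {
  // Fall back to the default when the variable is missing or empty.
  if (raw === undefined || raw === "") return fallback;
  const port = Number(raw);
  // Reject non-integers and values outside the valid port range.
  if (!Number.isInteger(port) || port < 1 || port > 65535) {
    throw new Error(`Invalid port: ${raw}`);
  }
  return port;
}

console.log(parsePort("8080", 4000)); // 8080
console.log(parsePort(undefined, 4000)); // 4000
```

In config.js, this could be called as parsePort(Deno.env.get("APP_PORT"), 4000), so a mistyped value fails at startup instead of surfacing later.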

Very familiar so far, isn’t it? Let’s work on the routing now. Like with Express, we need to establish the routers that will redirect our requests to the proper JavaScript functions that, in turn, will handle them, store, or search for data and return the results.

Take a look at the code for routes.js, which is also in the root folder:

import { Router } from "https://deno.land/x/oak/mod.ts";

import getBeers from "./controllers/getBeers.js";

import getBeerDetails from "./controllers/getBeerDetails.js";

import createBeer from "./controllers/createBeer.js";

import updateBeer from "./controllers/updateBeer.js";

import deleteBeer from "./controllers/deleteBeer.js";

const router = new Router();

router

.get("/beers", getBeers)

.get("/beers/:id", getBeerDetails)

.post("/beers", createBeer)

.put("/beers/:id", updateBeer)

.delete("/beers/:id", deleteBeer);

export default router;

So far, nothing should be working yet. Don’t worry, we still need to configure the rest of the project before starting it up.

This last listing shows that Oak will also take care of the routing system for us. More specifically, we instantiate the Router class and use its corresponding methods for each HTTP GET, POST, PUT, and DELETE operation.

The imports at the beginning of the file correspond to each of the functions that will handle the respective request. You can decide whether you prefer it this way, or if you’d rather have everything in the same controller file.
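To make the :id placeholder in the routes less magical, here is a rough sketch of how a router can match a path against such a pattern and extract the parameters. This is an illustration only, not Oak's actual implementation, and the matchRoute function is a hypothetical name.

```javascript
// Illustrative only: match a path against a pattern such as "/beers/:id".
// Returns an object of captured parameters, or null when the path does not match.
function matchRoute(pattern, path) {
  const patternParts = pattern.split("/").filter(Boolean);
  const pathParts = path.split("/").filter(Boolean);
  if (patternParts.length !== pathParts.length) return null;

  const params = {};
  for (let i = 0; i < patternParts.length; i++) {
    const expected = patternParts[i];
    if (expected.startsWith(":")) {
      // A ":name" segment captures whatever appears in that position.
      params[expected.slice(1)] = decodeURIComponent(pathParts[i]);
    } else if (expected !== pathParts[i]) {
      return null;
    }
  }
  return params;
}

console.log(matchRoute("/beers/:id", "/beers/42")); // an object whose id is the string "42"
console.log(matchRoute("/beers/:id", "/beers")); // null
```

Note that the captured value is a string, which is why the controllers later read params.id without assuming it is a number.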

Before we proceed with more JavaScript code, we need to set up the database. Make sure you have the Postgres server installed and running at your localhost. Connect to it and create a new database called logrocket_deno.

In the public schema, run the following create script:

CREATE TABLE IF NOT EXISTS beers (

id SERIAL PRIMARY KEY,

name VARCHAR(50) NOT NULL,

brand VARCHAR(50) NOT NULL,

is_premium BOOLEAN,

registration_date TIMESTAMP

)

This script is also available at the /db folder of my version of the project. It creates a new table called beers to store the values of our CRUD.

Note that the primary key is auto-incremented via the SERIAL keyword to facilitate our job with the ID generation strategy. Now, let’s create the file that will handle the connection to Postgres. In the db folder, create the database.js file and add the following code:

import { Client } from "https://deno.land/x/postgres/mod.ts";

class Database {

constructor() {

this.connect();

}

async connect() {

this.client = new Client({

user: "postgres",

database: "logrocket_deno",

hostname: "127.0.0.1",

password: "postgres",

port: 5432

});

await this.client.connect();

}

}

export default new Database().client;

Make sure to adjust the connection settings according to your Postgres configurations. The config is pretty simple.

deno-postgres is a PostgreSQL driver for Deno, based on node-postgres and pq. If you’re a Node.js user, you’ll be familiar with the syntax. Just be aware that the settings change slightly depending on the database you use.

We’re passing the settings object as a parameter to the Client constructor. In MySQL, however, it goes directly into the connect() function.
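If you would rather not hardcode the connection settings, one option is to build them from environment variables with the article's values as defaults. The sketch below is an assumption-laden example: the helper buildDbConfig and the variable names DB_USER, DB_NAME, DB_HOST, DB_PASSWORD, and DB_PORT are made up for illustration.

```javascript
// Hypothetical helper: build the connection options from an environment lookup.
// `getEnv` is any function that maps a variable name to a string or undefined.
function buildDbConfig(getEnv) {
  return {
    user: getEnv("DB_USER") ?? "postgres",
    database: getEnv("DB_NAME") ?? "logrocket_deno",
    hostname: getEnv("DB_HOST") ?? "127.0.0.1",
    password: getEnv("DB_PASSWORD") ?? "postgres",
    // Environment values are strings, so convert the port explicitly.
    port: Number(getEnv("DB_PORT") ?? 5432),
  };
}

// Example with a plain object standing in for the environment.
const fakeEnv = { DB_HOST: "db.internal", DB_PORT: "6543" };
const config = buildDbConfig((name) => fakeEnv[name]);
console.log(config.hostname, config.port); // db.internal 6543
```

In database.js, the lookup function could be (name) => Deno.env.get(name), which requires running the app with the --allow-env permission.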

Inside the repositories folder, we’ll create the beerRepo.js file, which will host the repositories to access the database through the file we’ve created above:

import client from "../db/database.js";

class BeerRepo {

create(beer) {

// RETURNING id hands the generated primary key back to the caller

return client.queryArray`INSERT INTO beers (name, brand, is_premium, registration_date) VALUES (${beer.name}, ${beer.brand}, ${beer.is_premium}, ${beer.registration_date}) RETURNING id`;

}

selectAll() {

return client.queryArray`SELECT * FROM beers ORDER BY id`;

}

selectById(id) {

return client.queryArray`SELECT * FROM beers WHERE id = ${id}`;

}

async update(id, beer) {

// Load the stored row as an object so omitted fields can fall back to it

const { rows } = await client.queryObject`SELECT * FROM beers WHERE id = ${id}`;

const latestBeer = rows[0] ?? {};

return client.queryArray`UPDATE beers SET name = ${beer.name !== undefined ? beer.name : latestBeer.name}, brand = ${beer.brand !== undefined ? beer.brand : latestBeer.brand}, is_premium = ${beer.is_premium !== undefined ? beer.is_premium : latestBeer.is_premium} WHERE id = ${id}`;

}

delete(id) {

return client.queryArray`DELETE FROM beers WHERE id = ${id}`;

}

}

export default new BeerRepo();

Import the database.js file that connects to the database. The rest of the file is just database-like CRUD operations.

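Like every other major database framework, deno-postgres helps prevent SQL injection by letting us pass parameters to our SQL queries, including through tagged template strings. With client.queryArray, the interpolated values are handed to the driver as query arguments instead of being concatenated into the SQL text.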
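To see why a tagged template is safer than plain string concatenation, consider the rough sketch below. It is not the driver's code; the sql tag function is a hypothetical stand-in that shows how a tag receives the literal text and the interpolated values separately, so the values can travel as parameters.

```javascript
// Illustrative tag function: NOT deno-postgres's implementation.
// It turns a template literal into parameterized SQL text plus an argument list.
function sql(strings, ...values) {
  let text = strings[0];
  values.forEach((_, i) => {
    // Each interpolated value becomes a numbered placeholder: $1, $2, ...
    text += `$${i + 1}${strings[i + 1]}`;
  });
  return { text, args: values };
}

const id = "1; DROP TABLE beers";
const query = sql`SELECT * FROM beers WHERE id = ${id}`;
console.log(query.text); // SELECT * FROM beers WHERE id = $1
console.log(query.args); // the malicious string stays a plain value in the argument list
```

Because the hostile input never becomes part of the SQL text, the database treats it as data rather than as a statement to execute.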

We’ll use the queryArray() function every time we want to access or alter data in the database. We’ll also pay special attention to our update method. That’s one of many possible strategies you can use to update only what the user is passing.

First, we’re fetching the beer by its ID and storing it in a local variable. Then, by checking whether each beer attribute is present in the request payload, we can decide whether to use it or the stored value.
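The same "use what was sent, otherwise keep what is stored" idea can be expressed as a small standalone function. This is a sketch with a hypothetical helper name, mergeDefined, and made-up sample values.

```javascript
// Hypothetical helper: overlay only the fields the client actually sent.
function mergeDefined(stored, patch) {
  const merged = { ...stored };
  for (const [key, value] of Object.entries(patch)) {
    // Skip undefined so a missing field never erases the stored value.
    if (value !== undefined) merged[key] = value;
  }
  return merged;
}

const stored = { name: "Pilsner", brand: "Acme", is_premium: false };
const patch = { name: undefined, brand: "Acme Brewing", is_premium: undefined };
console.log(mergeDefined(stored, patch));
// name stays "Pilsner", brand becomes "Acme Brewing", is_premium stays false
```

A plain object spread such as { ...stored, ...patch } would not be enough here, because properties that are explicitly undefined still overwrite the stored ones.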

Now that our repository is set, we can move on to the services layer. Inside of the services folder, create the beerService.js file and add the following code:

import beerRepo from "../repositories/beerRepo.js";

export const getBeers = async () => {

const beers = await beerRepo.selectAll();

return beers.rows.map(beer => {

return beers.rowDescription.columns.reduce((acc,el, i) => {

acc[el.name] = beer[i];

return acc

},{});

});

};

export const getBeer = async beerId => {

const beers = await beerRepo.selectById(beerId)

if (!beers || beers.rows.length === 0) return null;

return beers.rowDescription.columns.reduce((acc, el, i) => {

acc[el.name] = beers.rows[0][i];

return acc

},{});

};

export const createBeer = async beerData => {

const newBeer = {

name: String(beerData.name),

brand: String(beerData.brand),

is_premium: "is_premium" in beerData ? Boolean(beerData.is_premium) : false,

registration_date: new Date()

};

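// The insert can return the generated id (via RETURNING id); undefined otherwise

const result = await beerRepo.create(newBeer);

return result?.rows?.[0]?.[0];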

};

export const updateBeer = async (beerId, beerData) => {

const beer = await getBeer(beerId);

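if (!beer || Object.keys(beer).length === 0) {

throw new Error("Beer not found");

}

// Ignore fields the client did not send so they don't overwrite stored values

const sentFields = Object.fromEntries(Object.entries(beerData).filter(([, value]) => value !== undefined));

const updatedBeer = { ...beer, ...sentFields };

await beerRepo.update(beerId, updatedBeer);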

};

export const deleteBeer = async beerId => {

await beerRepo.delete(beerId);

};

This is one of the most important files where we interface with the repository and receive calls from the controllers.

Each method also corresponds to one of the CRUD operations. Because database access is inherently asynchronous, every repository call returns a promise. Therefore, we need to await the result in our async functions.

In addition, the return is an object that does not correspond to our exact business object Beer, so we have to transform it into an understandable JSON object. getBeers will always return an array, and getBeer will return a single object, or null when no beer matches. The structure of both functions is very similar.

The beers result is an array of arrays because it encapsulates a list of possible returns for our query. Each return is an array as well, given that each column value comes within this array. In turn, rowDescription stores the information, including the names, of each column the results have.
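The transformation described above can be pulled into one reusable function. The sketch below uses hand-written sample data in the same shape (column descriptors with a name, and rows as arrays); the rowsToObjects name is hypothetical.

```javascript
// Sketch: convert array-shaped rows into objects keyed by column name.
// `columns` mirrors rowDescription.columns, `rows` mirrors result.rows.
function rowsToObjects(columns, rows) {
  return rows.map((row) =>
    Object.fromEntries(columns.map((column, i) => [column.name, row[i]]))
  );
}

// Hand-written sample data in the shape described above.
const columns = [{ name: "id" }, { name: "name" }, { name: "brand" }];
const rows = [
  [1, "Pilsner", "Acme"],
  [2, "Stout", "Acme"],
];
console.log(rowsToObjects(columns, rows));
// two objects, each with id, name, and brand properties
```

The driver also offers a queryObject method that returns rows as objects directly, which may remove the need for this mapping if you prefer it over queryArray.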

Some other features, like validations, also take place here. In the updateBeer function, you can see that we’re always checking if the given beerId exists in the database before proceeding with updating. Otherwise, an error will be thrown. Feel free to add whichever validations or additional code you want.

Now, we’ll create the handlers of our requests and responses. Input and output validations belong in this layer. Let’s start with the error management files that index.js imports.

In the controllers folder, create the files 404.js and errorHandler.js and add the respective code snippets below:

//404.js

export default ({ response }) => {

response.status = 404;

response.body = { msg: "Not Found" };

};

//errorHandler.js

export default async ({ response }, nextFn) => {

try {

await nextFn();

} catch (err) {

response.status = 500;

response.body = { msg: err.message };

}

};

The first function is a fallback middleware. Because it’s registered last in index.js, it only runs when no route has handled the request, and it answers with HTTP 404.

The second function wraps the rest of the middleware chain in a try/catch block. It will take care of any unexpected errors that may happen in the application lifecycle, treat them like HTTP 500, and send the error message in the response body.
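The error handler only works because it is registered first and awaits everything that runs after it. The following sketch shows the idea with a tiny Koa-style composition function. It is a simplified illustration, not Oak's real code, and compose and failingController are hypothetical names.

```javascript
// Illustrative sketch of Koa-style middleware composition, not Oak's actual code.
// Each middleware receives the context and a `next` function that runs the rest of the chain.
function compose(middlewares) {
  return function run(context) {
    const dispatch = (i) => {
      if (i >= middlewares.length) return Promise.resolve();
      try {
        return Promise.resolve(middlewares[i](context, () => dispatch(i + 1)));
      } catch (err) {
        return Promise.reject(err);
      }
    };
    return dispatch(0);
  };
}

// Same shape as errorHandler.js: wrap everything downstream in try/catch.
const errorHandler = async ({ response }, next) => {
  try {
    await next();
  } catch (err) {
    response.status = 500;
    response.body = { msg: err.message };
  }
};

// A stand-in for a controller that fails.
const failingController = async () => {
  throw new Error("Database unavailable");
};

const context = { response: {} };
compose([errorHandler, failingController])(context).then(() => {
  console.log(context.response.status, context.response.body.msg);
});
```

If the error handler were registered after the routes instead, the rejection would never pass through its try/catch, which is why the order of the app.use() calls matters.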

Now, let’s get to the controllers. Let’s start with the getters. Below is the content for getBeers.js:

import { getBeers } from "../services/beerService.js";

export default async ({ response }) => {

response.body = await getBeers();

};

Each controller operation must be async. It receives Oak’s context object, from which we destructure the request, the response, or both. Oak preprocesses these objects before they arrive at the controller and before the response goes back to the client caller. Regardless of the type of logic you put in there, don’t forget to set the response body since it is the result of your request.

Below is the code for getBeerDetails.js:

import { getBeer } from "../services/beerService.js";

export default async ({ params, response }) => {

const beerId = params.id;

if (!beerId) {

response.status = 400;

response.body = { msg: "Invalid beer id" };

return;

}

const foundBeer = await getBeer(beerId);

if (!foundBeer) {

response.status = 404;

response.body = { msg: `Beer with ID ${beerId} not found` };

return;

}

response.body = foundBeer;

};

This content is similar to our content for getBeers.js, except for the validations. Because we’re receiving the beerId as a parameter, it’s good to check if it’s filled. If the value for that parameter doesn’t exist, send a corresponding message in the body. Next, we’ll work on the creation file; below is the content for the createBeer.js file:

import { createBeer } from "../services/beerService.js";

export default async ({ request, response }) => {

if (!request.hasBody) {

response.status = 400;

response.body = { msg: "Invalid beer data" };

return;

}

const { name, brand, is_premium } = await request.body().value;

if (!name || !brand) {

response.status = 422;

response.body = { msg: "Incorrect beer data. Name and brand are required" };

return;

}

const beerId = await createBeer({ name, brand, is_premium });

response.body = { msg: "Beer created", beerId };

};

Again, a few validations take place to guarantee that the input data is valid regarding required fields. Validations also confirm that a body comes with the request.

The call to the createBeer service function passes an object assembled from individually destructured attributes. If the beer object grows in its number of attributes, repeating that list in every controller would not be wise to maintain.

You can come up with a model object instead, which would store each one of your beer’s attributes and be passed around the controllers and service methods. Below is the code for our updateBeer.js file:

import { updateBeer } from "../services/beerService.js";

export default async ({ params, request, response }) => {

const beerId = params.id;

if (!beerId) {

response.status = 400;

response.body = { msg: "Invalid beer id" };

return;

}

if (!request.hasBody) {

response.status = 400;

response.body = { msg: "Invalid beer data" };

return;

}

const { name, brand, is_premium } = await request.body().value;

await updateBeer(beerId, { name, brand, is_premium });

response.body = { msg: "Beer updated" };

};

As you can see, it has almost the same structure, and the difference is in the params config. Because we’re not allowing every attribute of a beer object to be updated, we limit which ones will go down to the service layer. The beerId must also be the first argument since we need to identify which database element to update.
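One way to keep the list of allowed attributes in a single place is a small model module shared by the controllers. The sketch below is only a suggestion; UPDATABLE_FIELDS, pickBeerFields, and validateNewBeer are hypothetical names that do not exist in the project.

```javascript
// Hypothetical model helper: one place that knows a beer's attributes.
const UPDATABLE_FIELDS = ["name", "brand", "is_premium"];

// Keep only the attributes clients may set, dropping anything else in the payload.
function pickBeerFields(payload) {
  const beer = {};
  for (const field of UPDATABLE_FIELDS) {
    if (payload[field] !== undefined) beer[field] = payload[field];
  }
  return beer;
}

// Return a list of problems so the controller can answer with HTTP 422.
function validateNewBeer(beer) {
  const errors = [];
  if (!beer.name) errors.push("name is required");
  if (!beer.brand) errors.push("brand is required");
  return errors;
}

const payload = { name: "Stout", id: 99, registration_date: "1999-01-01" };
const beer = pickBeerFields(payload);
console.log(beer); // only the name survives; id and registration_date are dropped
console.log(validateNewBeer(beer)); // one error: brand is required
```

With a helper like this, adding an attribute means changing one array instead of editing the destructuring in every controller.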

Finally, the code for deleteBeer.js is as follows:

import { deleteBeer, getBeer } from "../services/beerService.js";

export default async ({

params,

response

}) => {

const beerId = params.id;

if (!beerId) {

response.status = 400;

response.body = { msg: "Invalid beer id" };

return;

}

const foundBeer = await getBeer(beerId);

if (!foundBeer) {

response.status = 404;

response.body = { msg: `Beer with ID ${beerId} not found` };

return;

}

await deleteBeer(beerId);

response.body = { msg: "Beer deleted" };

};

Note how similar it is to the others. Again, if you feel it is too repetitive, you can mix these controller codes into one single controller file. Doing so would reduce the amount of code because the common code would be kept together in a function, for example. Now, let’s test it.
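As an example of pulling the repeated code into one function, the ID check that appears in three controllers could look like the sketch below. The requireBeerId helper is a hypothetical name, and the context objects are plain stand-ins for what Oak passes to a controller.

```javascript
// Hypothetical shared helper: validate params.id once for every controller.
// Returns the id, or null after writing a 400 response.
function requireBeerId({ params, response }) {
  const beerId = params.id;
  if (!beerId) {
    response.status = 400;
    response.body = { msg: "Invalid beer id" };
    return null;
  }
  return beerId;
}

// Plain objects standing in for the context Oak passes to a controller.
const okContext = { params: { id: "7" }, response: {} };
const badContext = { params: {}, response: {} };
console.log(requireBeerId(okContext)); // 7
console.log(requireBeerId(badContext), badContext.response.status); // null 400
```

A controller would then start with const beerId = requireBeerId(context); followed by if (beerId === null) return;, leaving only the operation-specific logic in each file.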

To run the Deno project, go to your command line prompt. In the root folder, issue the following command:

deno run --allow-net --allow-env index.js

Remember that Deno is secure by default. To allow network access and reading environment variables, we need to grant permission explicitly, which we do with the --allow-net and --allow-env flags, respectively.

The logs will show Deno downloading all the dependencies our project needs. The message Listening on port:4000... must appear. To test the API, we’ll use the Postman utility tool, but feel free to use whichever one you prefer.

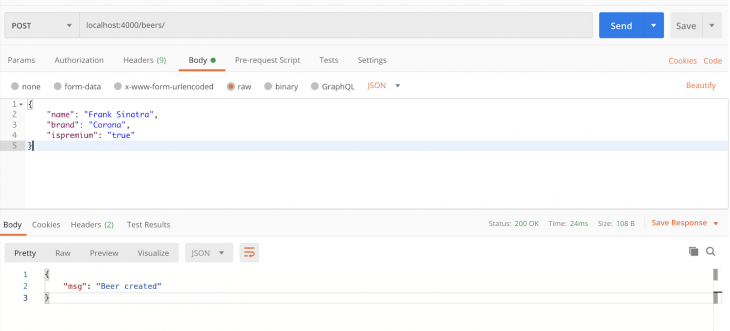

Below is an example of a POST creation in action:

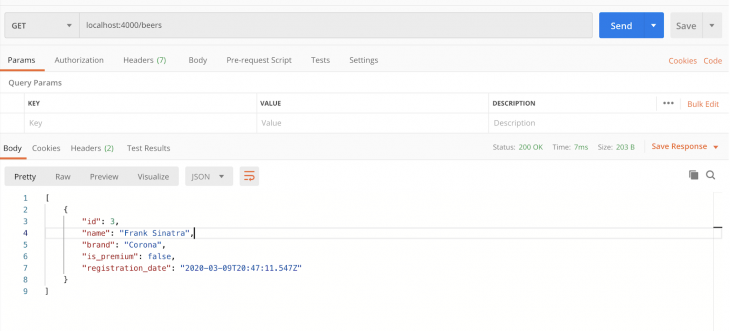

After that, go to the GET operation to list all the beers in the database:

I’ll leave the rest of the operation tests to you. You can also go to the database and check directly for the values to be inserted, updated, or deleted. The final code for this tutorial can be found at this GitHub repo.

We’ve finished a complete, functional CRUD-like API without having to use Node.js or a node_modules directory because Deno maintains the dependencies in cache. Every time you want to use a dependency, just state it through the code, and Deno will take care of downloading it. There’s no need for a package.json file.

If you want to use Deno with TypeScript, there’s no need to install TypeScript either. You can go ahead and start coding with TypeScript right away. Additionally, VS Code provides a very useful extension for Deno to help with autocompletion, module imports, formatting, and more. I hope you enjoyed this article, and be sure to leave a comment if you have any questions.


11 Replies to "How to create a REST API with Deno and Postgres"

For somebody having problems with “Deno.env it’s not a function” and/or “Uncaught InvalidData: data did not match any variant of untagged enum ArgsEnum”, Here is the solution: https://stackoverflow.com/questions/61757437/uncaught-invaliddata-data-did-not-match-any-variant-of-untagged-enum-argsenum

Thanks to https://stackoverflow.com/users/1119863/marcos-casagrande

> In order to prevent SQL injection — like every other major database framework — Deno allows us to pass parameters to our SQL queries as well.

Am I missing something or is the `beerRepo.update()` function *full* of SQL injections? Example:

query += ` SET name = ‘${beer.name}’` …

Yes, yes it is…. There’s no input sanitization whatsoever 🙈

deno run --allow-net --allow-env index.js

error: No such file or directory (os error 2)

I got this error, when i am trying run

Compile file:///home/zire/dcode/postgr/index.js

error: Uncaught AssertionError: Unexpected skip of the emit.

at Object.assert ($deno$/util.ts:33:11)

at compile ($deno$/compiler.ts:1170:7)

at tsCompilerOnMessage ($deno$/compiler.ts:1338:22)

at workerMessageRecvCallback ($deno$/runtime_worker.ts:72:33)

at file:///home/zire/dcode/postgr/__anonymous__:1:1

Found solution for my problem above I needed to downgrade deno version from v1.0.4 to v1.0.3 and from [email protected] to [email protected]

I have got to the github and gave this project a star. This project is easy to follow, to template or so. Thank you.

Hi guys, thanks very much for the comments. I’ve already addressed all of them both in the article and in the GitHub repo.

Please, let me know if there’s still any problems to you. 🙂

Great tutorial thanks! Hey would love to see a Typescript version of this project, anyone have an example of PostgreSQL + Deno + Typescript?

Hey folks, I’ve had a go at converting this project to Typescript. Made some minor improvements/changes along the way. Classes seem to cause problems when instantiating the database, “possible undefined value” errors, so I removed them as they aren’t really needed.

For any of you who are Typescript Savvy, I would love to get some feedback if I’ve gone about this the right way?

https://github.com/fredlemieux/deno-postgresql-example

Thanks!