Context engineering for IDEs is the practice of giving a coding agent the right project context before it starts exploring, planning, and editing your code. In practice, that usually means combining a repository-level AGENTS.md file with task-specific agent skills so the model understands your architecture, conventions, and constraints earlier in the session.

That matters because coding agents do not understand your codebase by default. They have to discover it by reading files, calling tools, and inferring structure. That discovery work costs tokens, slows down execution, and often leads to avoidable mistakes when key constraints are buried or never surfaced.

In this article, we’ll look at how AGENTS.md and agent skills affect agent behavior inside an IDE, where each one helps most, and what their limits look like in practice. To keep this grounded, I ran the same RBAC implementation task three ways: with no added context, with AGENTS.md, and with AGENTS.md plus a skill. The results show a clear tradeoff between speed, cost, and architectural quality.

If you’ve been following the broader wave of hands-on evaluations around agentic tooling, this fits alongside recent comparisons such as LogRocket’s look at whether splitting work across AI agents actually saves time.

The Replay is a weekly newsletter for dev and engineering leaders.

Delivered once a week, it's your curated guide to the most important conversations around frontend dev, emerging AI tools, and the state of modern software.

Context engineering is the deliberate practice of filling an LLM’s context window with the information most likely to help it solve a task correctly. In an IDE, that means giving a coding agent enough background awareness of the product, the codebase, and team conventions to make sound decisions without constantly re-discovering them.

LLMs do not automatically understand your repository. To reason well, they must inspect files, infer relationships, and piece together architecture from fragments. That process is expensive, and when the session resets, the understanding often disappears with it.

Good context engineering reduces that repeated discovery cost. Instead of rebuilding a mental model from scratch on every task, the agent starts with a clearer picture of the system it is operating in.

AGENTS.md and agent skillsA useful way to think about this is that context engineering shifts agent performance from improvisation toward controlled execution. That does not eliminate mistakes, but it does make the mistakes more legible and easier to prevent.

AGENTS.md?AGENTS.md is a Markdown file placed at the root of a repository to give a coding agent spatial awareness of the codebase. When an agent starts a session, one of its first steps is often to look for repository-level guidance files. If AGENTS.md is present and supported by the tool, it becomes part of the session context.

Think of it as the agent-facing counterpart to README.md. A good AGENTS.md explains what the project is, how it is structured, which conventions matter, and which boundaries the agent should respect.

A strong AGENTS.md usually includes:

This guidance persists in the repository across sessions, which makes it especially useful for recurring work. But it still needs to stay lean. A bloated AGENTS.md wastes context space and weakens the signal-to-noise ratio. The goal is orientation, not exhaustive documentation.

If AGENTS.md gives an agent a map of the codebase, agent skills give it reusable expertise for a specific kind of work. A skill is typically a folder that contains a SKILL.md file plus any supporting examples, templates, scripts, or reference material needed for that workflow.

You might have one skill for authentication changes, another for component scaffolding, and another for database migrations. The key difference from AGENTS.md is scope. AGENTS.md is broad and persistent. Skills are narrower and meant to load when relevant.

That progressive disclosure model is what makes skills attractive. Instead of front-loading every rule for every workflow, the agent pulls in the skill that matches the task at hand.

Skills are especially valuable when a codebase has repeatable workflows. For example, if every feature change should run a typecheck, then a lint pass, then a changelog update, a skill can package that process once so the agent can reapply it consistently.

AGENTS.md works in an IDEThe AGENTS.md file lives at the root of the project and generally does not need special configuration beyond IDE support. Many modern coding tools, including Cursor, VS Code-based environments, and terminal agents such as OpenCode, can surface this file at the start of an agent session. In this article, I primarily used OpenCode.

Claude Code uses CLAUDE.md for similar behavior rather than native AGENTS.md support. One practical workaround is to reference @AGENTS.md inside CLAUDE.md, which lets you maintain a single canonical guidance file while still exposing it to Claude Code.

When a new session begins, the agent reads its system prompt, the user prompt, and any project files it identifies as relevant. If AGENTS.md exists, it meaningfully shapes the model’s initial understanding of the repository before implementation begins.

AGENTS.mdA useful AGENTS.md tends to follow a predictable structure. Not every project needs every section, but these are the sections I find most valuable:

Patterns from repeated agent behavior are also worth capturing. If the same mistake or clarification shows up across multiple sessions, that is usually a good sign it belongs in AGENTS.md.

It is equally important to keep the file concise. As the file grows, its guidance becomes harder for the model to apply consistently, and context quality can degrade. In practice, something in the 200–400 line range often works well.

In larger repositories, especially monorepos, nested AGENTS.md files can help keep context local. Agents usually prioritize the nearest file in the directory tree, so the root file can hold global guidance while subprojects define local workflows.

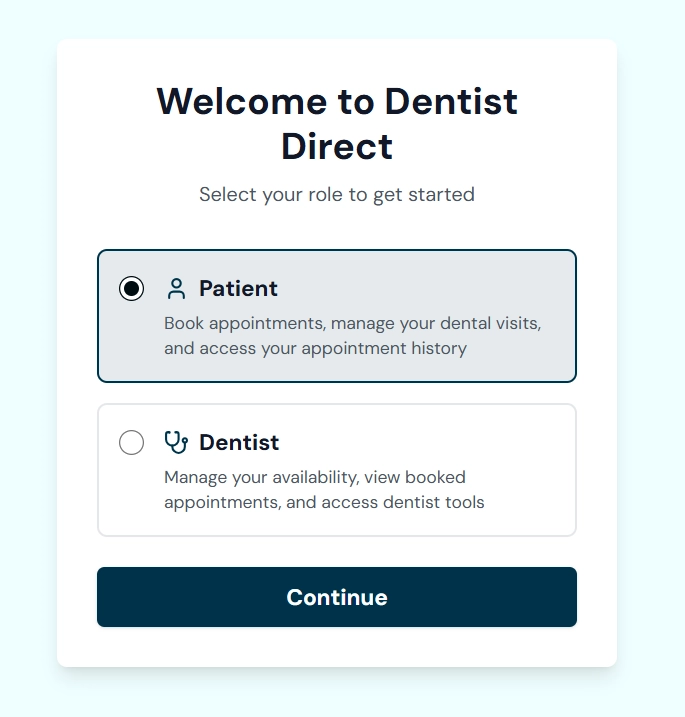

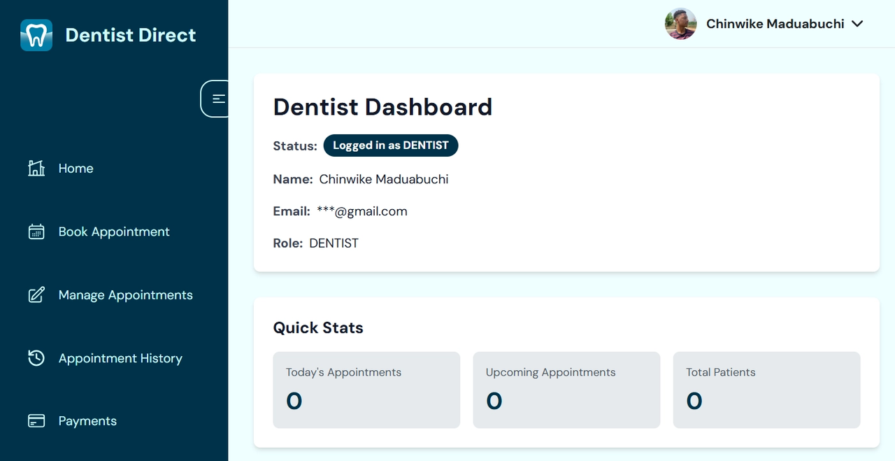

To evaluate both techniques, I ran a set of experiments on a small repository I built some time ago with a friend. It is a Next.js, TypeScript, and Auth.js project for connecting dentists with patients. The application only made it through the patient authentication flow before other priorities took over, which made it a good candidate for testing.

The task was to implement role-based access control (RBAC) so both dentists and patients could log in to separate dashboards with proper authorization. At the start of the experiment, only patients had access to a dashboard.

You can inspect the full experiment runs in the companion experiment repository, which contains the transcripts and token usage data for each session.

Each run used identical starting conditions:

AGENTS.md and skill files where relevantTo compare the runs, I tracked:

The implementation prompt is shown below:

# RBAC Implementation Plan (Onboarding Architecture)

You are working in a Next.js App Router project using Auth.js (v5) and Mongoose/MongoDB.

## Product Context

Dentist Direct is a dental appointment scheduling platform to link patients with professional dentists nearby.

Patients should be able to:

- View available appointment slots

- Book appointments with dentists

- Manage their bookings

Dentists should be able to:

- Manage their availability

- View booked appointments

- Access dentist-specific tools and controls

Currently, the application only supports patients. All users are treated the same after authentication. You must now introduce role-based access control (RBAC) to properly separate dentist and patient experiences.

There are exactly two roles: PATIENT & DENTIST

For now, focus only on the Google OAuth authentication implementation. Authentication and authorization must remain cleanly separated.

## Core Architecture (Required)

- Google OAuth authenticates users only.

- New users are created with role: null.

- After login:

- If role === null → redirect to /onboarding

- If role exists → redirect based on role

- Users without a role must NOT access any other protected route.

- You can enforce RBAC using:

- Next.js Middleware

- Server-side layout protection

- Role must be propagated into JWT and session.

- Do NOT assign role inside the OAuth callback.

## User Model Update

- Add a role field: Enum: PATIENT | DENTIST

- Role Default: null

- Ensure schema validation is correct.

## Onboarding Route (/onboarding)

Create a single-page onboarding flow.

### Access Rules

- Authenticated + role = null → allow

- Authenticated + role exists → redirect to correct dashboard

- Unauthenticated → redirect to /login

### UI Requirements

Must visually match the app’s existing theme.

Provide:

- Clear heading (e.g., “Select Your Role”)

- Role selection (radio or select)

- Short explanation of what each role means

- Submit button

### Submission Logic

- Use a server action or API route.

- Validate authenticated user server-side.

- Update role in MongoDB.

- Redirect:

- PATIENT → /dashboard

- DENTIST → /admin

All updates must be server-side.

## Admin Dashboard (/admin)

Create a simple dentist dashboard.

Requirements:

- Import and reuse the existing sidebar component.

- Render a simple home page inside /admin that clearly shows:

- A message like: “Logged in as DENTIST”

- The user’s name or email (draw inspiration from existing /dashboard)

This page is only for confirming RBAC works DO NOT over-engineer the UI. This route must be fully protected via layout-level server logic.

## Session Configuration (Required)

In Auth.js:

- Add role to JWT in jwt callback.

- Expose role via session.user.role in session callback.

- The role must always be accessible from: `session.user.role`

No client-side database lookups for role.

## Middleware Enforcement

Implement global middleware that:

- Redirects unauthenticated users to /login

- If authenticated AND role is null:

- Allow only /onboarding

- Redirect all other routes to /onboarding

- If authenticated AND role exists:

- Allow access normally

- Prevent users with a role from accessing /onboarding

Middleware acts as a global guard.

## Layout Protection Recap

`/admin` layout

- Only DENTIST allowed

- PATIENT → redirect /dashboard

- Unauthenticated → /login

`/dashboard` layout

- Only PATIENT allowed

- DENTIST → redirect /admin

- Unauthenticated → /login

`/onboarding` layout

- If role exists → redirect appropriately

- If role null → allow

Expected Flow

Google Sign-In

↓

User created (role = null)

↓

Redirect to /onboarding

↓

User selects role

↓

Role saved to DB

↓

Redirect to correct dashboard

Part of that prompt instructed the agent to enforce RBAC with either Next.js middleware or server-side layout protection. On paper, that sounds reasonable. In this repository, though, it collided with the app’s existing session architecture.

The app uses Auth.js with a database session strategy, which means session lookups depend on the database adapter. That becomes relevant because Edge middleware runs in a constrained runtime, and this is exactly the scenario the official Auth.js Edge Compatibility guide warns about. I intentionally left that caveat out of the prompt to see whether the added context would surface the constraint at the right time.

That setup matters because the experiment was not just about whether the agent could finish the task. It was about whether better context would help it avoid an architecture mistake before it crashed.

Setup: Clean repository. No AGENTS.md, no skills. Just the implementation prompt.

The agent’s first move was to launch an exploration subagent to map the repository before writing code. That discovery phase alone consumed roughly 130k tokens and 10 API calls before implementation even started.

Assistant (Build · claude-sonnet-4.5 · 93.8s)

Now let me explore the codebase to understand the current structure before implementing the changes.

Tool: task

Input:

{

"subagent_type": "explore",

"description": "Explore codebase structure",

"prompt": "I need to understand the current project structure for implementing RBAC. Please find and report back:\n\n1. The User model location and current schema\n2. The Auth.js configuration file location and current setup\n3. The current middleware file (if any)\n4. The /dashboard route structure and layout\n5. The sidebar component location that needs to be reused\n6. Any existing TypeScript types for User/Session\n7. The overall app directory structure\n\nUse a \"medium\" thoroughness level. Return the file paths and key details about each."

}

From there, the agent tried multiple enforcement strategies before settling on one. What it landed on was incompatible with the project’s session setup: database sessions combined with middleware in a way that triggered runtime issues. After several debug passes, including a separate Mongoose initialization issue, the feature eventually worked.

The exploration subagent is the clearest symptom of missing context. It is an expensive substitute for the situational awareness that AGENTS.md is supposed to provide upfront.

Total tokens consumed: 2,480,627

Total API calls: 81

Estimated cost: about $2.28

AGENTS.mdSetup: Same repository, same model, same RBAC prompt, but with the following AGENTS.md file added:

Project Overview

Dentist booking platform built with Next.js 14 App Router, TypeScript, and Auth.js (v5).

Agent Guidelines

- Use `pnpm` only; never `npm`.

- Never push to `main`, `master`, or an active dev branch unless explicitly asked; human reviews/pushes.

- Do not run build commands unless explicitly instructed (Ralph loop cases).

- Never add yourself as author, co-author, or committer.

- If a critical project change happens (new feature, auth flow, schema, major route), update `AGENTS.md` in the same task.

- In `AGENTS.md` updates, prioritize concision over grammar; short + clear wins.

Project Commands

- `pnpm dev`: run Next.js dev server

- `pnpm turbo`: run dev server with turbo

- `pnpm build`: build Next.js application

## Technology Stack

- **Package Manager**: pnpm

- **Framework**: Next.js 14 (App Router)

- **Language**: TypeScript

- **Authentication**: Auth.js v5 (`next-auth` 5.0.0-beta.15)

- **Database**: MongoDB

- **Data Modeling**: Mongoose

- **State Management (Client)**: Zustand

- **Styling**: Tailwind CSS

Folder Architecture

```txt

/

├── auth.ts # Auth.js v5 configuration

├── app/

│ ├── models/

│ │ └── userModel.ts # Mongoose user schema

│ ├── api/auth/[...nextauth]/route.ts

│ ├── dashboard/ # Protected dashboard routes

│ ├── (auth)/ # Auth pages

│ └── api/ # API routes

├── lib/

│ ├── mongodb.ts # MongoDB client connection

│ ├── connectdb.ts # Database connection helper

│ └── sendVerificationRequest.ts

└── components/ # React components

```

Authentication

- Library: Auth.js v5 (`next-auth` 5.0.0-beta.15)

- Config: `auth.ts` (root)

- Providers: Google, GitHub, Email

- Session Strategy: Database (via MongoDBAdapter)

- Session Duration: 5 days

Data Layer

- Database: MongoDB

- Connection: `lib/mongodb.ts`

- ORM: Mongoose

User Model

Defined at `app/models/userModel.ts`:

```ts

interface UserDocument {

firstname: string

lastname: string

email: string

password?: string

provider?: string

appointments?: AppointmentDocument[]

}

```

Code Style

- **Imports**: Use `@/*` path alias for project imports

- **Types**: Prefer `type` over `interface`; use TypeScript strict mode

- **Components**: Use functional components with typed props

- **Formatting**: Prettier with default config; double quotes for strings

- **Class Names**: Use `cn()` utility from `@/lib/utils`

- **Variants**: Use `class-variance-authority` for component variants

- **Naming**: PascalCase for components, camelCase for functions, kebab-case for files

- **Exports**: Named exports for components and utilities

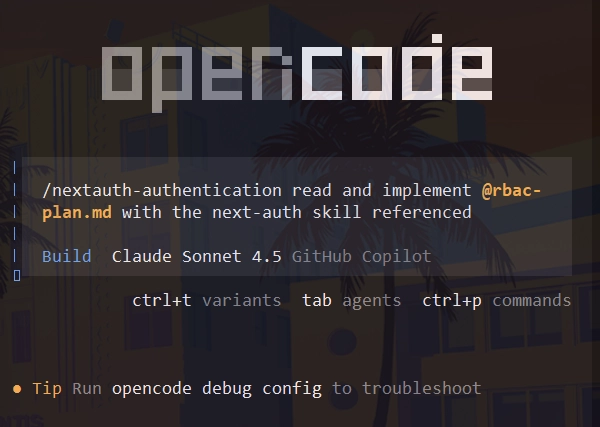

The difference was immediate. Instead of launching an exploration subagent, the agent began with targeted reads of userModel.ts and auth.ts, which were the two most relevant files for the task.

User:

read and implement @rbac-plan.md

Assistant (Build · claude-sonnet-4.5 · 11.3s)

I'll implement the RBAC system with onboarding architecture as specified. Let me start by creating a comprehensive todo list to track this implementation.

Tool: todowrite

Input:

{

"todos": [

{

"content": "Update User model to add role field (PATIENT | DENTIST | null)",

"status": "pending",

"priority": "high"

}

]

}

Because the file already exposed the user model location, auth config, package manager, and folder layout, the agent skipped the discovery phase and moved straight into implementation. It still hit the Edge runtime incompatibility later and needed one debug iteration to recover, but the run remained much cleaner than the no-context session.

One interesting side effect was UI quality. This run produced the best onboarding page and admin dashboard of the three sessions, despite using the same model and task prompt.

AGENTS.md removed the most expensive part of the session: broad discovery. The agent read only what it needed, then started implementing.

Total tokens consumed: 1,175,645

Total API calls: 42

Debug iterations: 1

Estimated cost: about $1.08

This was the best balance of cost, speed, and reliability across the three experiments.

Skills can be configured either per project or globally. Project-level skills live inside the repository and are versioned with the codebase. Global skills live on your machine and are available across projects.

Project-level skills are the right fit for workflows tied tightly to one codebase, such as a migration process, a component pattern, or project-specific testing rules. Global skills work better for broader practices, such as auth patterns, code review checklists, or documentation workflows.

Different editors also support different discovery paths. For example:

# Claude Code / Claude-compatible editors Project: .claude/skills/<name>/SKILL.md Global: ~/.claude/skills/<name>/SKILL.md # OpenCode Project: .opencode/skills/<name>/SKILL.md Global: ~/.config/opencode/skills/<name>/SKILL.md # Gemini CLI / Gemini-compatible setups Project: .gemini/skills/<name>/SKILL.md Global: ~/.gemini/skills/<name>/SKILL.md

In practice, I recommend standardizing around an agents folder when the tooling allows it. It is the most portable naming convention and makes skills easier to carry across editors and runtimes.

SKILL.md, but keep the instruction file itself focusedFor this experiment, I used a nextauth-authentication skill that documented session strategies, provider setup, TypeScript extensions, and the split-config pattern needed when database sessions interact with Edge middleware.

Setup: Same repository, same model, same RBAC prompt, plus the AGENTS.md file from Experiment 2. I also installed the nextauth-authentication skill globally.

npx skills add https://github.com/mindrally/skills --skill nextauth-authentication

Like the AGENTS.md session, this run did not need an exploration subagent. It started with targeted reads of auth.ts and userModel.ts, then moved into implementation.

The agent built the onboarding flow, admin dashboard, and middleware. But it then made the critical mistake the skill was supposed to prevent: it imported and called auth() from @/auth inside middleware, pulling database-session logic into an Edge-sensitive path. That produced the runtime failure the experiment was designed to expose.

After I reported the error, the agent did reference the skill and apply the split-config pattern. It created an Edge-compatible auth.config.ts and updated middleware to use it. But that fix did not stick. Later in the session, the implementation shifted again: middleware was simplified to handle only public-route logic, and the heavier auth enforcement moved into server-side layouts instead.

That eventually avoided the Edge runtime conflict, but only after more iteration. Two more bugs then surfaced:

models.User instead of mongoose.models.UserBoth were fixed, and the feature worked by the end of the session. But the overall path was far less efficient than the AGENTS.md-only run.

This was the most interesting part of the experiment. The skill contained the right guidance. It just did not get applied proactively.

I even reran the task with a stronger instruction telling the agent to treat the skill as authoritative, list the constraints first, and explain how its design satisfied them before coding. The agent did list the Edge incompatibility that time. It still made the same implementation mistake later.

That points to a real limitation of skills: they are not enforcement. They are context. And context still has to be synthesized and applied by the model.

The skill improved the eventual architecture, but it did not guarantee good early decisions. In fact, it produced the most sophisticated final implementation at the highest cost.

Total tokens consumed: 6,013,893

Total API calls: 108

Debug iterations: 3

Estimated cost: about $5.57

Skills can increase architectural quality, but they do not reliably prevent high-cost errors unless the model consistently applies them at the right time.

AGENTS.md vs. agent skills: Which should you use?If you are deciding where to start, the experiments point to a practical answer.

Use AGENTS.md first when you want a lightweight, persistent way to reduce discovery cost and improve adherence to project conventions. It gives you the best return for the least effort.

Add skills when you have repeatable workflows or high-stakes patterns that benefit from deeper task-specific guidance. They are most useful when the workflow is narrow, important, and likely to recur across sessions.

Avoid treating skills as a substitute for repository-level clarity. The AGENTS.md run was the cheapest and cleanest because it improved the agent’s first decisions. The skills run produced the strongest final architecture, but only after expensive recovery cycles.

So the practical sequence is:

AGENTS.mdThis also lines up with a broader pattern in agentic development: faster generation does not remove the need for better control and review. LogRocket’s analysis of why AI coding tools shift the real bottleneck to review gets at the same underlying issue from a different angle.

Before getting into the raw metrics, the clearest way to read the experiments is by decision profile:

| Approach | What improved most | Main weakness | Best fit |

|---|---|---|---|

| No added context | None beyond a clean baseline for comparison | High discovery cost and more architectural thrash | Testing how an agent behaves from scratch |

AGENTS.md only |

Speed, cost efficiency, and implementation stability | Still depends on the model to catch deeper architecture constraints | Most day-to-day repository work |

AGENTS.md plus skills |

Final architectural sophistication | Highest cost and more recovery cycles when the skill is applied late | Narrow, repeatable, high-stakes workflows |

The raw metrics reinforce that pattern:

| Metric | No context | With AGENTS.md |

With skills |

|---|---|---|---|

| Total tokens | 2,480,627 | 1,175,645 | 6,013,893 |

| API calls | 81 | 42 | 108 |

| File reads | 15 | 13 | 25 |

| New files written | 9 | 6 | 10 |

| File edits | 17 | 6 | 23 |

| Debug iterations | 3+ | 1 | 3 |

| First implementation appeared | Turn 5 | Turn 12 | Turn 1 |

| Final code sophistication | Low | Medium | High |

| Estimated cost | $2.28 | ~$1.08 | ~$5.57 |

The “first implementation appeared” row is worth reading carefully. A lower number does not automatically mean a better run. The skills session started writing code on Turn 1, but still needed multiple debug cycles. The AGENTS.md session wrote later, but the implementation path was much more stable.

The numbers show us a pretty straightforward result:

AGENTS.md delivered the best return on investment: it cut token use by more than half relative to the no-context run and kept debugging limitedAGENTS.md and skillsAGENTS.md can be nearly as harmful as no file at all because important constraints get buried in noise.env files into logs or prompts if you do not scope access carefullyOne useful discipline is to treat both AGENTS.md and skills as production artifacts. They should be versioned, reviewed, and pruned just like code.

AGENTS.md files and agent skills help coding agents move beyond generic code generation and toward reliable, project-aware execution. In practice, they improve how agents understand repository structure, follow team conventions, respect architectural constraints, and complete recurring workflows with less drift.

The experiments in this article also make an important distinction: these tools are not interchangeable. AGENTS.md is the strongest default because it improves the beginning of the session, when agents are trying to understand the codebase and when costly mistakes often start. Agent skills are more effective as targeted enhancements for workflows that are narrow, repeatable, and valuable enough to justify the added context.

For most teams, the best approach is to start with a concise, high-signal AGENTS.md that defines architecture, constraints, conventions, and expectations clearly. Once that foundation is working, add a small number of tightly scoped agent skills for tasks where coding agents repeatedly lose time, make the same mistakes, or need deeper procedural guidance.

The teams that get the most value from agentic coding will not be the ones with the longest prompts or the most documentation. They will be the ones that practice deliberate context engineering, giving coding agents the right information at the right level of specificity so they can produce faster, more consistent, and more reliable results.

Learn how to build advanced Next.js forms with rule engines, client-side previews, Server Actions, and server-validated form logic.

AI is reshaping engineering teams emotionally as well as technically. A CTO shares insights on fear, trust, burnout, identity, and leading through AI change.

Learn what context rot is, why AI agent sessions degrade over time, and how to fix it with compaction, prompt anchoring, context files, plan files, and RAG.

Learn about TypeScript v6’s breaking changes, new ES2025 features, and deprecated options. A complete migration guide from v5 to prepare for v7.

Hey there, want to help make our blog better?

Join LogRocket’s Content Advisory Board. You’ll help inform the type of content we create and get access to exclusive meetups, social accreditation, and swag.

Sign up now